With the new S4HANA custom code migration FIORI app, you can include usage data from your productive system to see which code blocks are used and which are not.

This blog answers the following questions:

- How do you collect usage data from the productive system?

- How do you include the usage data in the S4HANA custom code migration FIORI app?

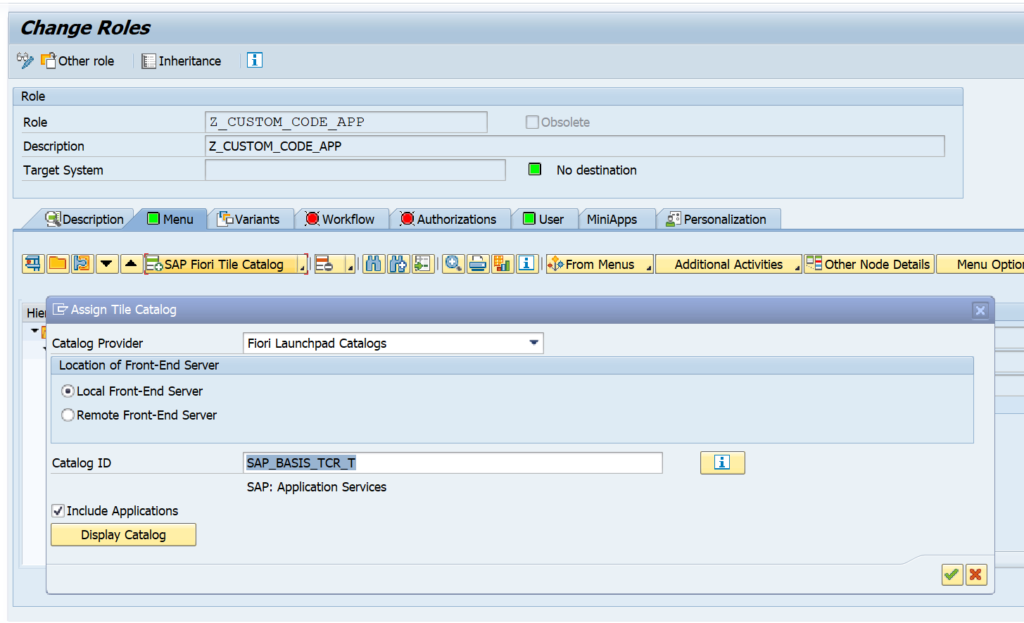

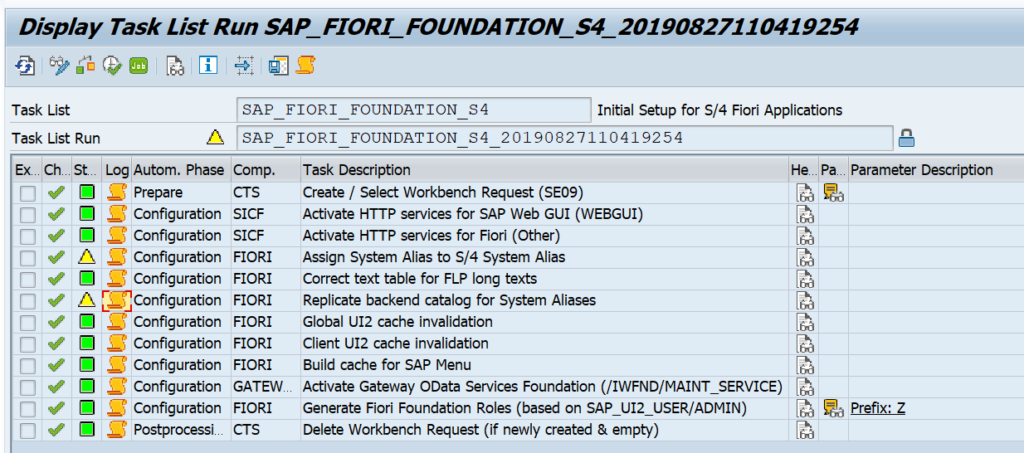

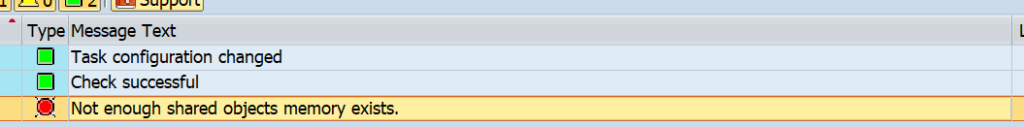

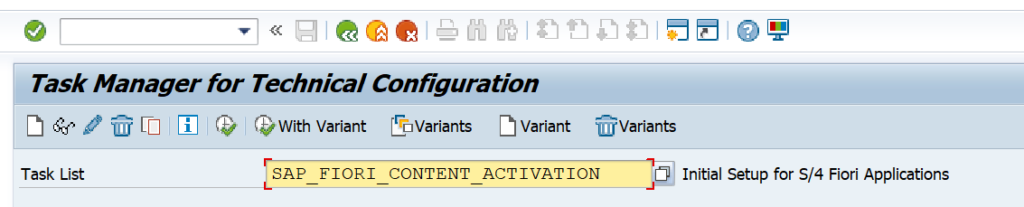

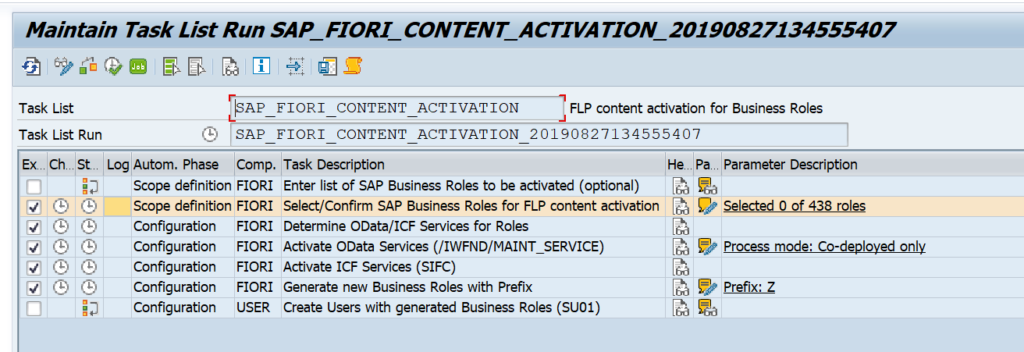

This blog assumes you have already set up the S4HANA custom code migration FIORI app. If you have not done this yet, follow the instructions in this blog.

Collecting usage data in production with transaction SUSG

General recommendations for using transaction SUSG can be found in OSS note 2701371 – Recommendations for aggregating usage data using transaction SUSG. SUSG requires that the ABAP call monitor (SCMON) is already activated. If it is not, read this blog first.

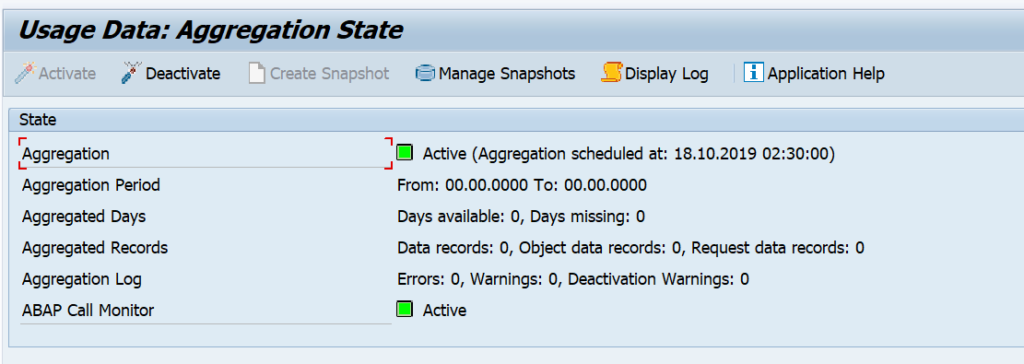

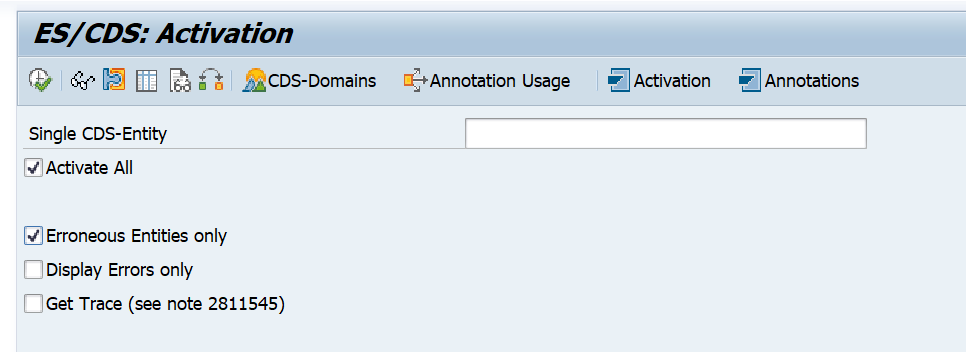

In your productive system, start transaction SUSG and activate the usage data aggregation:

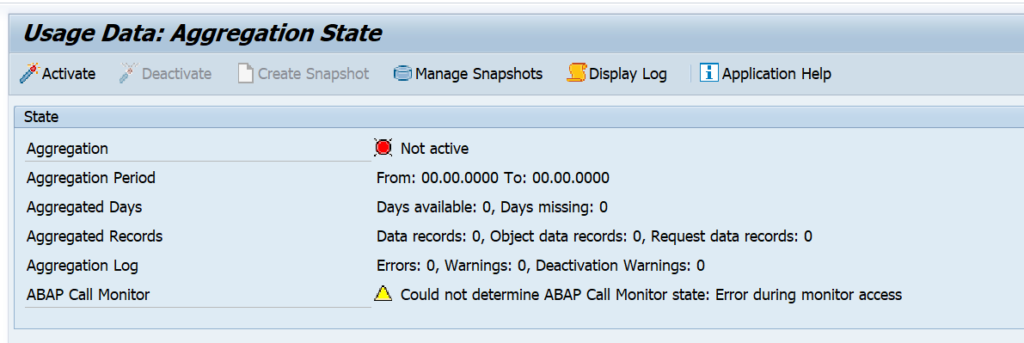

If you don’t have sufficient authorizations, you might get this weird screen:

If you see this screen, first check your user authorizations.

SUSG performance impact

The performance impact of SUSG itself is negligible. SCMON can have some impact; see the blog on SCMON.

Background: OSS note 3100194 – Memory Requirement and Performance Impact of transaction SUSG.

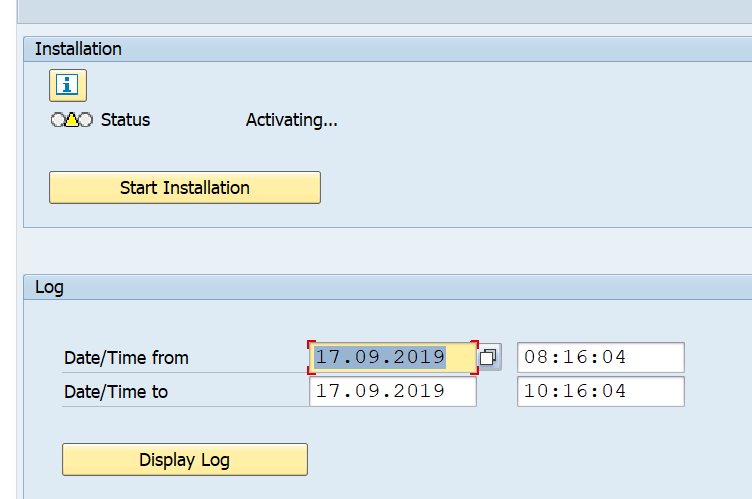

SUSG installation

If SUSG does not start in your productive system, it must be installed first. To install SUSG, apply OSS note 2643357 – Installation of Transaction SUSG. This is a TCI-based OSS note (see blog).

After the TCI note, also apply these OSS notes:

- 2822357 – SUSG: Robustness of transaction SUSG

- 2830186 – SUSG: Runtime of SUSG_COLLECT_FROM_SCMON

- 3235725 – SCMON / SUSG performance improvement for 7.50

- 3244347 – SUSG: Disable actions for read only authorization

- 3317852 – SUSG: Provide options for snapshot creation

- 3354462 – SUSG: Program to Delete Request Entry Points by Pattern

- 3428495 – Speed up download of SUSG snapshot

- 3455813 – SUSG: File not found in ZIP during upload
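To keep track of which of these notes are still open in a given system, a small checklist helper can be useful. The sketch below is a hypothetical helper, not an SAP tool. It assumes you have a list of implemented note numbers for the system, for example copied by hand from SNOTE. Note numbers with leading zeros (as SNOTE displays them) are normalized before comparison.

```python
# SUSG notes named in this blog: the TCI installation note plus the follow-up corrections.
SUSG_NOTES = {
    "2643357": "Installation of Transaction SUSG (TCI note)",
    "2822357": "SUSG: Robustness of transaction SUSG",
    "2830186": "SUSG: Runtime of SUSG_COLLECT_FROM_SCMON",
    "3235725": "SCMON / SUSG performance improvement for 7.50",
    "3244347": "SUSG: Disable actions for read only authorization",
    "3317852": "SUSG: Provide options for snapshot creation",
    "3354462": "SUSG: Program to Delete Request Entry Points by Pattern",
    "3428495": "Speed up download of SUSG snapshot",
    "3455813": "SUSG: File not found in ZIP during upload",
}


def missing_susg_notes(implemented):
    """Return the SUSG notes from SUSG_NOTES that are not in `implemented`.

    `implemented` is an iterable of note numbers (str or int). Getting this list,
    e.g. by copying it from SNOTE, is an assumption of this hypothetical helper.
    Leading zeros such as "0002643357" are stripped before comparing.
    """
    done = {str(n).strip().lstrip("0") for n in implemented}
    # Keep the order of the list in the blog.
    return [note for note in SUSG_NOTES if note not in done]


if __name__ == "__main__":
    # Hypothetical input: only the TCI note and the first correction are applied.
    for note in missing_susg_notes(["0002643357", 2822357]):
        print(note, SUSG_NOTES[note])
```

Run it once per system, so you do not miss a correction in either one.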

Creating the snapshot

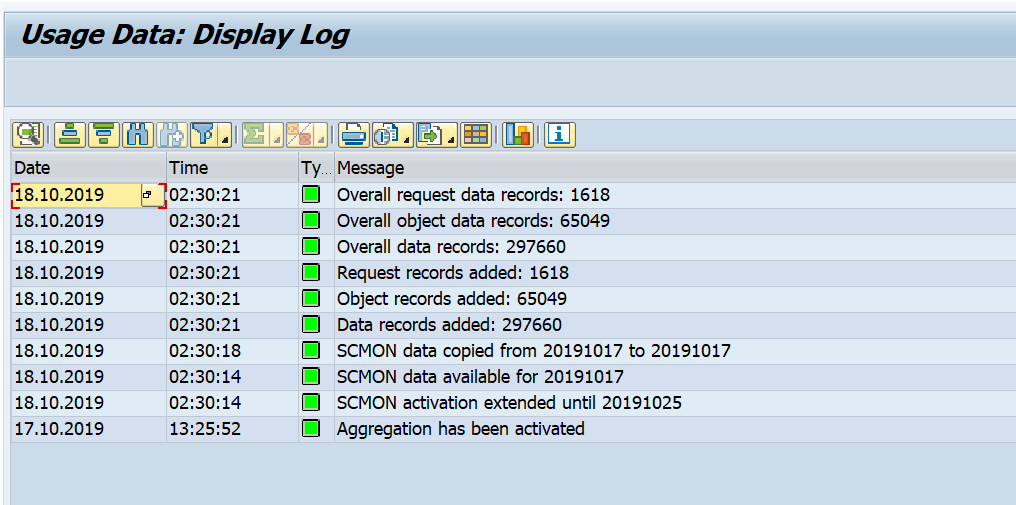

Now that data collection and aggregation are activated, you need to be patient. Let the system collect data for a few days. Then go to transaction SUSG and check the log to confirm that the aggregation ran successfully:

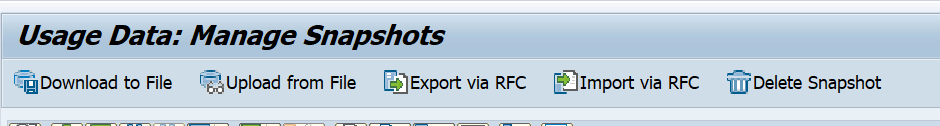

Now you can create a snapshot in the Manage Snapshots section:

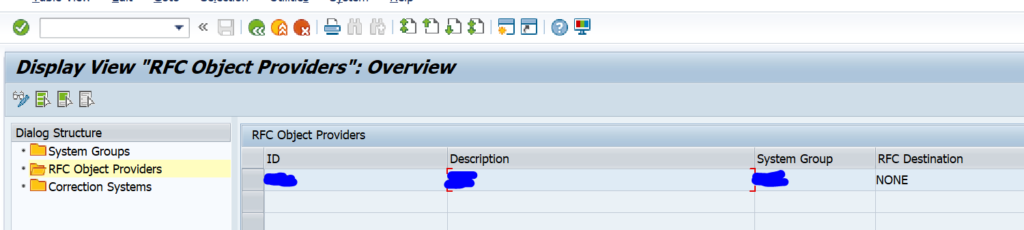

Create the snapshot and download it to a file on your desktop or laptop. If you prefer, you can also set up an RFC connection instead.

Security and Basis teams are usually reluctant to allow RFC connections from a production system to a non-production system, so the file option is normally the best way.

Loading the data into your upgraded S4HANA system

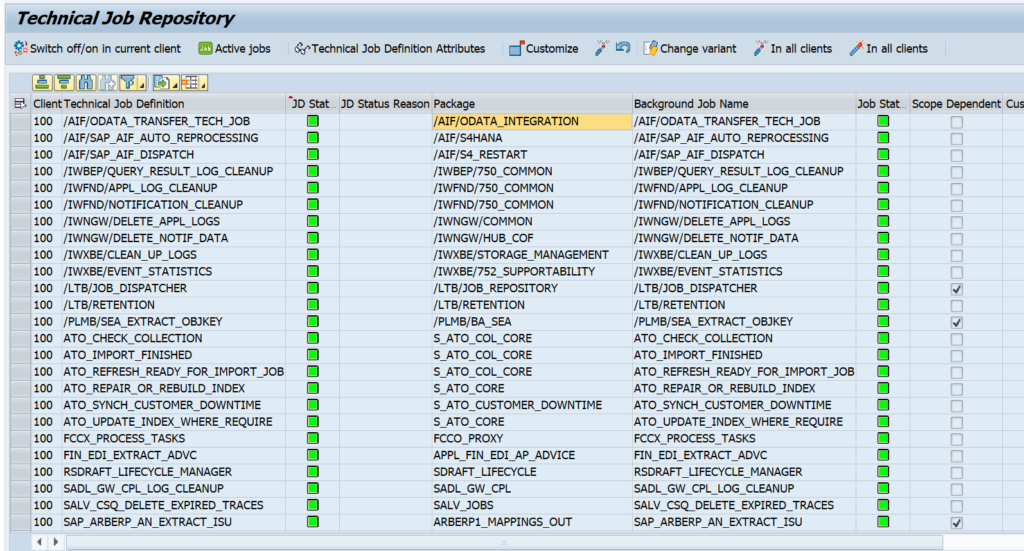

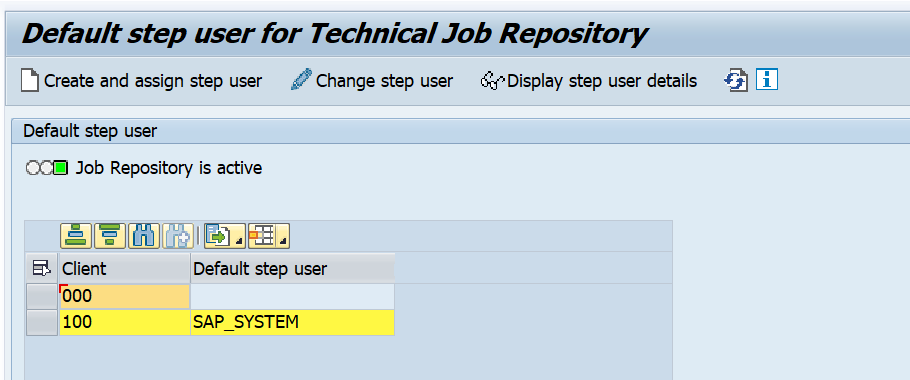

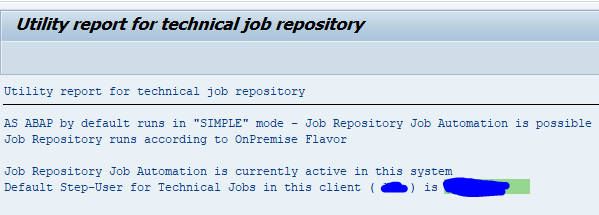

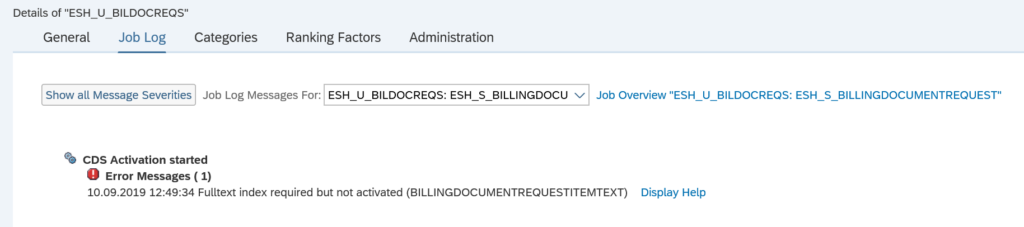

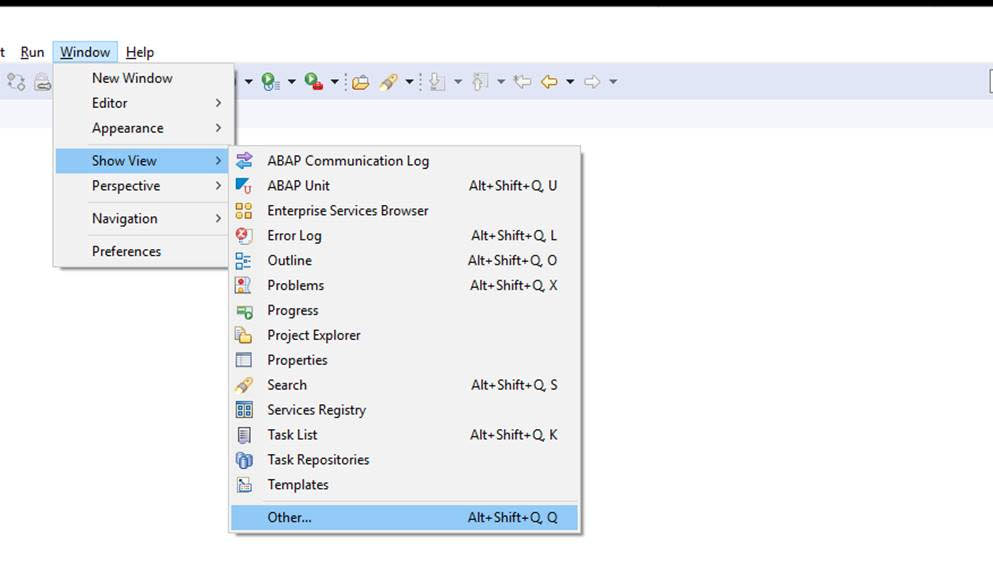

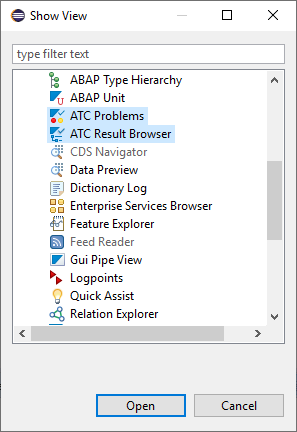

In the S4HANA system where your custom code analysis runs, start transaction SUSG and make sure it is active. Then upload the snapshot file you downloaded from the productive system in the previous step.

Make sure the same SUSG OSS notes are applied in both your productive system and your S4HANA system. Some of these notes change the format of the download file, so if the notes are not applied identically, you will run into file format errors during the upload. The recommendation is to apply all recent SUSG notes to both the productive system and the S4HANA system.
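Before uploading, you can compare the note levels of the two systems and catch a file format mismatch early. The sketch below is a hypothetical helper, not part of SUSG. It assumes you have a list of implemented SUSG-related note numbers for each system, for example copied from SNOTE. It reports the notes that are applied in only one of the two systems.

```python
def compare_note_levels(prod_notes, s4_notes):
    """Report note numbers that are applied in only one of the two systems.

    Both arguments are iterables of note numbers (str or int). Collecting them
    (e.g. from SNOTE in each system) is an assumption of this hypothetical helper.
    """
    def normalize(notes):
        # Strip whitespace and leading zeros so "0002643357" matches 2643357.
        return {str(n).strip().lstrip("0") for n in notes}

    prod, s4 = normalize(prod_notes), normalize(s4_notes)
    return {
        "only_in_production": sorted(prod - s4, key=int),
        "only_in_s4hana": sorted(s4 - prod, key=int),
    }


if __name__ == "__main__":
    diff = compare_note_levels(["2643357", "3455813"], ["0002643357"])
    if any(diff.values()):
        print("Note levels differ, align them before uploading the snapshot:", diff)
```

If both lists in the result are empty, the SUSG note levels match and the snapshot upload should not fail because of a note-related format difference.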

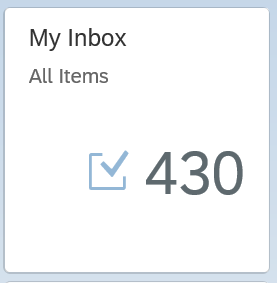

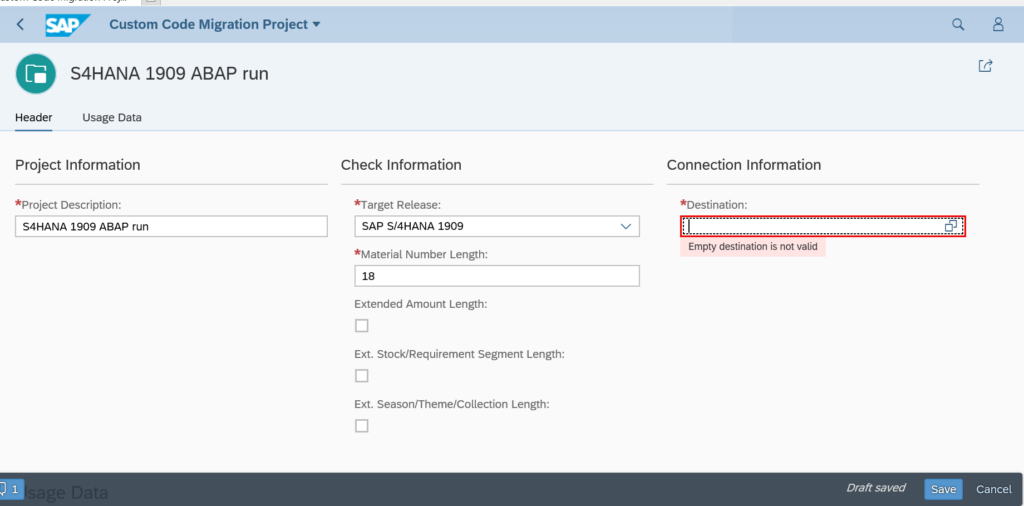

S4HANA custom code migration app with usage data

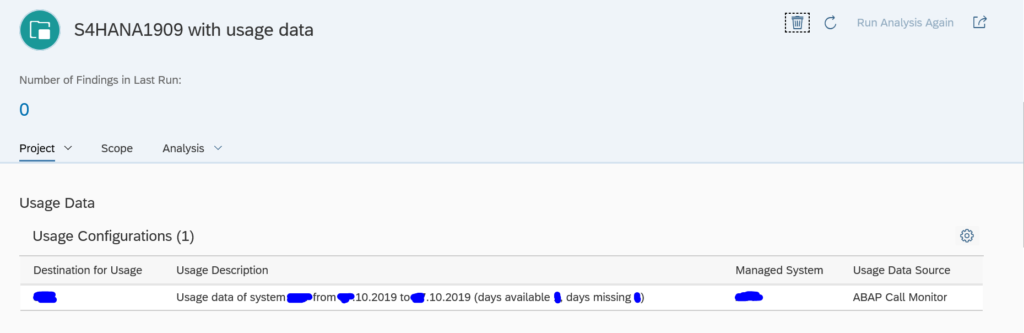

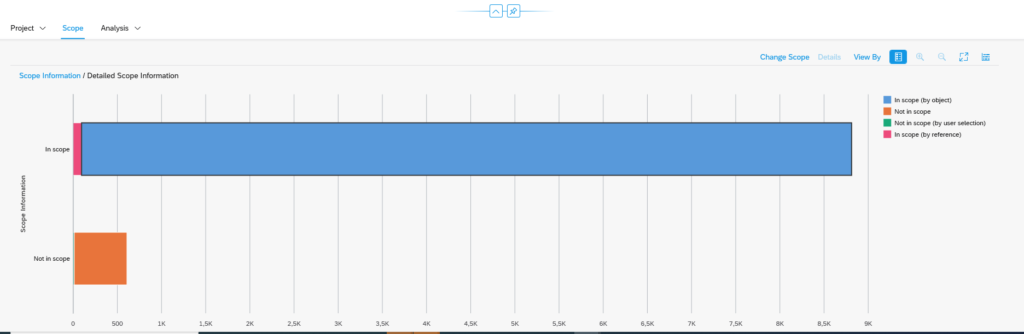

Now you can finally launch the S4HANA custom code migration app and create a new analysis. In the usage data section of the analysis, assign the snapshot you uploaded in the previous section:

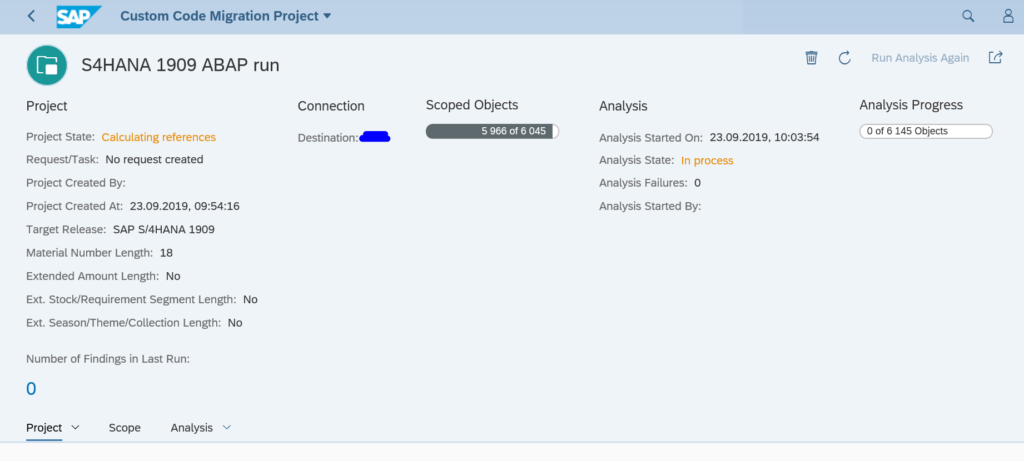

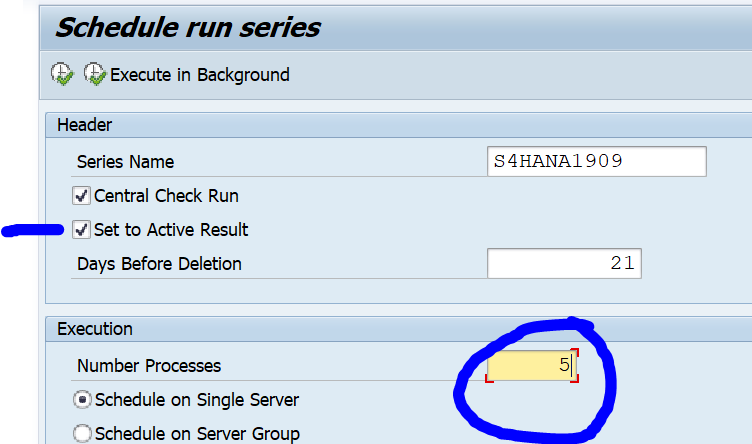

Now start the custom code analysis and let it run.

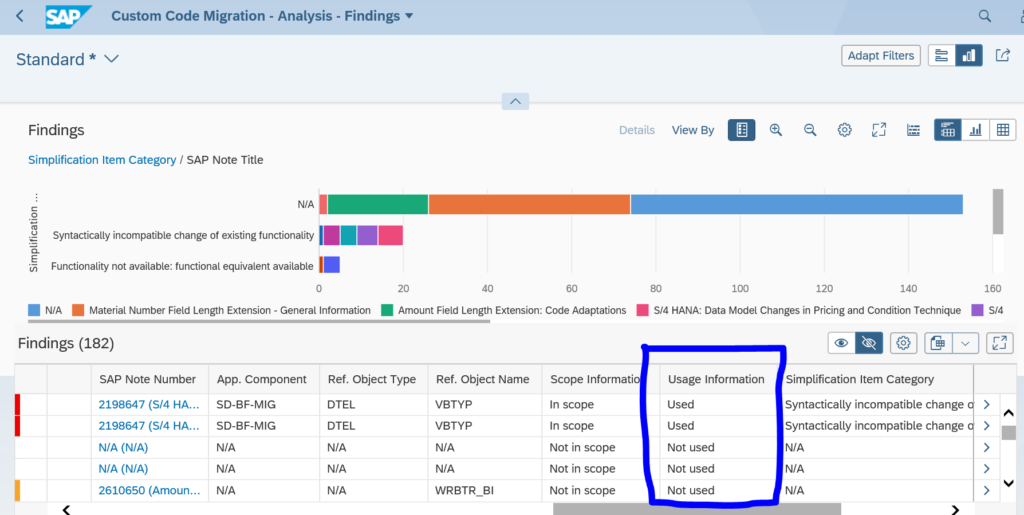

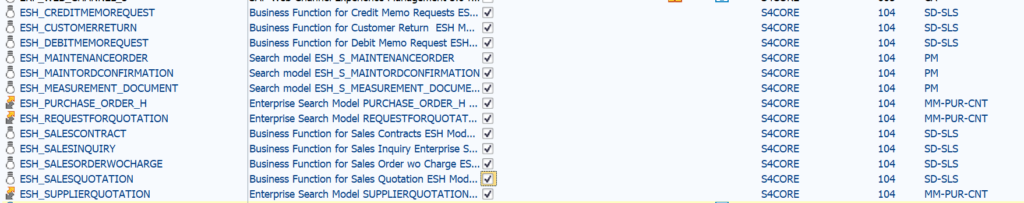

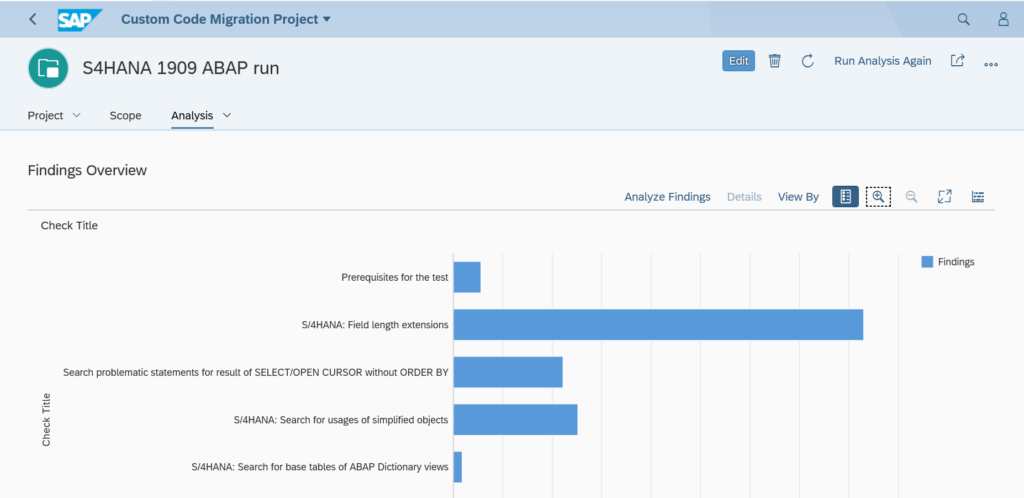

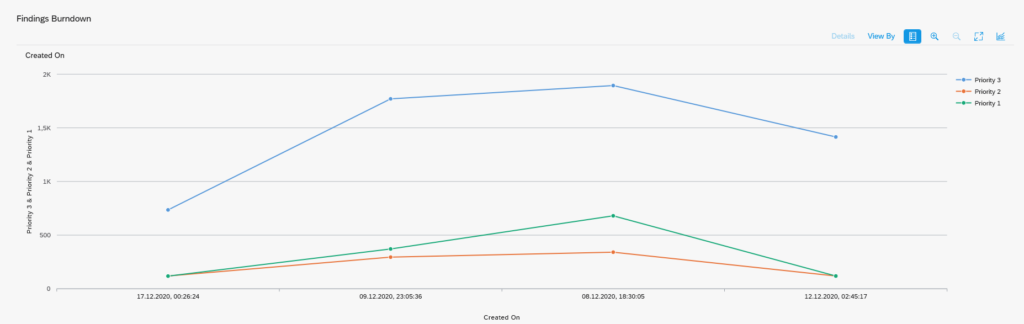

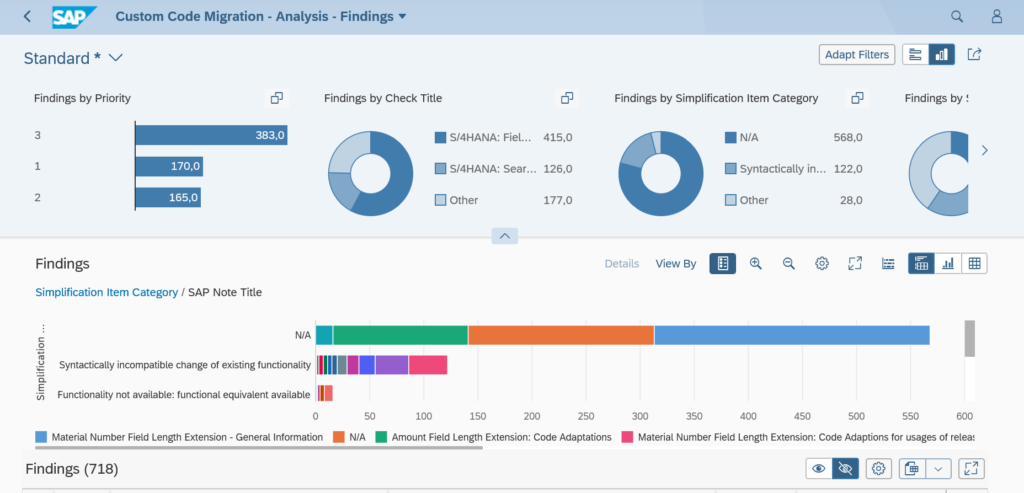

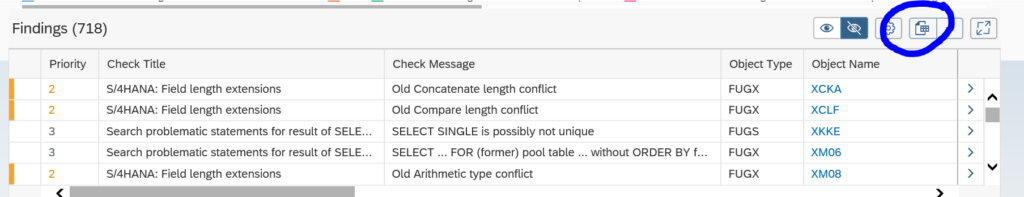

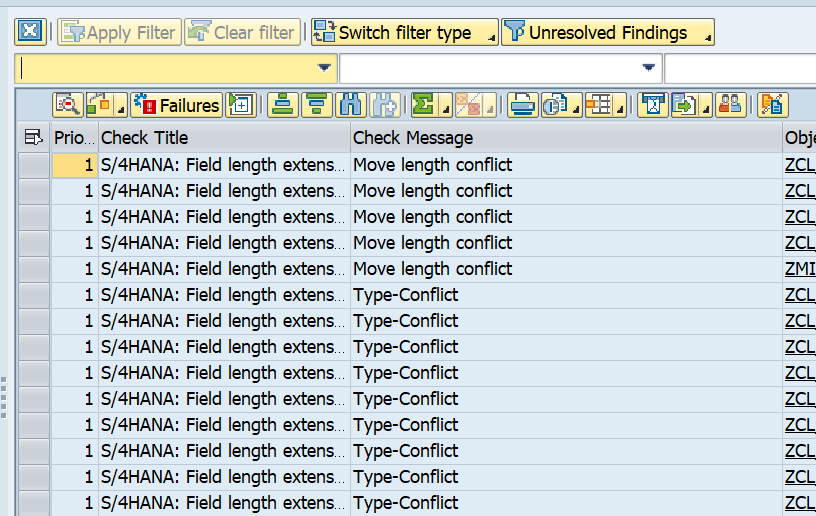

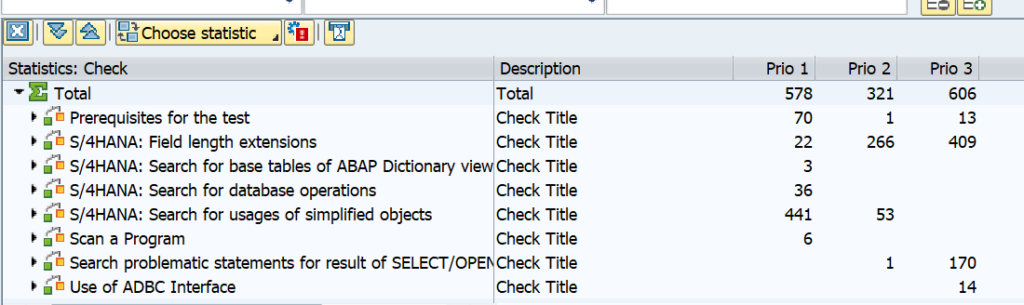

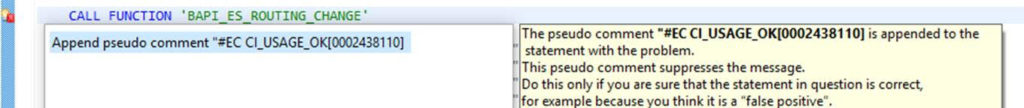

The Usage Information column in the Analyze Findings section shows whether each piece of code is used or not:
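You may want a quick overview of how many findings fall into each usage category, for example to decide which code to adapt first and which to consider for deletion. The sketch below counts findings per value of the Usage Information column. It assumes you have exported the findings list to a comma-separated file with a header row that contains that column name. The export format and exact header are assumptions and are not documented here. The sketch does not assume any particular usage values. It simply counts whatever values appear in that column.

```python
import csv
from collections import Counter


def usage_summary(lines, usage_column="Usage Information"):
    """Count findings per value of `usage_column` in an exported findings list.

    `lines` is any iterable of CSV text lines (an open file works). The CSV
    layout and column header are assumptions of this sketch. Empty or missing
    cells are counted under "(empty)".
    """
    counts = Counter()
    for row in csv.DictReader(lines):
        value = (row.get(usage_column) or "").strip()
        counts[value or "(empty)"] += 1
    return counts


if __name__ == "__main__":
    # "findings.csv" is a hypothetical export file name.
    with open("findings.csv", newline="", encoding="utf-8") as f:
        for value, count in usage_summary(f).most_common():
            print(f"{count:6d}  {value}")
```

Passing an iterable of lines instead of a file name lets you feed the function an open file or an in-memory list.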

See also OSS note 3505318 – How to start SCMON/SUSG on custom code migration app?

Different view of usage

OSS note 3410478 – How to utilize and display usage data collected in SUSG? explains how to use view SUSG_I_DATA.

Background information

More background on SUSG setup can be found in this blog.

Deletion of SUSG data

After applying OSS note 3130631 – SUSG: Report to delete programs from usage data, you can delete SUSG data with program SUSG_DELETE_PROGRAMS. This cleans up table SUSG_DATA.