SAP GUI for Windows is available in a 32-bit and a 64-bit version. The advantage of the 64-bit version is better performance. The drawback is its dependency on, and compatibility with, 64-bit Microsoft Office products.

Both versions cannot be installed on the same machine: it is either the 32-bit or the 64-bit version.
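To check which edition is present on a workstation, a minimal Python sketch could look for `saplogon.exe` in the default install folders. The two paths below are assumptions based on the usual defaults (32-bit under `Program Files (x86)`, 64-bit under `Program Files`); a custom installation path will not be detected, and a leftover folder from an uninstalled edition could produce a false report. The helper name `installed_sapgui_bitness` is hypothetical.

```python
import os
from typing import Callable, Optional

# Assumed default locations of saplogon.exe; custom install paths are not covered.
DEFAULT_PATHS = {
    32: r"C:\Program Files (x86)\SAP\FrontEnd\SAPgui\saplogon.exe",
    64: r"C:\Program Files\SAP\FrontEnd\SAPGUI\saplogon.exe",
}


def installed_sapgui_bitness(
    exists: Callable[[str], bool] = os.path.exists,
) -> Optional[int]:
    """Return 32 or 64 depending on which default path exists, or None if neither.

    Raises RuntimeError if both paths are found, because only one edition
    can be installed on a machine.
    """
    found = [bits for bits, path in DEFAULT_PATHS.items() if exists(path)]
    if len(found) > 1:
        raise RuntimeError("Both 32-bit and 64-bit SAP GUI paths found")
    return found[0] if found else None
```

On a workstation, `installed_sapgui_bitness()` returns 32, 64 or `None`; passing a custom `exists` function lets the logic be tried without a Windows machine.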

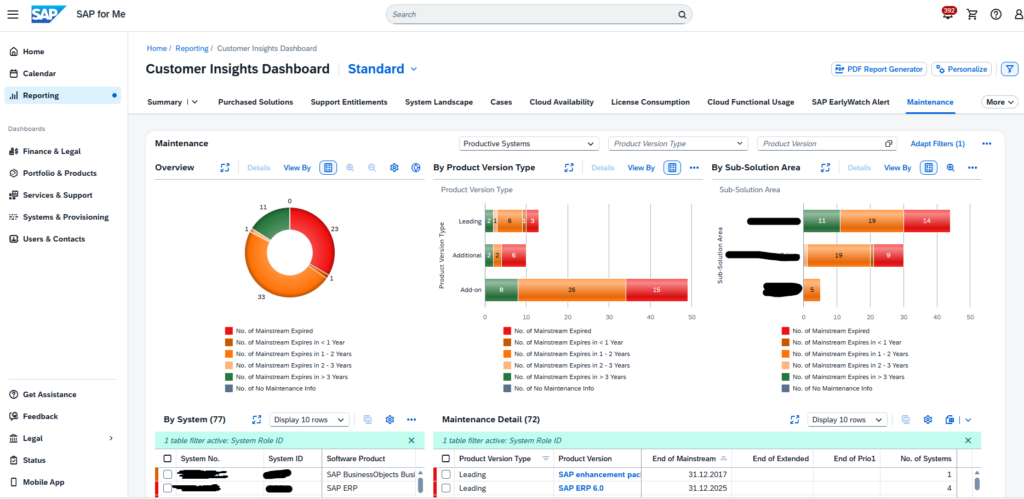

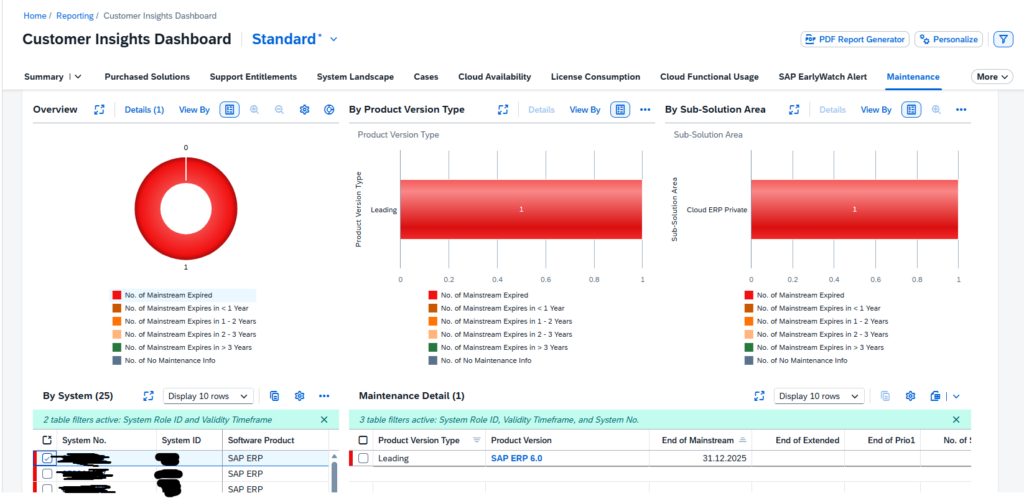

Download location: OSS note 3398259 – Where to download 64-bit patches for SAP GUI for Windows 800 (SAP for Me).

Keep track of the SAP GUI build in this blog. The information below is valid for SAP GUI 8.0; with the upcoming SAP GUI 8.10 it might change.

Differences between 32-bit and 64-bit

The main differences are described in OSS note 3218166 – SAP GUI for Windows: Functional differences of the 64bit version compared to the 32bit version.

The better performance of the controls and download functions is described in OSS note 2724656 – SAP GUI NWRFC Controls: 64bit support for Logon, Table, Function and BAPI controls (SAP for Me).

Compatibility issues

The Office compatibility issues are described in the following OSS notes:

- 3192327 – SAP GUI Desktop Office Integration: CreateDocument is failing for older document formats (doc,xls,etc) with 64bit SAP GUI version and 32bit Microsoft Office version

- 3448111 – SAPGUI for windows 8.0 64/32bit compatibility issues with Office.

- 3479996 – SAP GUI for Windows Desktop Office Integration: Unable to open MS office applications inside SAP GUI.

- 3570272 – ALV Grid “Word Processing” export does not work with SAP GUI 64bit and 32bit Microsoft Office

Basic rule: when the 64-bit SAP GUI has to work with Office products, make sure the Office products are installed in their 64-bit version as well.
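Before rolling out the 64-bit SAP GUI, the Office bitness can be checked up front. The sketch below assumes a Click-to-Run Office installation (for example Microsoft 365 Apps), which records its bitness as `x86` or `x64` in the `Platform` value under `HKLM\SOFTWARE\Microsoft\Office\ClickToRun\Configuration`. It reads that value with Python's standard `winreg` module and then applies the basic rule. MSI-based Office installations are not covered, and the function names are hypothetical.

```python
import sys
from typing import Optional

# Assumption: Click-to-Run Office stores "x86" or "x64" in this key's "Platform" value.
C2R_KEY = r"SOFTWARE\Microsoft\Office\ClickToRun\Configuration"


def office_platform() -> Optional[str]:
    """Return "x86" or "x64" for a Click-to-Run Office install, or None if unknown."""
    if sys.platform != "win32":
        return None  # winreg is only available on Windows
    import winreg

    try:
        # KEY_WOW64_64KEY reads the 64-bit registry view even from 32-bit Python.
        with winreg.OpenKey(
            winreg.HKEY_LOCAL_MACHINE, C2R_KEY, 0,
            winreg.KEY_READ | winreg.KEY_WOW64_64KEY,
        ) as key:
            value, _ = winreg.QueryValueEx(key, "Platform")
            return str(value)
    except OSError:
        return None  # key or value missing, e.g. no Click-to-Run Office


def check_basic_rule(sapgui_bits: int, office: Optional[str]) -> str:
    """Apply the basic rule: 64-bit SAP GUI should be paired with 64-bit Office."""
    if sapgui_bits != 64:
        return "n/a"  # the rule only covers the 64-bit SAP GUI
    if office is None:
        return "unknown"  # Office bitness could not be determined
    return "ok" if office.lower() == "x64" else "mismatch"
```

A call such as `check_basic_rule(64, office_platform())` returns `"mismatch"` on a machine with 32-bit Click-to-Run Office, which is the combination the OSS notes above warn about.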