On production systems you need to be very careful when running clean up programs: cleaning up data there requires proper analysis and takes time to do carefully.

On a sandbox, development or test system, planning and launching all the different clean up programs can also take quite some time, sometimes more than the storage gain is worth.

Solution: install the Z mass clean up program below.

Install and run this program at your own risk!

The program triggers several clean up programs. If you want to change anything, you can do so at your own risk.

There are 2 built-in failsafes:

- Authorization check on S_ADMI_FCD with value NADM (basis admin)

- Check that blocks the run on a productive client (based on the SCC4 client settings)

Each clean up program is started immediately as a batch job. For the daily and hourly jobs, the next run is then scheduled automatically.
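Because `start_job` uses the program name as the job name, one way to confirm what was scheduled is to look for released periodic jobs with those names, either in the job overview (SM37) or directly in table TBTCO. The following is a minimal sketch only: the report name `zmasscleanup_check` and the short list of job names are illustrative assumptions, not part of the original program.

```abap
REPORT zmasscleanup_check.

" Hypothetical helper report: lists released periodic jobs whose names
" match some of the clean up programs. Extend the name list as needed.
SELECT jobname, jobcount, sdlstrtdt, sdlstrttm
  FROM tbtco
  INTO TABLE @DATA(zlt_jobs)
  WHERE jobname IN ('RSTBPDEL', 'SBAL_DELETE', 'RSBTCDEL2')
    AND periodic = 'X'   " job repeats
    AND status = 'S'.    " S = released

IF sy-subrc <> 0.
  WRITE: / 'No released periodic clean up jobs found.'.
ENDIF.

LOOP AT zlt_jobs INTO DATA(zls_job).
  " Job name, job count, planned start date and time
  WRITE: / zls_job-jobname, zls_job-jobcount,
           zls_job-sdlstrtdt, zls_job-sdlstrttm.
ENDLOOP.
```

If a job is missing from the list, the output of the mass clean up program itself shows whether the variant creation or the job scheduling failed for that program.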

Objects, Programs, job frequency and references:

| Object | Program(s) | Job frequency | Reference |

| Table logging | RSTBPDEL | Daily | Table logging |

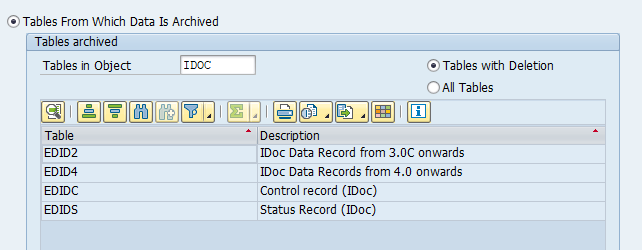

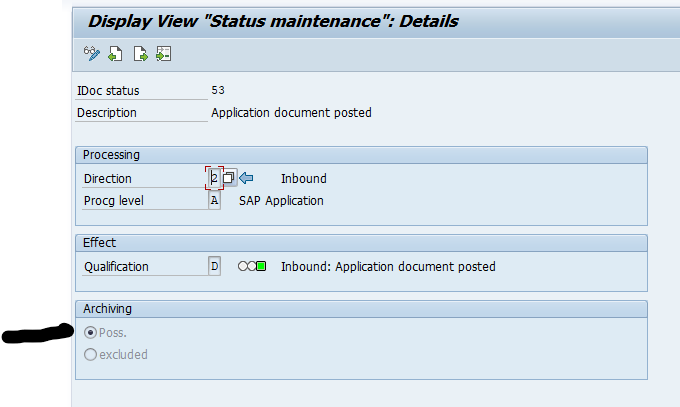

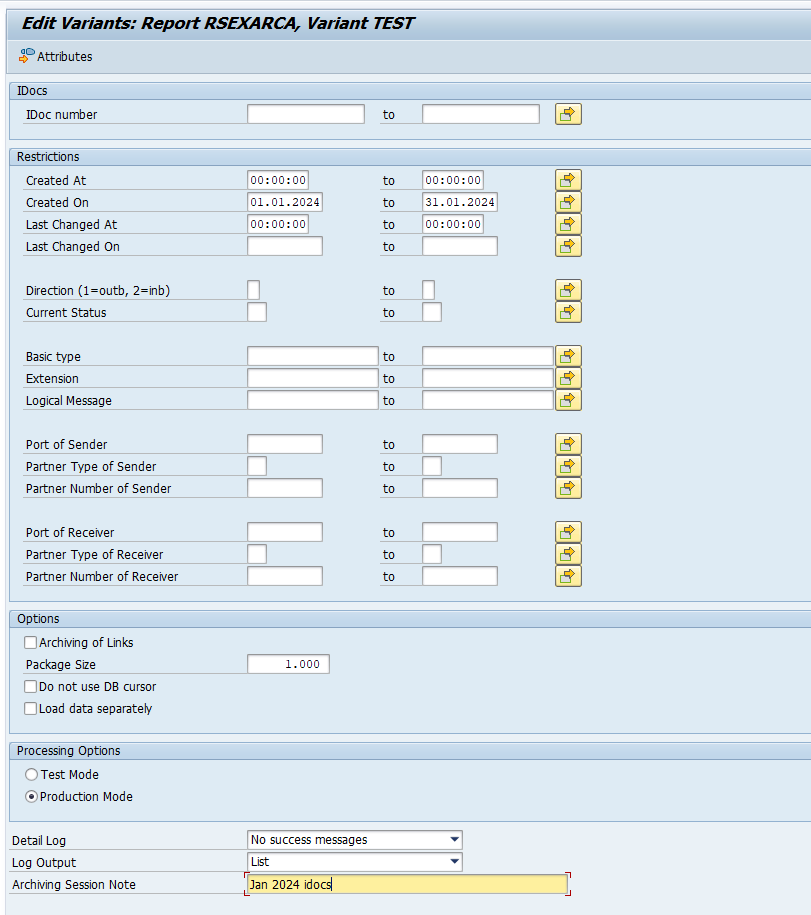

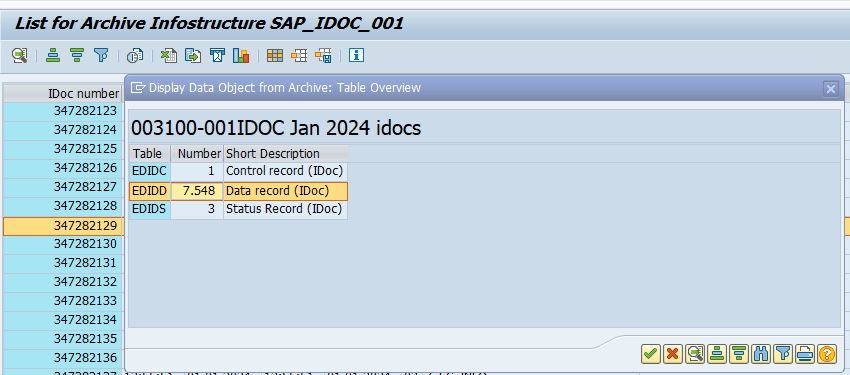

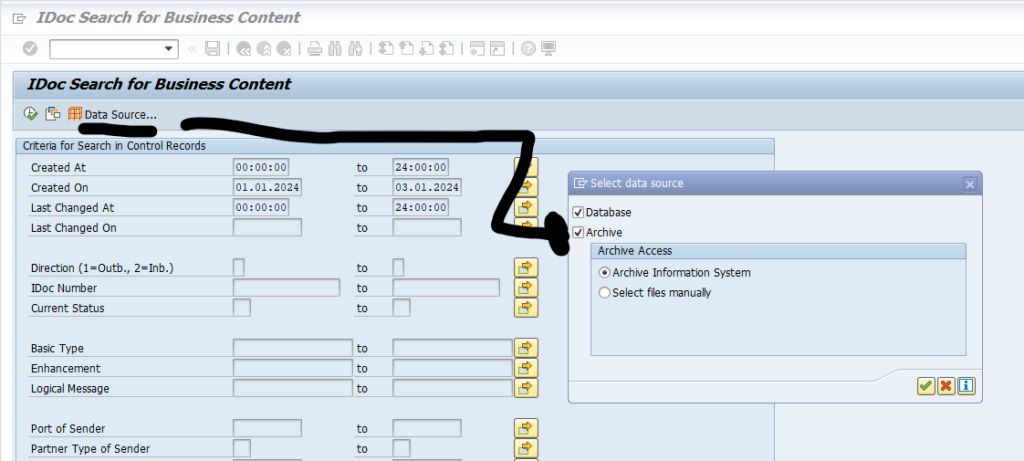

| Idocs | RSETESTD RSRLDREL RSRLDREL2 | Hourly Daily Daily | Idocs |

| Application logs | SBAL_DELETE | Daily | General technical clean up |

| Workflows | RSWWWIDE | Daily | General technical clean up |

| SAP Office | RSBCS_REORG RSBCS_ADRVP RSBCS_SREQ_EXPIRE RSBCS_SREQ_INITIAL_RELEASE RSBCS_DELETE_QUEUE RSSODFRE RSSO_DELETE_PRIVATE RSGOSRE02 | Daily Daily Daily Daily Daily Daily Daily Daily | Deleting SAP office documents |

| Old RFC data | RSARFCER RSTRFCES | Daily Daily | General technical clean up |

| Change documents | RSCDOK99 | Daily | General technical clean up |

| Change pointers | RBDCPCLR2 | Daily | Change pointers |

| ABAP where used | RS_DEL_WBCROSSGT SAPRSEUB | Only once | General technical clean up and ABAP where used index |

| Old batch jobs | RSBTCDEL2 | Daily | General technical clean up |

| Saved lists | RSAQQLRE_MASS | Daily | Table AQLDB clean up |

| BW logging | RSDDSTAT_DATA_DELETE RSBATCH_DEL_MSG_PARM_DTPTEMP RSPM_HOUSEKEEPING RSBKCLEANUPBUFFER ODQ_CLEANUP | Daily Daily Daily Daily | Technical clean up for BI |

*&---------------------------------------------------------------------*

*& Report ZMASSCLEANUP

*&---------------------------------------------------------------------*

*&

*&---------------------------------------------------------------------*

REPORT zmasscleanup.

DATA(zlv_fcode) = 'NADM'. "value checked in field S_ADMI_FCD (basis administration)

DATA: zls_t000 TYPE t000.

DATA:

zlt_params TYPE TABLE OF rsparams,

zls_param TYPE rsparams.

DATA zlv_variant_text TYPE varid.

DATA zls_vari_text TYPE varit.

DATA zlt_vari_text TYPE TABLE OF varit.

PARAMETERS: p_cl TYPE char1 DEFAULT ' ' AS CHECKBOX .

PARAMETERS: p_cld TYPE i DEFAULT '365'.

PARAMETERS: p_id TYPE char1 DEFAULT ' ' AS CHECKBOX .

PARAMETERS: p_idd TYPE i DEFAULT '365'.

PARAMETERS: p_al TYPE char1 DEFAULT ' ' AS CHECKBOX .

PARAMETERS: p_ald TYPE i DEFAULT '365'.

PARAMETERS: p_wi TYPE char1 DEFAULT ' ' AS CHECKBOX .

PARAMETERS: p_wid TYPE i DEFAULT '365'.

PARAMETERS: p_so TYPE char1 DEFAULT ' ' AS CHECKBOX .

PARAMETERS: p_sod TYPE i DEFAULT '365'.

PARAMETERS: p_rf TYPE char1 DEFAULT ' ' AS CHECKBOX .

PARAMETERS: p_rfd TYPE i DEFAULT '7'.

PARAMETERS: p_cd TYPE char1 DEFAULT ' ' AS CHECKBOX .

PARAMETERS: p_cdd TYPE i DEFAULT '365'.

PARAMETERS: p_cp TYPE char1 DEFAULT ' ' AS CHECKBOX .

PARAMETERS: p_cpd TYPE i DEFAULT '365'.

PARAMETERS: p_sl TYPE char1 DEFAULT ' ' AS CHECKBOX .

PARAMETERS: p_sld TYPE i DEFAULT '365'.

PARAMETERS: p_bj TYPE char1 DEFAULT ' ' AS CHECKBOX .

PARAMETERS: p_bjd TYPE i DEFAULT '7'.

PARAMETERS: p_bw TYPE char1 DEFAULT ' ' AS CHECKBOX .

PARAMETERS: p_bwd TYPE i DEFAULT '30'.

PARAMETERS: p_vh TYPE char1 DEFAULT ' ' AS CHECKBOX .

INITIALIZATION.

%_p_cl_%_app_%-text = 'Delete table change logging'.

%_p_cld_%_app_%-text = 'Days to keep table change logging'.

%_p_id_%_app_%-text = 'Delete Idocs'.

%_p_idd_%_app_%-text = 'Days to keep idocs'.

%_p_al_%_app_%-text = 'Delete application logs'.

%_p_ald_%_app_%-text = 'Days to keep application logs'.

%_p_wi_%_app_%-text = 'Delete Workflow items'.

%_p_wid_%_app_%-text = 'Days to keep Workflows'.

%_p_so_%_app_%-text = 'Delete SAP office items'.

%_p_sod_%_app_%-text = 'Days to keep SAP office items'.

%_p_rf_%_app_%-text = 'Delete old RFC data'.

%_p_rfd_%_app_%-text = 'Days to keep old RFC data'.

%_p_cd_%_app_%-text = 'Delete old Change Documents'.

%_p_cdd_%_app_%-text = 'Days to keep old Change Documents'.

%_p_cp_%_app_%-text = 'Delete old Change Pointers'.

%_p_cpd_%_app_%-text = 'Days to keep old Change Pointers'.

%_p_sl_%_app_%-text = 'Delete old Saved lists'.

%_p_sld_%_app_%-text = 'Days to keep old Saved lists'.

%_p_bj_%_app_%-text = 'Delete old batch jobs'.

%_p_bjd_%_app_%-text = 'Days to keep old batch jobs'.

%_p_bw_%_app_%-text = 'Delete old BW jobs'.

%_p_bwd_%_app_%-text = 'Days to keep old BW jobs'.

%_p_vh_%_app_%-text = 'WBCROSSGT table clean up'.

START-OF-SELECTION.

* check for basis administration access

AUTHORITY-CHECK OBJECT 'S_ADMI_FCD'

ID 'S_ADMI_FCD' FIELD zlv_fcode.

IF sy-subrc = 0.

"User has administration authorization for that function

* Fetch the client properties

SELECT SINGLE * FROM t000 INTO zls_t000 WHERE mandt = sy-mandt.

IF zls_t000-cccategory = 'P'.

* Forbid to run on productive client

WRITE: / 'Do not run on a productive client (client role P in SCC4)'.

ELSE.

IF p_cl EQ 'X'.

PERFORM cleanup_table_change_log USING p_cld.

ENDIF.

IF p_id EQ 'X'.

PERFORM cleanup_idocs USING p_idd.

ENDIF.

IF p_al EQ 'X'.

PERFORM cleanup_application_logs USING p_ald.

ENDIF.

IF p_wi EQ 'X'.

PERFORM cleanup_workflows USING p_wid.

ENDIF.

IF p_so EQ 'X'.

PERFORM cleanup_sapoffice USING p_sod.

ENDIF.

IF p_rf EQ 'X'.

PERFORM cleanup_rfcdata USING p_rfd.

ENDIF.

IF p_cd EQ 'X'.

PERFORM cleanup_changedocuments USING p_cdd.

ENDIF.

IF p_cp EQ 'X'.

PERFORM cleanup_changepointers USING p_cpd.

ENDIF.

IF p_bj EQ 'X'.

PERFORM cleanup_batchjobs USING p_bjd.

ENDIF.

IF p_sl EQ 'X'.

PERFORM cleanup_savedlists USING p_sld.

ENDIF.

IF p_bw EQ 'X'.

PERFORM cleanup_bwlogs USING p_bwd.

ENDIF.

IF p_vh EQ 'X'.

PERFORM cleanup_wbcrossgt USING p_cdd.

ENDIF.

ENDIF.

ELSE.

"No authorization

MESSAGE e398(00) WITH |No admin authorization (S_ADMI_FCD={ zlv_fcode })|.

ENDIF.

*&---------------------------------------------------------------------*

*& Form start_job

*&---------------------------------------------------------------------*

*& text

*&---------------------------------------------------------------------*

*& --> p1 text

*& <-- p2 text

*&---------------------------------------------------------------------*

FORM start_job

USING

ziv_progname TYPE progname

ziv_variant TYPE raldb_vari

ziv_timing TYPE char1.

DATA: ziv_jobname TYPE tbtcjob-jobname.

DATA: zcv_jobcount TYPE tbtcjob-jobcount.

DATA: zlv_prddays TYPE tbtcjob-prddays.

DATA: zlv_prdhours TYPE tbtcjob-prdhours.

ziv_jobname = ziv_progname+0(32).

"-------------------------------

" 1) Open job

"-------------------------------

CALL FUNCTION 'JOB_OPEN'

EXPORTING

jobname = ziv_jobname

IMPORTING

jobcount = zcv_jobcount

EXCEPTIONS

cant_create_job = 1

invalid_job_data = 2

jobname_missing = 3

OTHERS = 4.

IF sy-subrc <> 0.

MESSAGE ID sy-msgid TYPE 'E' NUMBER sy-msgno

WITH sy-msgv1 sy-msgv2 sy-msgv3 sy-msgv4.

EXIT.

ENDIF.

"-------------------------------

" 2) Add step (program + variant)

"-------------------------------

CALL FUNCTION 'JOB_SUBMIT'

EXPORTING

jobname = ziv_jobname

jobcount = zcv_jobcount

authcknam = sy-uname

report = ziv_progname

variant = ziv_variant

language = sy-langu

EXCEPTIONS

bad_priparams = 1

bad_xpgflags = 2

invalid_jobdata = 3

jobname_missing = 4

job_notex = 5

job_submit_failed = 6

lock_failed = 7

program_missing = 8

prog_abap_and_extpg = 9

OTHERS = 10.

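IF sy-subrc <> 0.

"Keep the JOB_SUBMIT message: the JOB_CLOSE call below may overwrite sy-msg*

DATA(zls_msg) = VALUE symsg( msgid = sy-msgid msgno = sy-msgno

msgv1 = sy-msgv1 msgv2 = sy-msgv2

msgv3 = sy-msgv3 msgv4 = sy-msgv4 ).

"Best-effort cleanup: close job without releasing it

CALL FUNCTION 'JOB_CLOSE'

EXPORTING

jobname = ziv_jobname

jobcount = zcv_jobcount

strtimmed = space

EXCEPTIONS

OTHERS = 1.

MESSAGE ID zls_msg-msgid TYPE 'E' NUMBER zls_msg-msgno

WITH zls_msg-msgv1 zls_msg-msgv2 zls_msg-msgv3 zls_msg-msgv4.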

EXIT.

ENDIF.

"-------------------------------

" 3) Close job and start immediately

"-------------------------------

IF ziv_timing EQ 'D'.

zlv_prddays = 1.

zlv_prdhours = 0.

ELSEIF ziv_timing EQ 'H'.

zlv_prddays = 0.

zlv_prdhours = 1.

ELSEIF ziv_timing EQ 'X'. "run only once

zlv_prddays = 0.

zlv_prdhours = 0.

ENDIF.

CALL FUNCTION 'JOB_CLOSE'

EXPORTING

jobname = ziv_jobname

jobcount = zcv_jobcount

prddays = zlv_prddays

prdhours = zlv_prdhours

strtimmed = 'X'

EXCEPTIONS

cant_start_immediate = 1

invalid_startdate = 2

jobname_missing = 3

job_close_failed = 4

job_nosteps = 5

job_notex = 6

lock_failed = 7

OTHERS = 8.

IF sy-subrc <> 0.

MESSAGE ID sy-msgid TYPE 'E' NUMBER sy-msgno

WITH sy-msgv1 sy-msgv2 sy-msgv3 sy-msgv4.

EXIT.

ENDIF.

WRITE: / 'Job',ziv_jobname, zcv_jobcount,' scheduled:', ziv_progname, ziv_variant, 'immediate'.

ENDFORM.

*&---------------------------------------------------------------------*

*& Form create_variant

*&---------------------------------------------------------------------*

*& text

*&---------------------------------------------------------------------*

*& --> p1 text

*& <-- p2 text

*&---------------------------------------------------------------------*

FORM create_variant

TABLES zit_params

USING

ziv_report TYPE progname

ziv_variant TYPE raldb_vari.

REFRESH zlt_vari_text.

zlv_variant_text-report = ziv_report.

zlv_variant_text-variant = ziv_variant.

zlv_variant_text-environmnt = 'A'.

zls_vari_text-langu = sy-langu.

zls_vari_text-vtext = |Mass clean up { ziv_variant }|. "free description text, adjust as needed

zls_vari_text-report = ziv_report.

zls_vari_text-variant = ziv_variant.

APPEND zls_vari_text TO zlt_vari_text.

CALL FUNCTION 'RS_CREATE_VARIANT'

EXPORTING

curr_report = ziv_report

curr_variant = ziv_variant

vari_desc = zlv_variant_text

TABLES

vari_contents = zit_params

vari_text = zlt_vari_text

EXCEPTIONS

illegal_report_or_variant = 1

illegal_variantname = 2

not_authorized = 3

not_executed = 4

report_not_existent = 5

report_not_supplied = 6

variant_exists = 7

variant_locked = 8

OTHERS = 9.

IF sy-subrc = 0.

WRITE: / 'Variant created:', ziv_report, ziv_variant.

ELSE.

WRITE: / 'Variant creation failed. SY-SUBRC=', sy-subrc.

ENDIF.

ENDFORM.
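
*&---------------------------------------------------------------------*
*& Form add_param
*&---------------------------------------------------------------------*
*& Optional sketch, not part of the original program: the clean up
*& forms below repeat the same block (selname, kind, sign, option, low,
*& APPEND) for every selection value. A helper along these lines could
*& shorten them. The form name and parameter names are hypothetical;
*& it fills the global zls_param and zlt_params declared at the top.
*&---------------------------------------------------------------------*
FORM add_param
  USING
    ziv_selname TYPE clike
    ziv_kind    TYPE clike   " 'P' = parameter, 'S' = select-option
    ziv_option  TYPE clike   " e.g. 'EQ', 'BT', 'CP'
    ziv_low     TYPE clike
    ziv_high    TYPE clike.

  CLEAR zls_param.
  zls_param-selname = ziv_selname.
  zls_param-kind    = ziv_kind.
  zls_param-sign    = 'I'.   " always 'I' (include), as in the forms below
  zls_param-option  = ziv_option.
  zls_param-low     = ziv_low.
  zls_param-high    = ziv_high.
  APPEND zls_param TO zlt_params.

ENDFORM.

* Possible usage inside a clean up form, after REFRESH zlt_params:
*   PERFORM add_param USING 'GP_TEST' 'P' 'EQ' space space.
*   PERFORM add_param USING 'P_COM'   'P' 'EQ' '100' space.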

*&---------------------------------------------------------------------*

*& Form cleanup_table_change_log

*&---------------------------------------------------------------------*

*& text

*&---------------------------------------------------------------------*

*& --> p1 text

*& <-- p2 text

*&---------------------------------------------------------------------*

FORM cleanup_table_change_log USING ziv_days.

DATA: zlv_variant TYPE raldb_vari.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'P_DAYS'. " amount of days to keep

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RSTBPDEL' zlv_variant.

PERFORM start_job USING 'RSTBPDEL' zlv_variant 'D'.

ENDFORM.

*&---------------------------------------------------------------------*

*& Form cleanup_idocs

*&---------------------------------------------------------------------*

*& text

*&---------------------------------------------------------------------*

*& --> P_IDD

*&---------------------------------------------------------------------*

FORM cleanup_idocs USING ziv_days.

DATA: zlv_variant TYPE raldb_vari.

DATA: zlv_date TYPE sy-datum.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'GP_TEST'. " test flag to be removed

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_COM'. " commit counter

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = '100'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'GP_MAXCT'. " max amount of idocs

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = '1000000'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'GS_CREDA'. " creation date

zls_param-kind = 'S'.

zls_param-sign = 'I'.

zls_param-option = 'BT'.

zlv_date = sy-datum - 9999.

zls_param-low = zlv_date.

zlv_date = sy-datum - ziv_days.

zls_param-high = zlv_date.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RSETESTD' zlv_variant.

PERFORM start_job USING 'RSETESTD' zlv_variant 'H'.

CLEAR zls_param.

REFRESH zlt_params.

zlv_date = sy-datum - 9999.

zls_param-selname = 'G_DSINCE'. " start date

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = zlv_date.

APPEND zls_param TO zlt_params.

zlv_date = sy-datum - ziv_days.

zls_param-selname = 'G_DUNTIL'. " to date

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = zlv_date.

APPEND zls_param TO zlt_params.

zls_param-selname = 'G_TABLE'. " table

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'IDOCREL'.

APPEND zls_param TO zlt_params.

PERFORM create_variant TABLES zlt_params USING 'RSRLDREL2' zlv_variant.

PERFORM start_job USING 'RSRLDREL2' zlv_variant 'D'.

CLEAR zls_param.

REFRESH zlt_params.

zlv_date = sy-datum - 9999.

zls_param-selname = 'G_DSINCE'. " start date

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = zlv_date.

APPEND zls_param TO zlt_params.

zlv_date = sy-datum - ziv_days.

zls_param-selname = 'G_DUNTIL'. " to date

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = zlv_date.

APPEND zls_param TO zlt_params.

zls_param-selname = 'G_OBJTYP'. " object type

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'IDOC'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'G_NONE'. " no existence check

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'G_PKSZ'. " package size

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 250.

APPEND zls_param TO zlt_params.

zls_param-selname = 'G_CTSZ'. " package size

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 250.

APPEND zls_param TO zlt_params.

PERFORM create_variant TABLES zlt_params USING 'RSRLDREL' zlv_variant.

PERFORM start_job USING 'RSRLDREL' zlv_variant 'D'.

ENDFORM.

*&---------------------------------------------------------------------*

*& Form cleanup_application_logs

*&---------------------------------------------------------------------*

*& text

*&---------------------------------------------------------------------*

*& --> P_ALD

*&---------------------------------------------------------------------*

FORM cleanup_application_logs USING ziv_days.

DATA: zlv_variant TYPE raldb_vari.

DATA: zlv_date TYPE sy-datum.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'P_BEF_OK'. " delete all logs

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_BCK'. " schedule background jobs

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zlv_date = sy-datum - 9999.

zls_param-selname = 'P_BEGDAT'. " start date

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = zlv_date.

APPEND zls_param TO zlt_params.

zlv_date = sy-datum - ziv_days.

zls_param-selname = 'P_ENDDAT'. " end date

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = zlv_date.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'SBAL_DELETE' zlv_variant.

PERFORM start_job USING 'SBAL_DELETE' zlv_variant 'D'.

ENDFORM.

*&---------------------------------------------------------------------*

*& Form cleanup_workflows

*&---------------------------------------------------------------------*

*& text

*&---------------------------------------------------------------------*

*& --> P_WID

*&---------------------------------------------------------------------*

FORM cleanup_workflows USING ziv_days.

DATA: zlv_variant TYPE raldb_vari.

DATA: zlv_date TYPE sy-datum.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'I_DISPLY'. " delete immediately

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'I_DELERR'. " delete errors as well

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'I_DELLOG'. " delete log as well

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'I_TOPLVL'. " top level only

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'IT_CD'. " creation date

zls_param-kind = 'S'.

zls_param-sign = 'I'.

zls_param-option = 'BT'.

zlv_date = sy-datum - 9999.

zls_param-low = zlv_date.

zlv_date = sy-datum - ziv_days.

zls_param-high = zlv_date.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RSWWWIDE' zlv_variant.

PERFORM start_job USING 'RSWWWIDE' zlv_variant 'D'.

ENDFORM.

*&---------------------------------------------------------------------*

*& Form cleanup_sapoffice

*&---------------------------------------------------------------------*

*& text

*&---------------------------------------------------------------------*

*& --> P_SOD

*&---------------------------------------------------------------------*

FORM cleanup_sapoffice USING ziv_days.

DATA: zlv_variant TYPE raldb_vari.

DATA: zlv_date TYPE sy-datum.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'TESTMODE'. " remove test mode

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'PACKSIZE'. " package size

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 500.

APPEND zls_param TO zlt_params.

zls_param-selname = 'SRDEL'. " send docs

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'DOCDEL'. " not send docs

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'CREATED'. " creation date

zls_param-kind = 'S'.

zls_param-sign = 'I'.

zls_param-option = 'BT'.

zlv_date = sy-datum - 9999.

zls_param-low = zlv_date.

zlv_date = sy-datum - ziv_days.

zls_param-high = zlv_date.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RSBCS_REORG' zlv_variant.

PERFORM start_job USING 'RSBCS_REORG' zlv_variant 'D'.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'TESTMODE'. " remove test mode

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'ADRVP'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'ADRV'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'PACKSIZE'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 5000.

APPEND zls_param TO zlt_params.

PERFORM create_variant TABLES zlt_params USING 'RSBCS_ADRVP' zlv_variant.

PERFORM start_job USING 'RSBCS_ADRVP' zlv_variant 'D'.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'TESTMODE'. " remove test mode

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'FULL_LOG'. " detailed log

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'CR_DAT'. " creation date

zls_param-kind = 'S'.

zls_param-sign = 'I'.

zls_param-option = 'BT'.

zlv_date = sy-datum - 9999.

zls_param-low = zlv_date.

zlv_date = sy-datum - ziv_days.

zls_param-high = zlv_date.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RSBCS_SREQ_EXPIRE' zlv_variant.

PERFORM start_job USING 'RSBCS_SREQ_EXPIRE' zlv_variant 'D'.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'TESTMODE'. " remove test mode

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'CRDAT'. " creation date

zls_param-kind = 'S'.

zls_param-sign = 'I'.

zls_param-option = 'BT'.

zlv_date = sy-datum - 9999.

zls_param-low = zlv_date.

zlv_date = sy-datum - ziv_days.

zls_param-high = zlv_date.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RSBCS_SREQ_INITIAL_RELEASE' zlv_variant.

PERFORM start_job USING 'RSBCS_SREQ_INITIAL_RELEASE' zlv_variant 'D'.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'TESTMODE'. " remove test mode

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'SNDDAT'. " send date

zls_param-kind = 'S'.

zls_param-sign = 'I'.

zls_param-option = 'BT'.

zlv_date = sy-datum - 9999.

zls_param-low = zlv_date.

zlv_date = sy-datum - ziv_days.

zls_param-high = zlv_date.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RSBCS_DELETE_QUEUE' zlv_variant.

PERFORM start_job USING 'RSBCS_DELETE_QUEUE' zlv_variant 'D'.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'P_AGE'. " amount of days to keep

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'TESTMODE'. " remove test mode

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'DELETE'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'NOT_SENT'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'SENDER'.

zls_param-kind = 'S'.

zls_param-sign = 'I'.

zls_param-option = 'CP'.

zls_param-low = '*'.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RSSODFRE' zlv_variant.

PERFORM start_job USING 'RSSODFRE' zlv_variant 'D'.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'USER'.

zls_param-kind = 'S'.

zls_param-sign = 'I'.

zls_param-option = 'CP'.

zls_param-low = '*'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'INBOX'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'ALL'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'READONLY'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'RESUB'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'PRIVDOC'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'TRASH'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'REPLY'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_AGE'. " amount of days to keep

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'TEST'. " remove test mode

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'OUTDOCS'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'PACKSIZE'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 100.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RSSO_DELETE_PRIVATE' zlv_variant.

PERFORM start_job USING 'RSSO_DELETE_PRIVATE' zlv_variant 'D'.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'PPSIZE'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 500.

APPEND zls_param TO zlt_params.

zls_param-selname = 'PTEST'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'. " assumption: PTEST is the test flag, so 'X' keeps test mode on, unlike the other steps

APPEND zls_param TO zlt_params.

zlv_date = sy-datum - ziv_days.

zls_param-selname = 'P_UNTIL'. " end date

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = zlv_date.

APPEND zls_param TO zlt_params.

zlv_date = sy-datum - 9999.

zls_param-selname = 'PDDSINCE'. " start date

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = zlv_date.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RSGOSRE02' zlv_variant.

PERFORM start_job USING 'RSGOSRE02' zlv_variant 'D'.

ENDFORM.

*&---------------------------------------------------------------------*

*& Form cleanup_rfcdata

*&---------------------------------------------------------------------*

*& text

*&---------------------------------------------------------------------*

*& --> P_RFD

*&---------------------------------------------------------------------*

FORM cleanup_rfcdata USING ziv_days.

DATA: zlv_variant TYPE raldb_vari.

DATA: zlv_date TYPE sy-datum.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'PAKET'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = '10000'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'CPIC'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'REC'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'FAIL'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'SENT'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'EXEC'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'LOAD'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'RETRY'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'NORETRY'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'PCLNT'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = sy-mandt.

APPEND zls_param TO zlt_params.

zls_param-selname = 'DAY'. " creation date

zls_param-kind = 'S'.

zls_param-sign = 'I'.

zls_param-option = 'BT'.

zlv_date = sy-datum - 9999.

zls_param-low = zlv_date.

zlv_date = sy-datum - ziv_days.

zls_param-high = zlv_date.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RSARFCER' zlv_variant.

PERFORM start_job USING 'RSARFCER' zlv_variant 'D'.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'NRDAYS'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'BLK_SIZE'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 5000.

APPEND zls_param TO zlt_params.

zls_param-selname = 'DELSTATE'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'SYSFAIL'.

APPEND zls_param TO zlt_params.

PERFORM create_variant TABLES zlt_params USING 'RSTRFCES' zlv_variant.

PERFORM start_job USING 'RSTRFCES' zlv_variant 'D'.

ENDFORM.

*&---------------------------------------------------------------------*

*& Form cleanup_changedocuments

*&---------------------------------------------------------------------*

*& text

*&---------------------------------------------------------------------*

*& --> P_CDD

*&---------------------------------------------------------------------*

FORM cleanup_changedocuments USING ziv_days.

DATA: zlv_variant TYPE raldb_vari.

DATA: zlv_date TYPE sy-datum.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'OBJECT'.

zls_param-kind = 'S'.

zls_param-sign = 'I'.

zls_param-option = 'CP'.

zls_param-low = '*'.

APPEND zls_param TO zlt_params.

zlv_date = sy-datum - ziv_days.

zls_param-selname = 'TO_DATE'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = zlv_date.

APPEND zls_param TO zlt_params.

zls_param-selname = 'TESTRUN'. "testrun

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_ALV'. "display

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_STAT'. "counting

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_SIZE'. "package size

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 1500.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_LINSHW'. "package size

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 1500.

APPEND zls_param TO zlt_params.

zls_param-selname = 'AUD_DATE'. " creation date

zls_param-kind = 'S'.

zls_param-sign = 'I'.

zls_param-option = 'BT'.

zlv_date = sy-datum - 9999.

zls_param-low = zlv_date.

zlv_date = sy-datum - ziv_days.

zls_param-high = zlv_date.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RSCDOK99' zlv_variant.

PERFORM start_job USING 'RSCDOK99' zlv_variant 'D'.

ENDFORM.

*&---------------------------------------------------------------------*

*& Form cleanup_WBCROSSGT

*&---------------------------------------------------------------------*

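*& Rebuilds the ABAP where-used index: starts RS_DEL_WBCROSSGT and

*& SAPRSEUB as one-off jobs, without a variant

*&---------------------------------------------------------------------*

*& --> P_P_CDD  not used in this form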

*&---------------------------------------------------------------------*

FORM cleanup_wbcrossgt USING p_p_cdd.

DATA: zlv_variant TYPE raldb_vari.

CLEAR zlv_variant.

PERFORM start_job USING 'RS_DEL_WBCROSSGT' zlv_variant 'X'.

PERFORM start_job USING 'SAPRSEUB' zlv_variant 'X'.

ENDFORM.

*&---------------------------------------------------------------------*

*& Form cleanup_batchjobs

*&---------------------------------------------------------------------*

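*& Deletes old batch jobs: creates a variant for RSBTCDEL2 and

*& schedules it as a daily job

*&---------------------------------------------------------------------*

*& --> ZIV_DAYS  minimum job age in days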

*&---------------------------------------------------------------------*

FORM cleanup_batchjobs USING ziv_days.

DATA: zlv_variant TYPE raldb_vari.

DATA: zlv_date TYPE sy-datum.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'PRELIM'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'SCHED'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'FIN'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'ABORT'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'PORTION'. "commit counter

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 1000.

APPEND zls_param TO zlt_params.

zls_param-selname = 'TESTRUN'. "testrun

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'APREDAYS'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'BPREDAYS'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'CPREDAYS'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'ASCHDAYS'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'BSCHDAYS'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'CSCHDAYS'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'AFINDAYS'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'BFINDAYS'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'CFINDAYS'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'AABODAYS'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'BABODAYS'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'CABODAYS'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RSBTCDEL2' zlv_variant.

PERFORM start_job USING 'RSBTCDEL2' zlv_variant 'D'.

ENDFORM.

*&---------------------------------------------------------------------*

*& Form cleanup_savedlists

*&---------------------------------------------------------------------*

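*& Deletes saved lists: schedules RSAQQLRE_MASS daily with two

*& variants, one with P_WSID = ' ' and one with P_WSID = 'X'

*&---------------------------------------------------------------------*

*& --> ZIV_DAYS  lists up to (today - ZIV_DAYS) are deleted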

*&---------------------------------------------------------------------*

FORM cleanup_savedlists USING ziv_days.

DATA: zlv_variant TYPE raldb_vari.

DATA: zlv_date TYPE sy-datum.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'P_DATE'. " up to date

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zlv_date = sy-datum - ziv_days.

zls_param-low = zlv_date.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_TEST'. "testrun

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_WSID'. "local

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RSAQQLRE_MASS' zlv_variant.

PERFORM start_job USING 'RSAQQLRE_MASS' zlv_variant 'D'.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'P_DATE'. " up to date

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zlv_date = sy-datum - ziv_days.

zls_param-low = zlv_date.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_TEST'. "testrun

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_WSID'. "global

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }2|.

PERFORM create_variant TABLES zlt_params USING 'RSAQQLRE_MASS' zlv_variant.

PERFORM start_job USING 'RSAQQLRE_MASS' zlv_variant 'D'.

ENDFORM.

*&---------------------------------------------------------------------*

*& Form cleanup_bwlogs

*&---------------------------------------------------------------------*

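*& Cleans up BW logging: schedules RSDDSTAT_DATA_DELETE,

*& RSBATCH_DEL_MSG_PARM_DTPTEMP, RSPM_HOUSEKEEPING,

*& RSBKCLEANUPBUFFER and ODQ_CLEANUP as daily jobs

*&---------------------------------------------------------------------*

*& --> ZIV_DAYS  retention period in days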

*&---------------------------------------------------------------------*

FORM cleanup_bwlogs USING ziv_days.

DATA: zlv_variant TYPE raldb_vari.

DATA: zlv_date TYPE sy-datum.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'P_DATE'. " up to date

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zlv_date = sy-datum - ziv_days.

zls_param-low = zlv_date.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_OWN'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_QUERY'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_LOGG'. "kind, sign, option and low = 'X' carry over from P_QUERY for all flags below

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_AGHPA'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_DELE'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_BIA'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_DTP'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_WHM'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_WSP'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_AP'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_SL'.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RSDDSTAT_DATA_DELETE' zlv_variant.

PERFORM start_job USING 'RSDDSTAT_DATA_DELETE' zlv_variant 'D'.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'DEL_MSG'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'DEL_PAR'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'DEL_DTP'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RSBATCH_DEL_MSG_PARM_DTPTEMP' zlv_variant.

PERFORM start_job USING 'RSBATCH_DEL_MSG_PARM_DTPTEMP' zlv_variant 'D'.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'P_DAYS'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_SIMU3'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_REORG'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_PKG'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 10000.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RSPM_HOUSEKEEPING' zlv_variant.

PERFORM start_job USING 'RSPM_HOUSEKEEPING' zlv_variant 'D'.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'DATE_S'. " from date

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zlv_date = sy-datum - 9999.

zls_param-low = zlv_date.

APPEND zls_param TO zlt_params.

zls_param-selname = 'DATE_E'. " to date

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zlv_date = sy-datum - ziv_days.

zls_param-low = zlv_date.

APPEND zls_param TO zlt_params.

zls_param-selname = 'CROSS'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RSBKCLEANUPBUFFER' zlv_variant.

PERFORM start_job USING 'RSBKCLEANUPBUFFER' zlv_variant 'D'.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'P_CL0RT'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_CL1RT'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ziv_days.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_SIMU'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '. "simulation off, like the test-run flags of the other programs

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_RECRT'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 24.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'ODQ_CLEANUP' zlv_variant.

PERFORM start_job USING 'ODQ_CLEANUP' zlv_variant 'D'.

ENDFORM.

*&---------------------------------------------------------------------*

*& Form cleanup_changepointers

*&---------------------------------------------------------------------*

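*& Deletes change pointers: creates a variant for RBDCPCLR2 and

*& schedules it as a daily job

*&---------------------------------------------------------------------*

*& --> ZIV_DAYS  change pointers up to (today - ZIV_DAYS) are selected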

*&---------------------------------------------------------------------*

FORM cleanup_changepointers USING ziv_days.

DATA: zlv_variant TYPE raldb_vari.

DATA: zlv_date TYPE sy-datum.

CLEAR zls_param.

REFRESH zlt_params.

zls_param-selname = 'P2_DATE'. " creation date

zls_param-kind = 'S'.

zls_param-sign = 'I'.

zls_param-option = 'BT'.

zlv_date = sy-datum - 9999.

zls_param-low = zlv_date.

zlv_date = sy-datum - ziv_days.

zls_param-high = zlv_date.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_TEST'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = ' '.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P1_OLD'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zls_param-selname = 'P_PACK'.

zls_param-kind = 'P'.

zls_param-sign = 'I'.

zls_param-option = 'EQ'.

zls_param-low = 'X'.

APPEND zls_param TO zlt_params.

zlv_variant = |ZCL{ ziv_days }|.

PERFORM create_variant TABLES zlt_params USING 'RBDCPCLR2' zlv_variant.

PERFORM start_job USING 'RBDCPCLR2' zlv_variant 'D'.

ENDFORM.