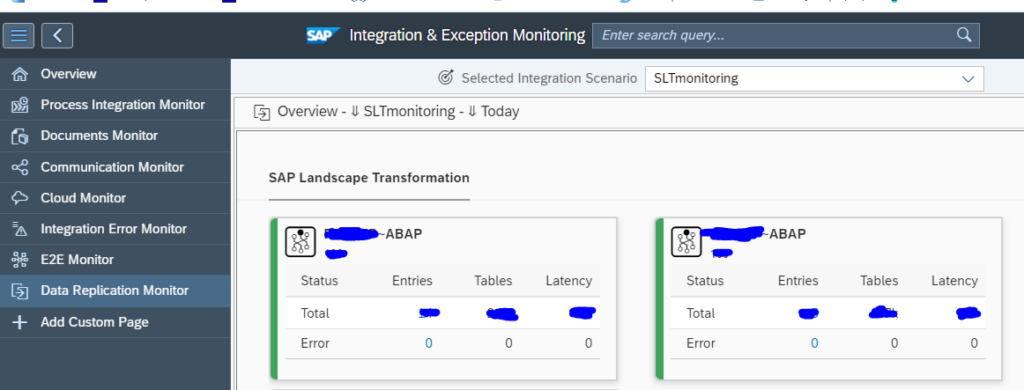

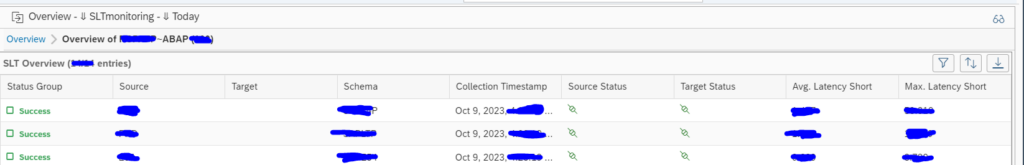

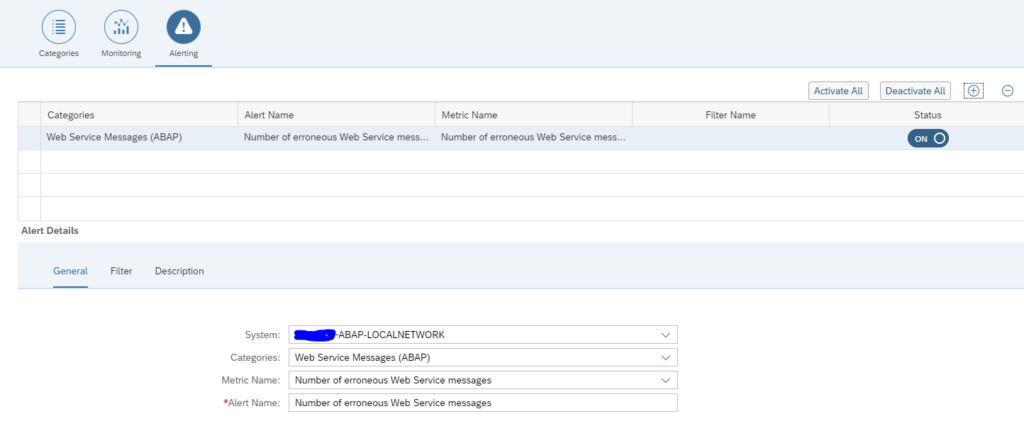

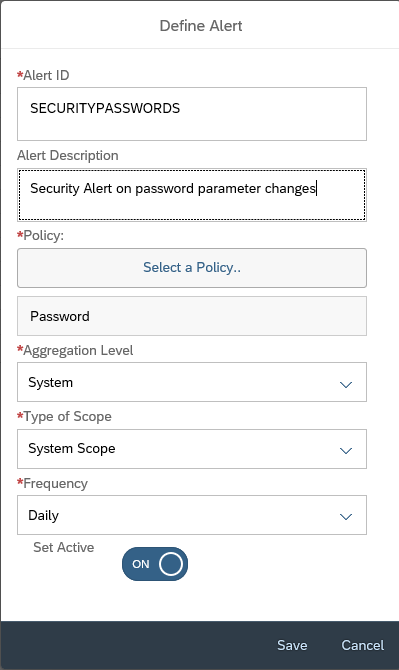

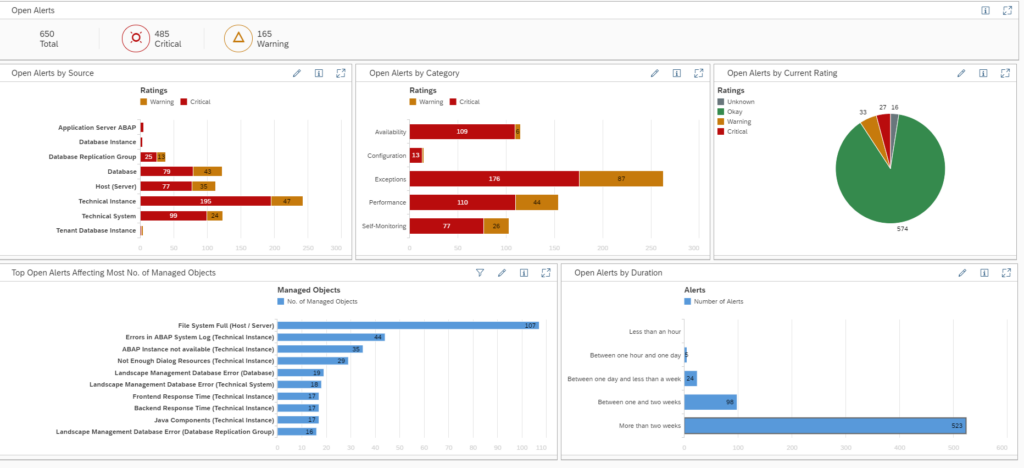

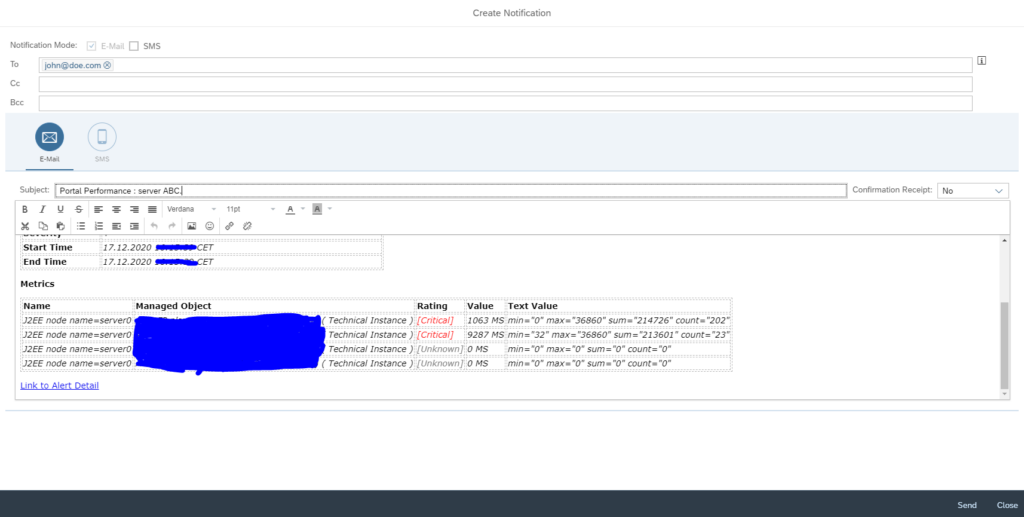

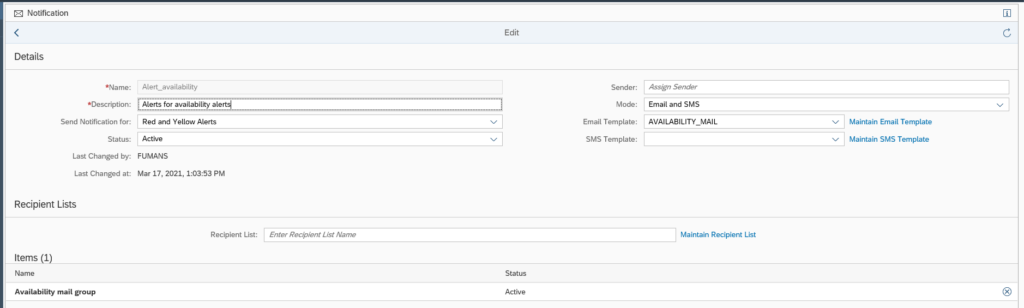

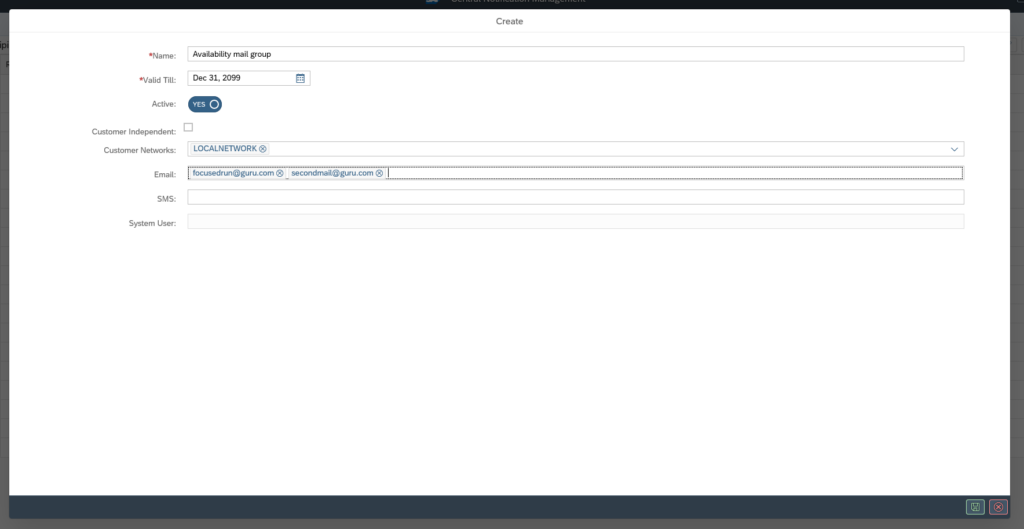

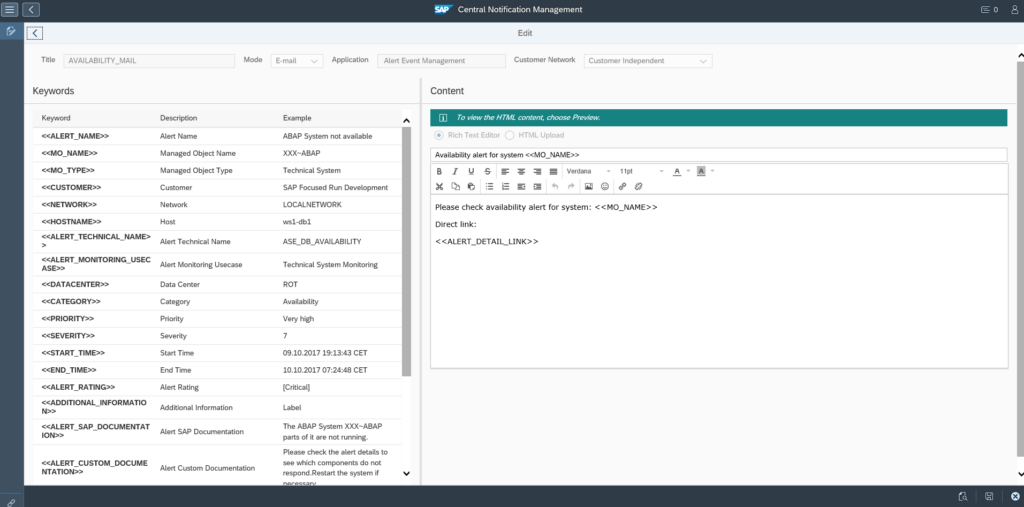

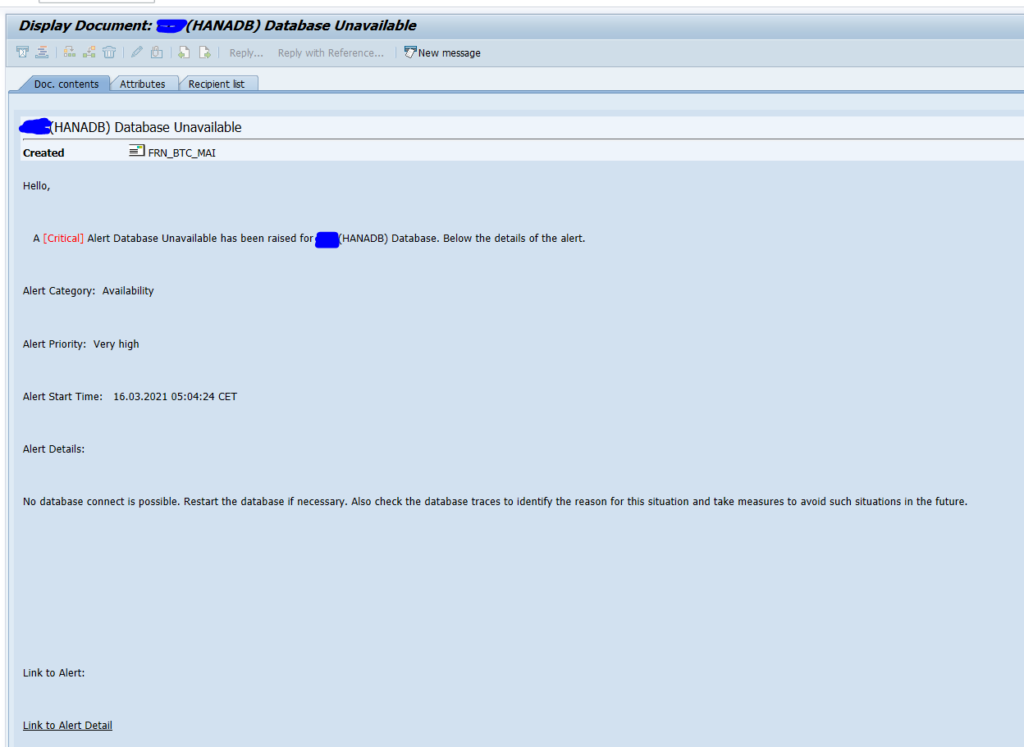

The SAP Focused Run alert management function can send alert notifications by e-mail to configured mail addresses (see this blog).

SAP Focused Run can also call an outbound integration to an ITIL tool such as ServiceNow, which can speed up incident creation.

This requires an implementation at ABAP level. The code is given at the end of the blog.

This blog answers the following questions:

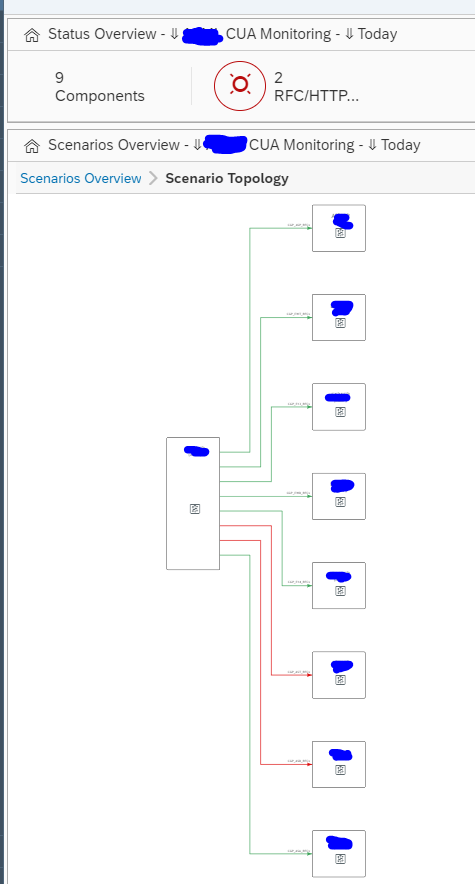

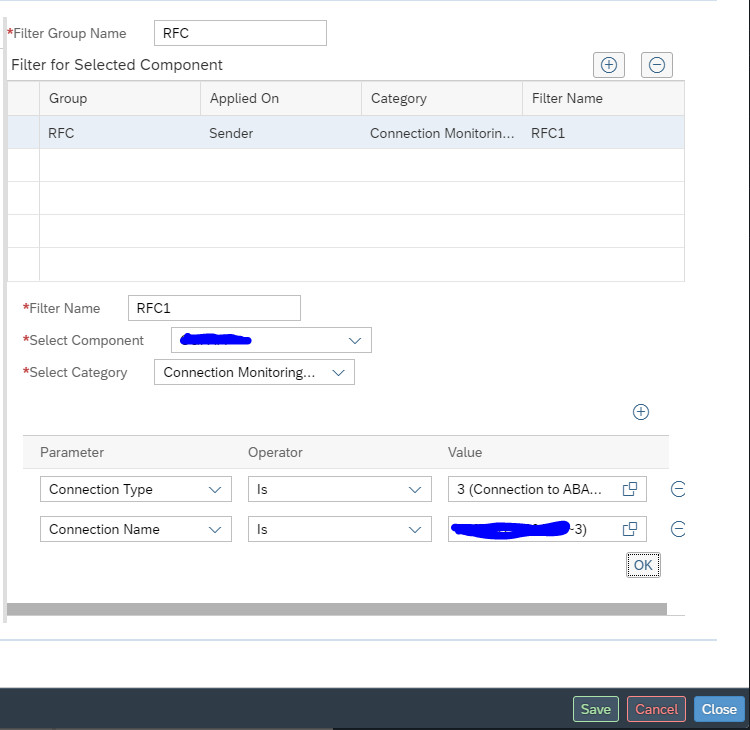

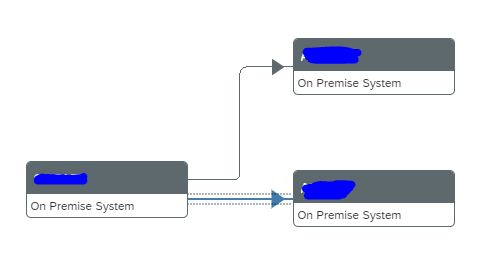

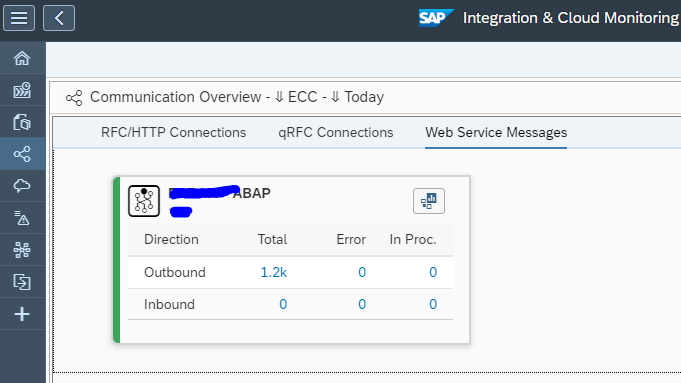

- What does the high-level integration between SAP Focused Run and ServiceNow look like?

- Where can I find information on the ABAP BADI that needs to be implemented?

- How can I send an alert directly to ServiceNow from the alert details page in Alert Management?

- How can I use the template settings to automatically send an alert to ServiceNow via an outbound integration?

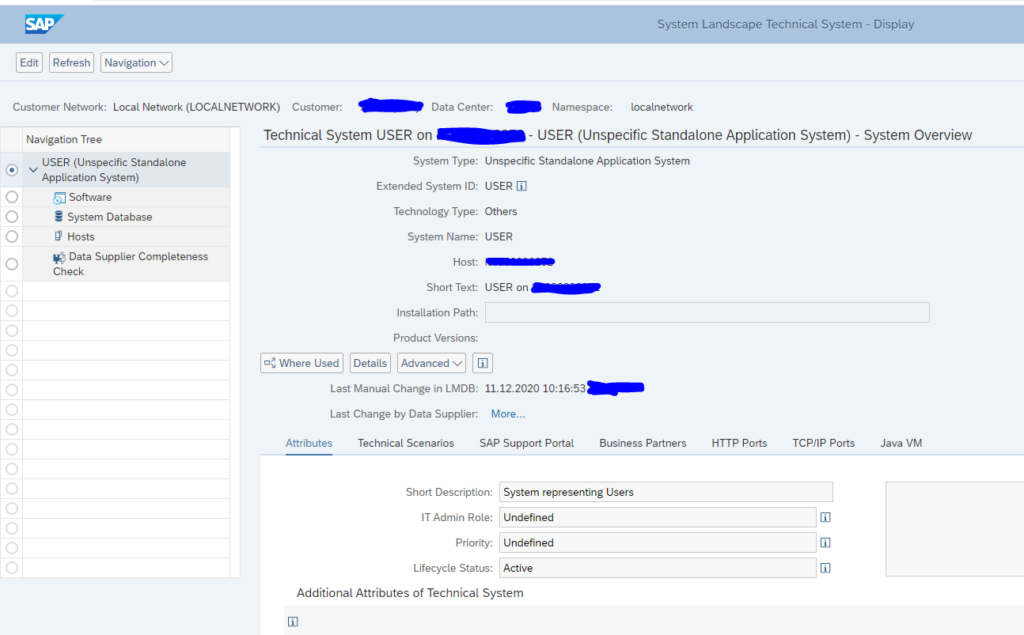

- How do I connect from the ABAP stack to the MID server?

- Which BADI do I need to activate for the outbound integration?

- How do I call the MID server connection from the BADI?

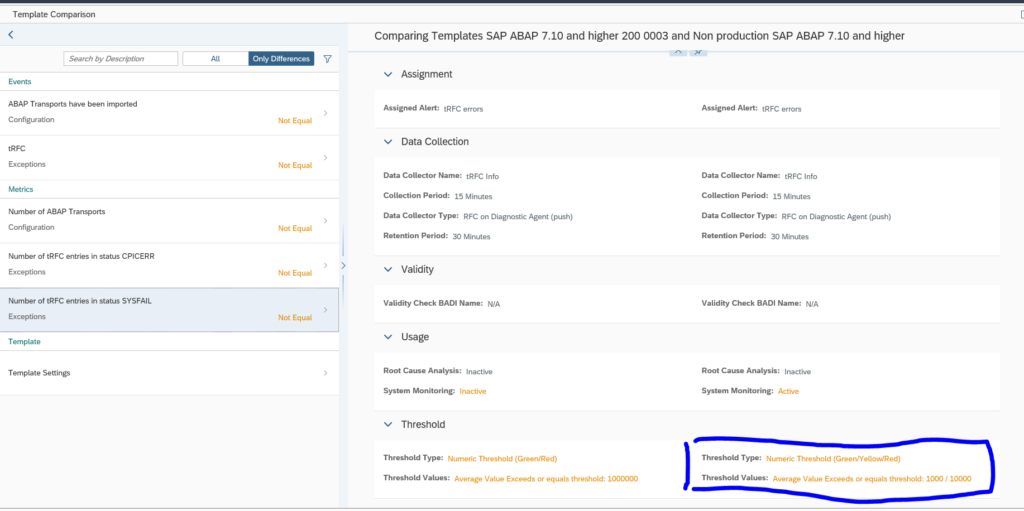

- How do I deal with the differences in severity definition between ServiceNow and SAP Focused Run?

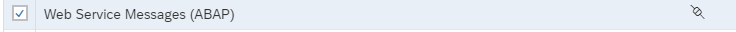

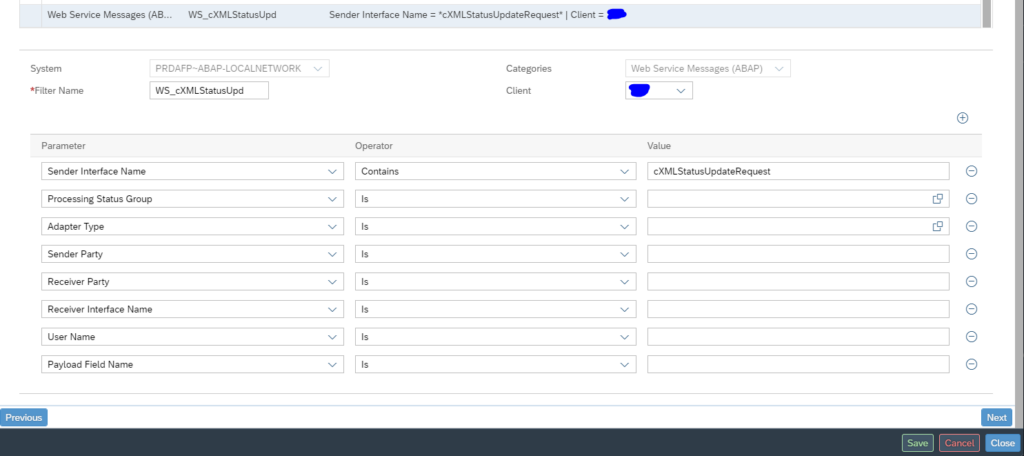

- If I want to set up the connection via web services, what do I need to do?

- How can I add application logging so that I can monitor the calls and any issues in SLG1?

- Where can I find the ABAP code needed?

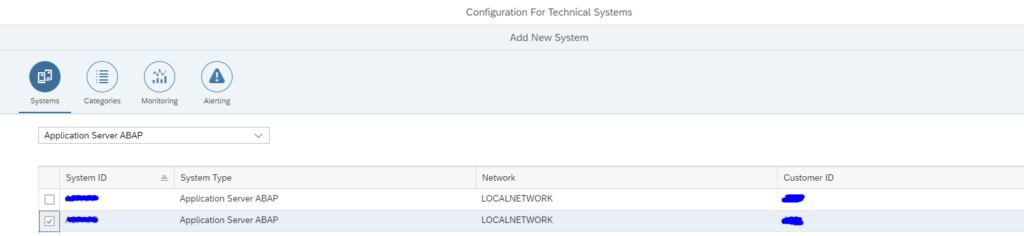

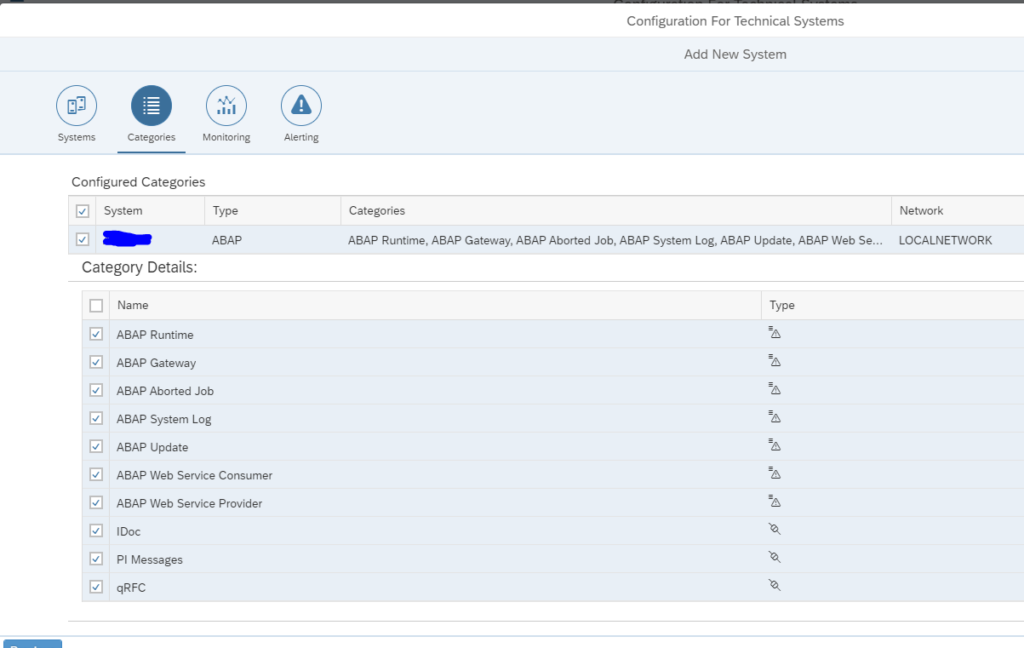

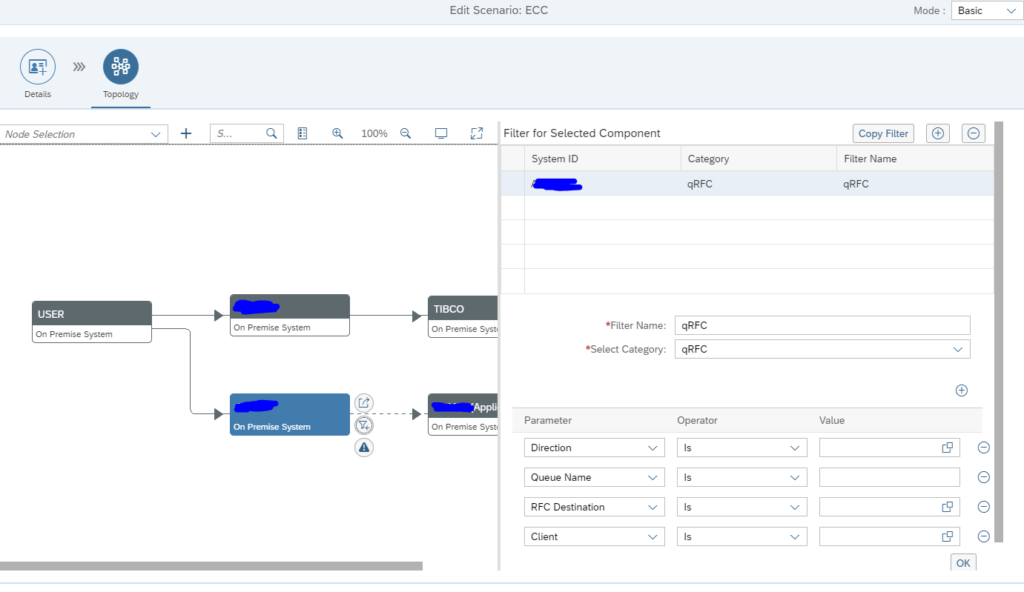

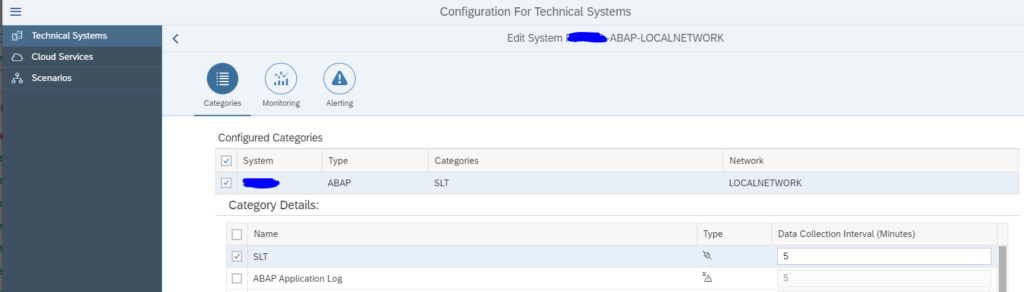

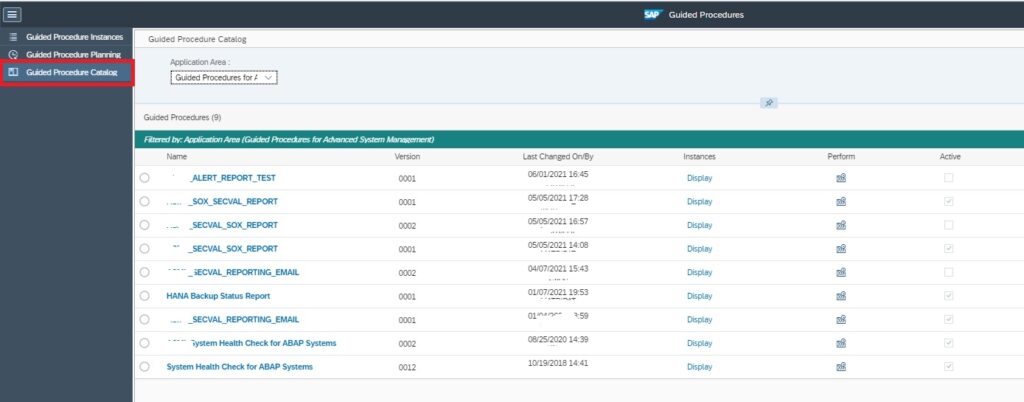

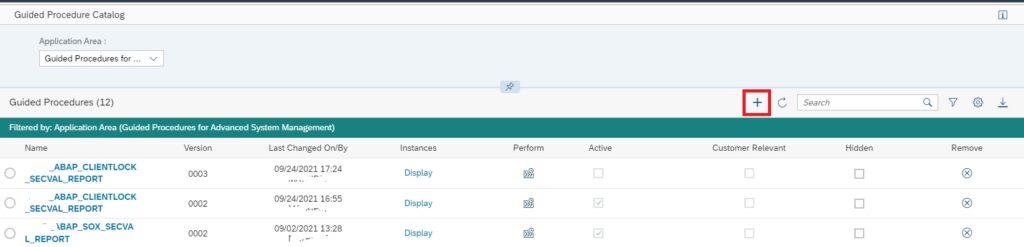

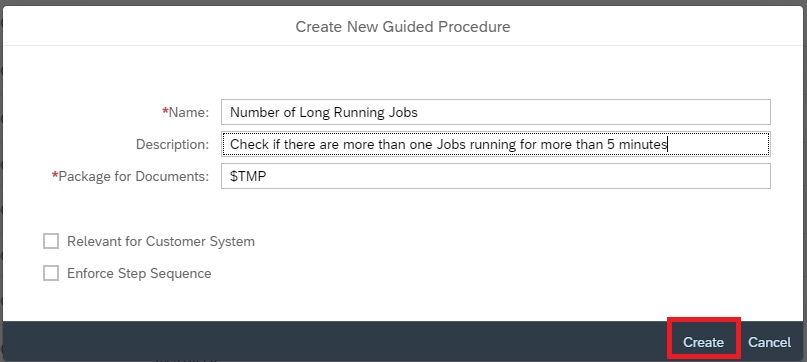

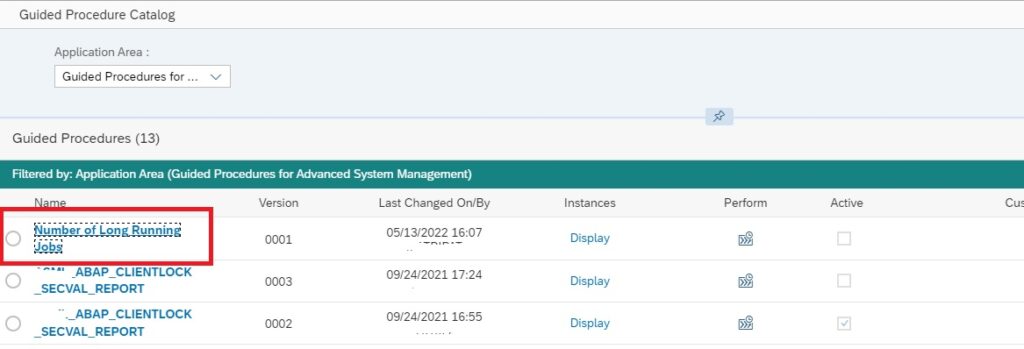

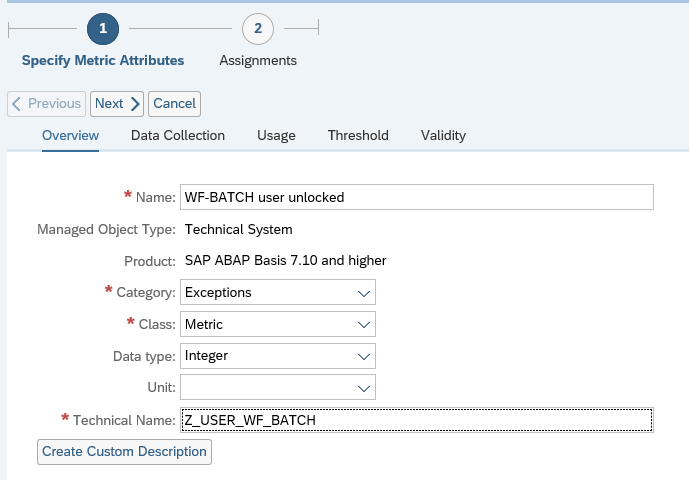

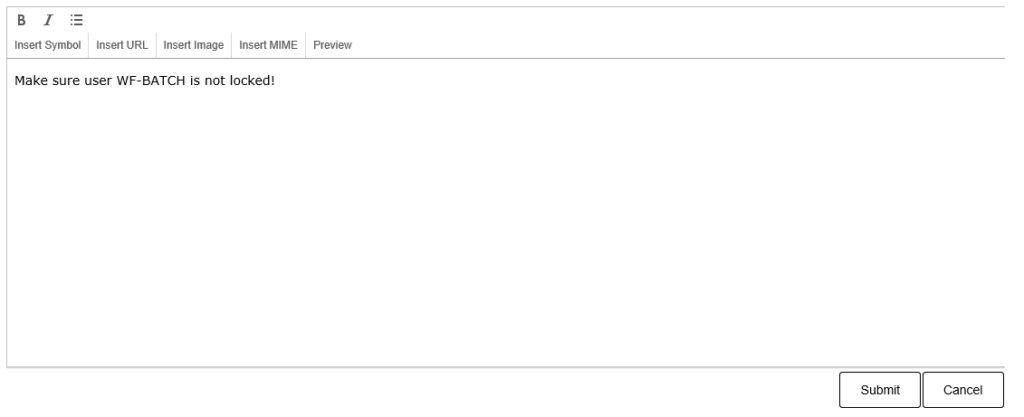

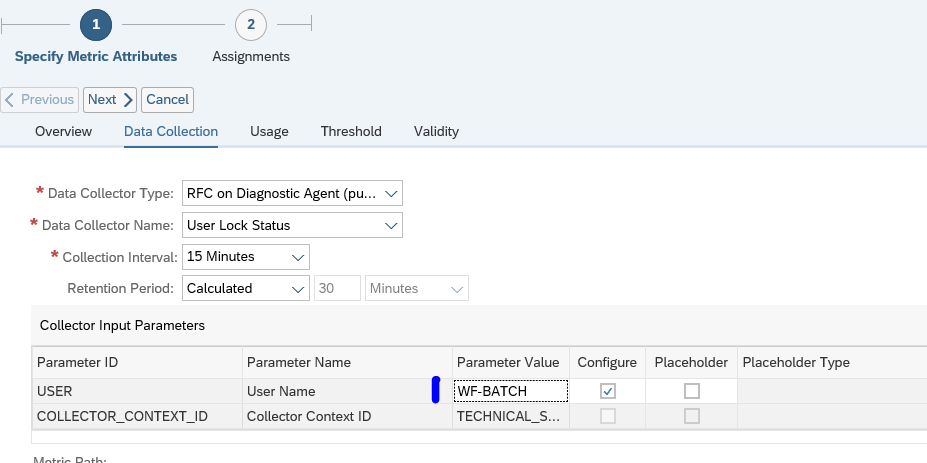

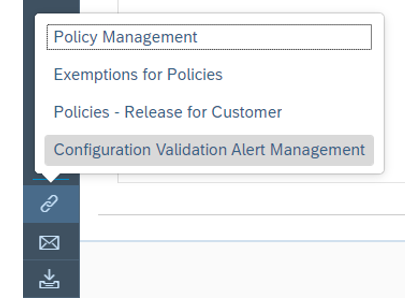

Setting up the integration

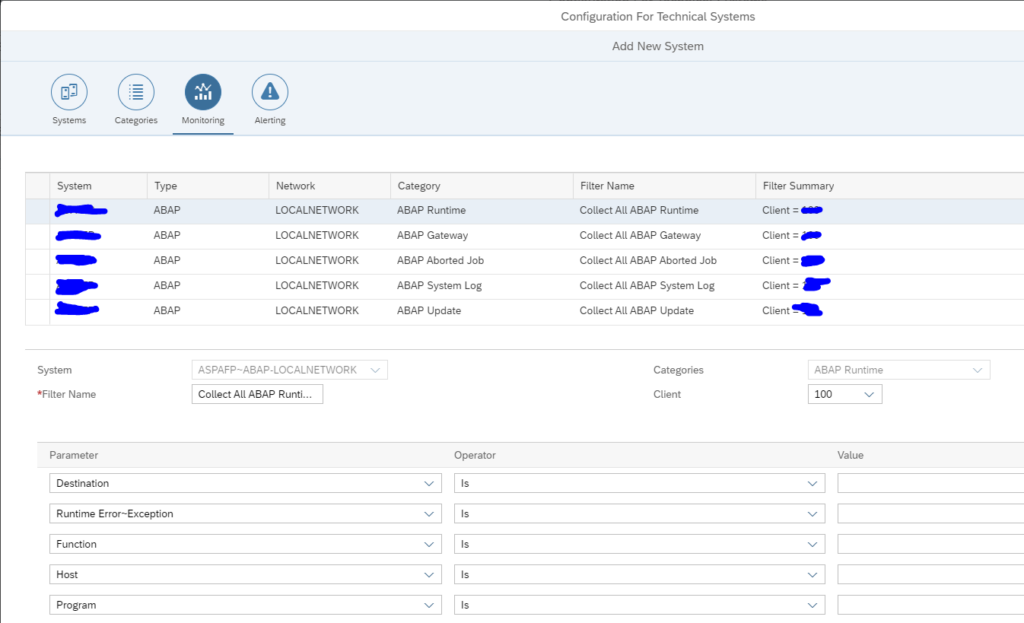

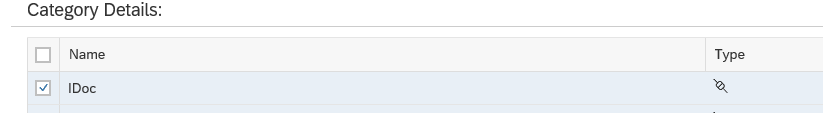

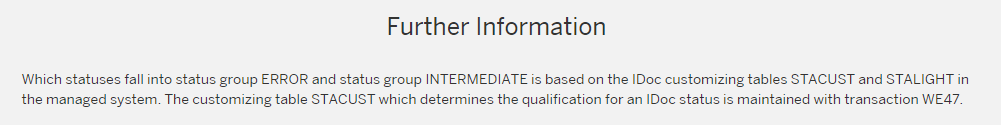

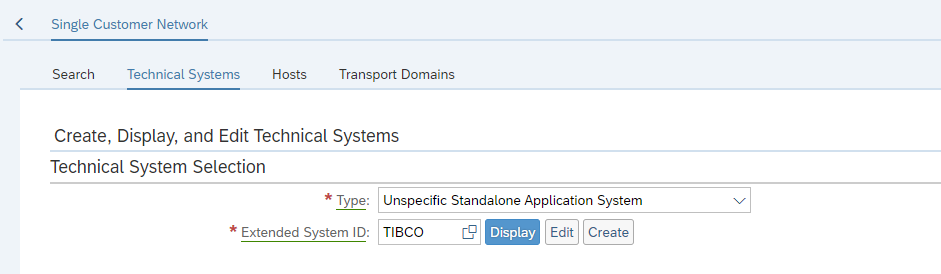

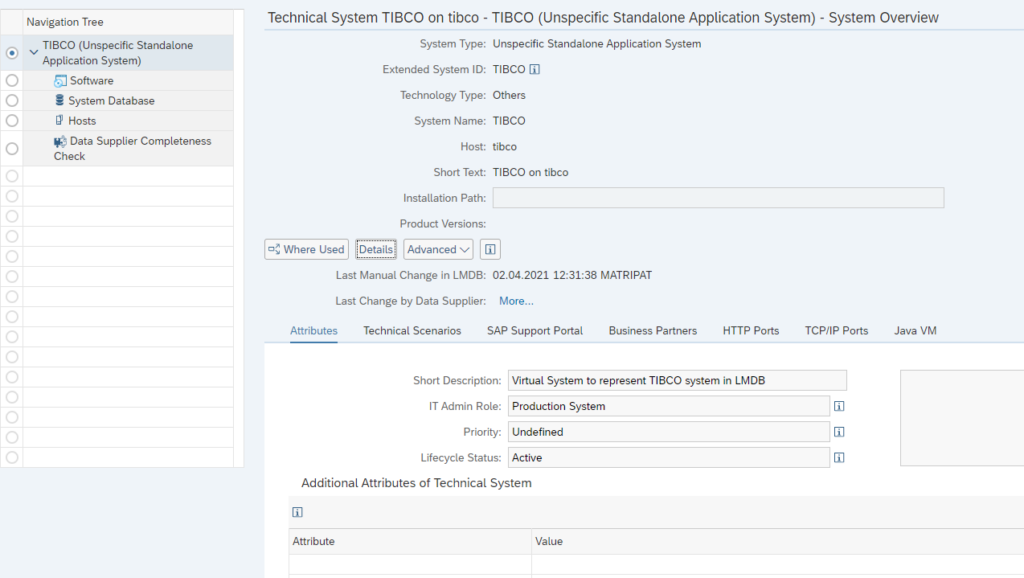

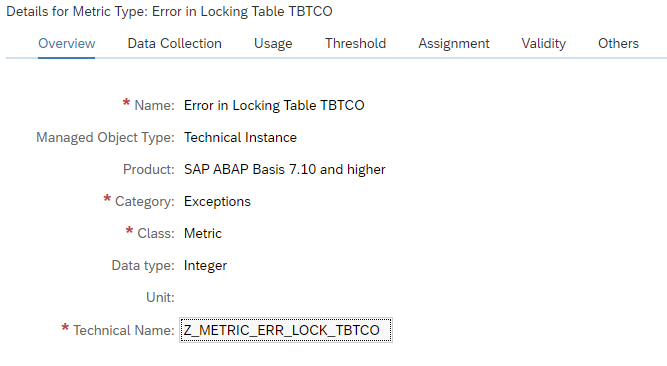

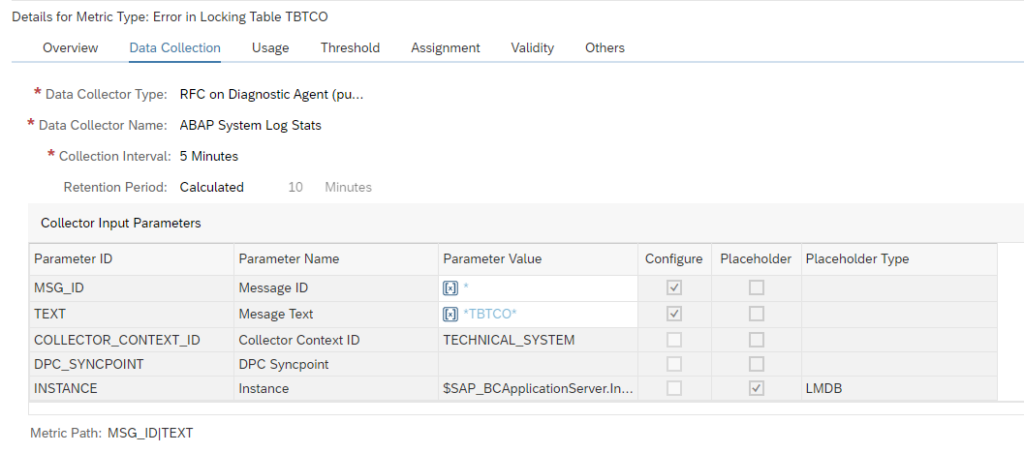

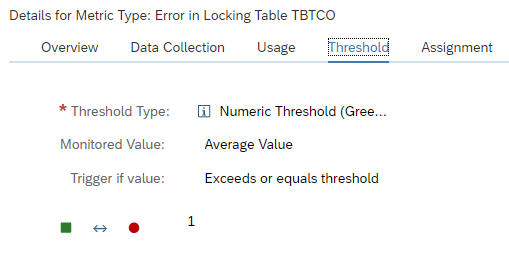

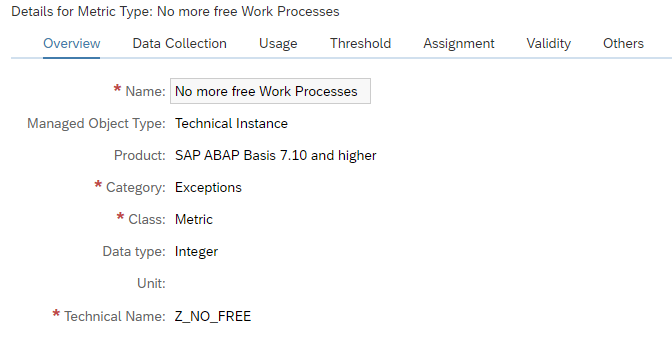

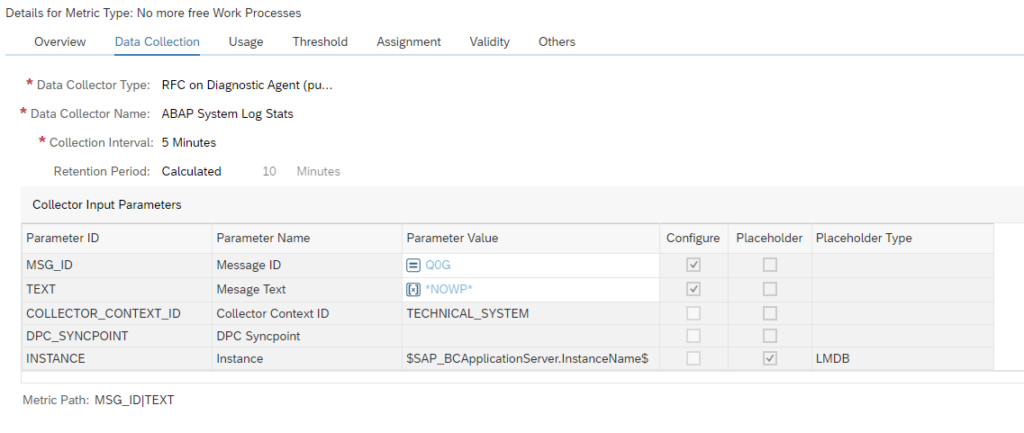

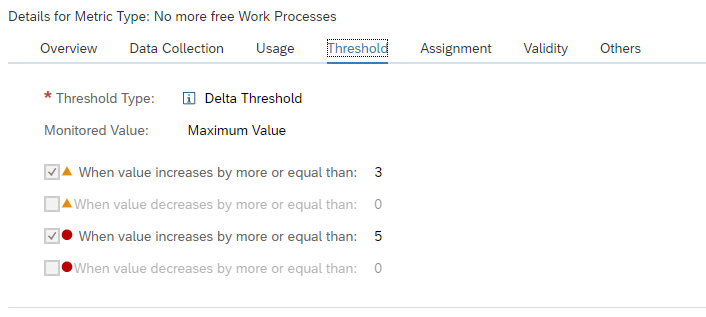

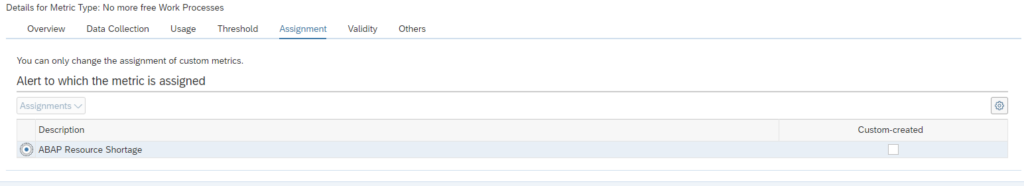

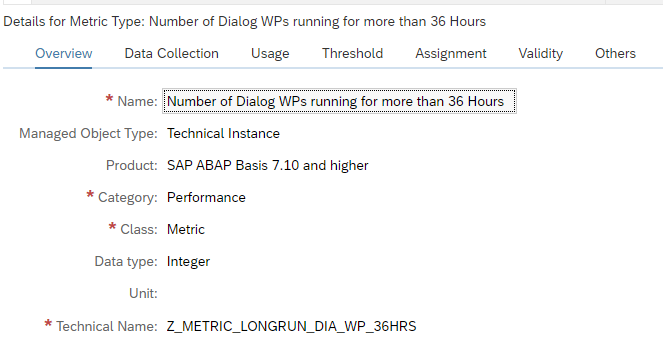

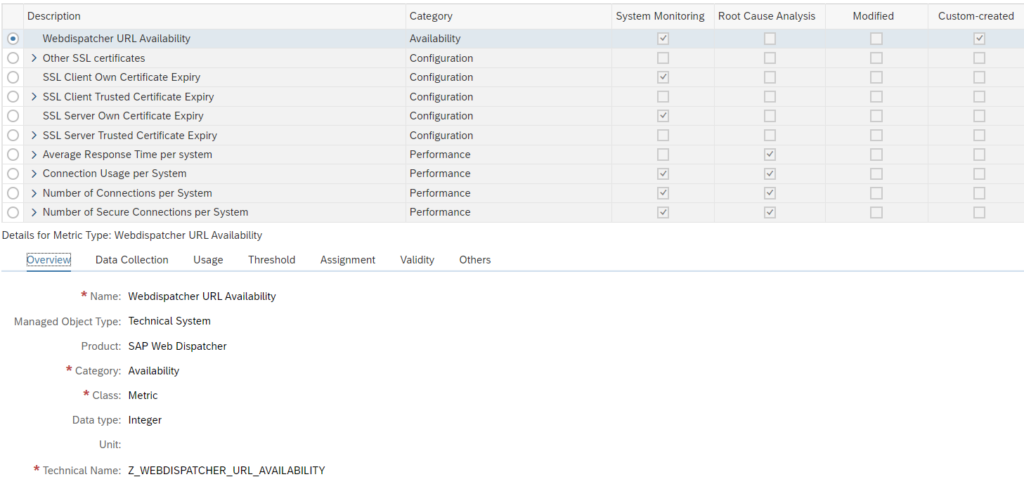

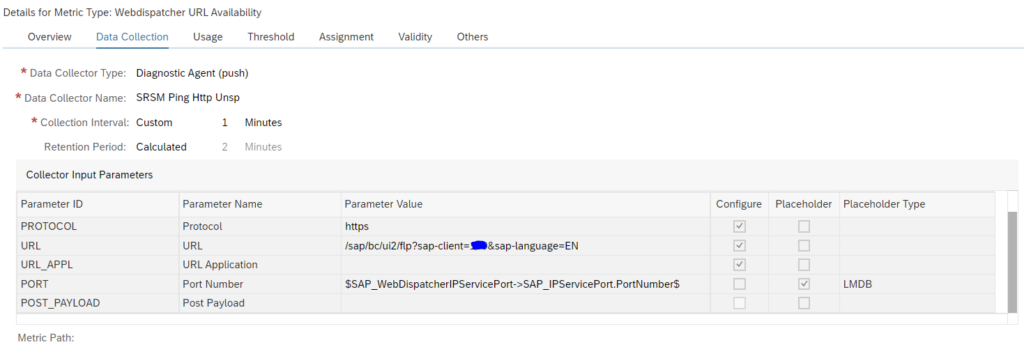

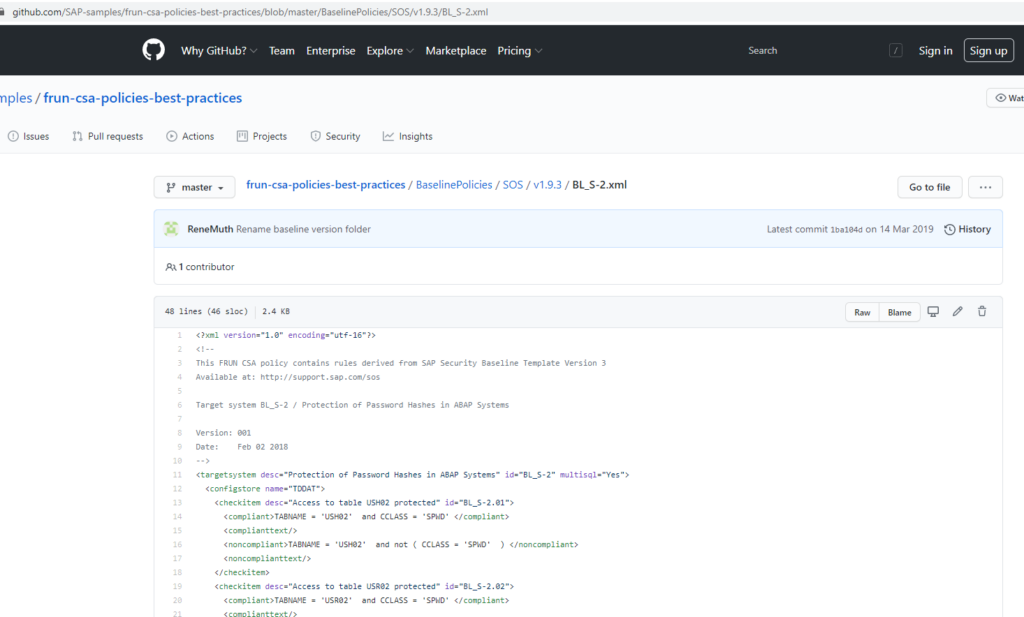

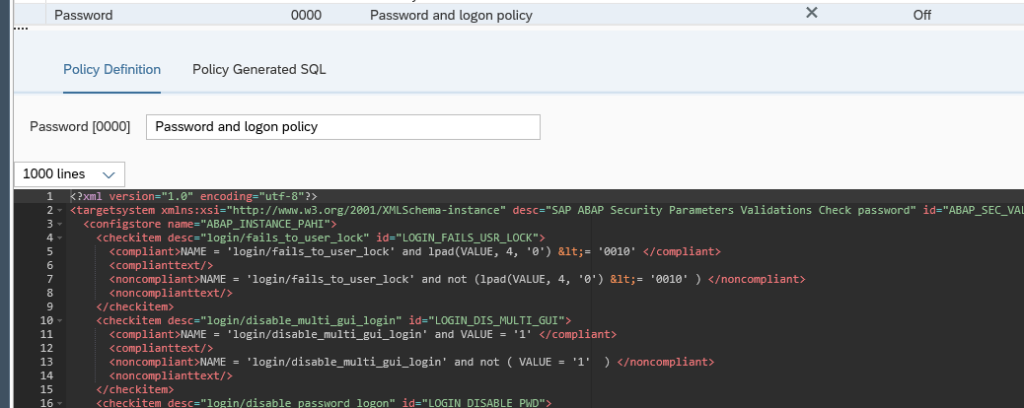

To set up the integration with ServiceNow, you must implement the AEM third-party consumer connection BADI. The full manual for the BADI can be found on the SAP Focused Run Expert Portal.

The document describes the BADI in a generic way.

To call ServiceNow, use one of the following two integration methods:

- Call a web service: import the WSDL from ServiceNow, generate the proxy, and configure the logon to ServiceNow in SOAMANAGER. ABAP code in the BADI then calls the proxy. See this blog for the generic setup of web service consumption in the ABAP stack. The available ServiceNow web services are listed on the ServiceNow page.

- Call the ServiceNow MID server: in this case you call a REST interface, which requires an HTTP RFC destination to the MID server. ABAP code in the BADI makes the REST call. See this blog for the generic use of REST calls in the ABAP stack. The REST API references from ServiceNow can be found on the ServiceNow page.

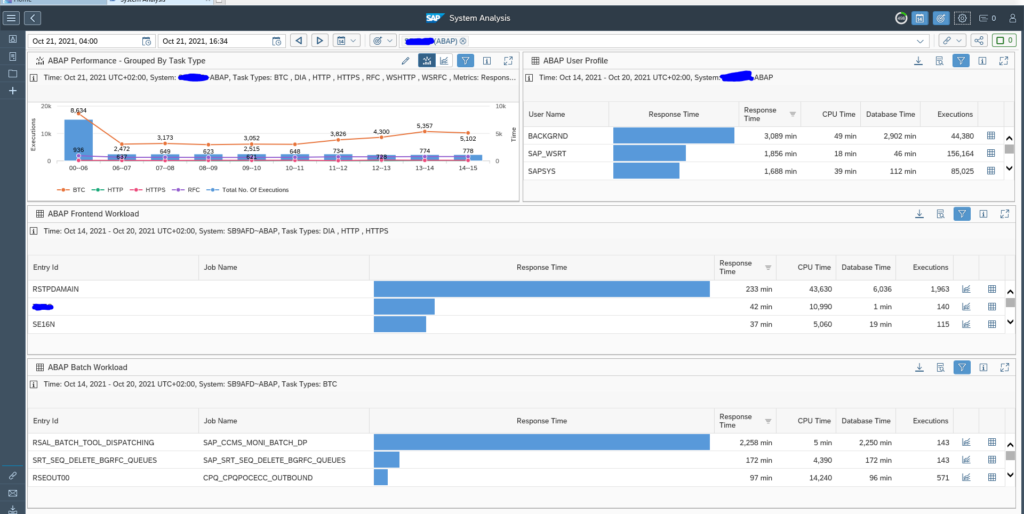

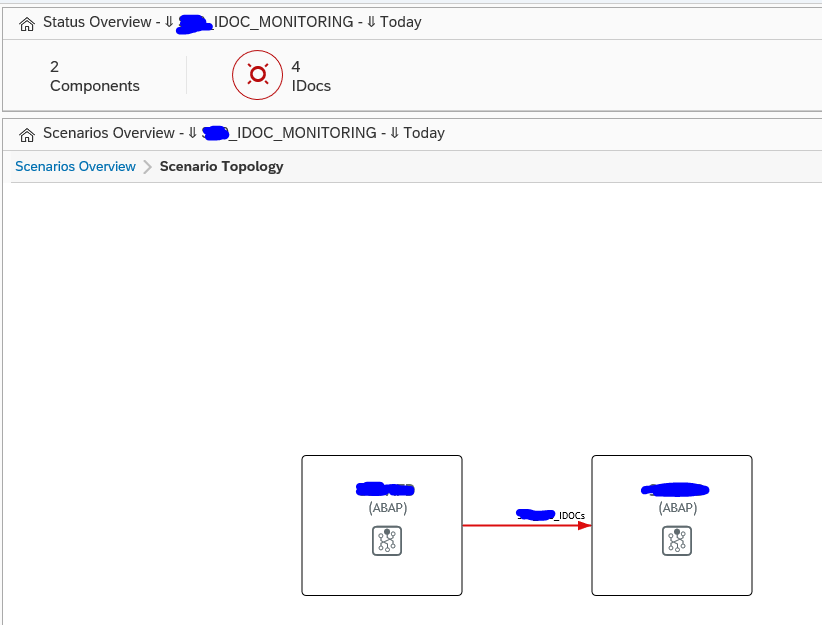

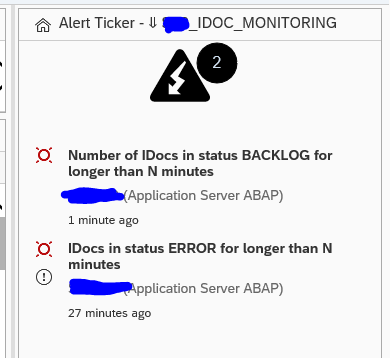

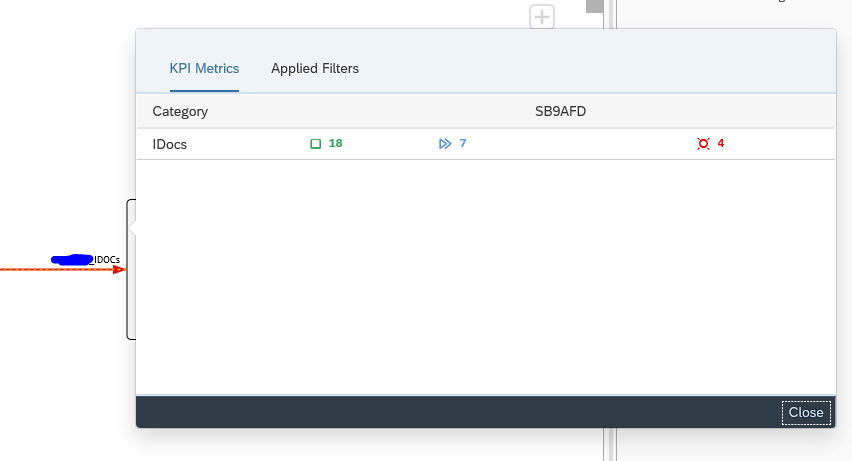

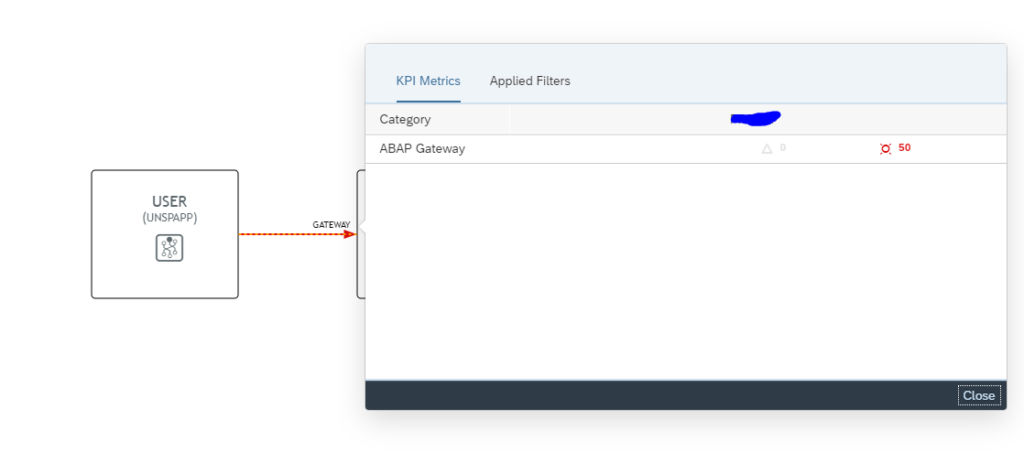

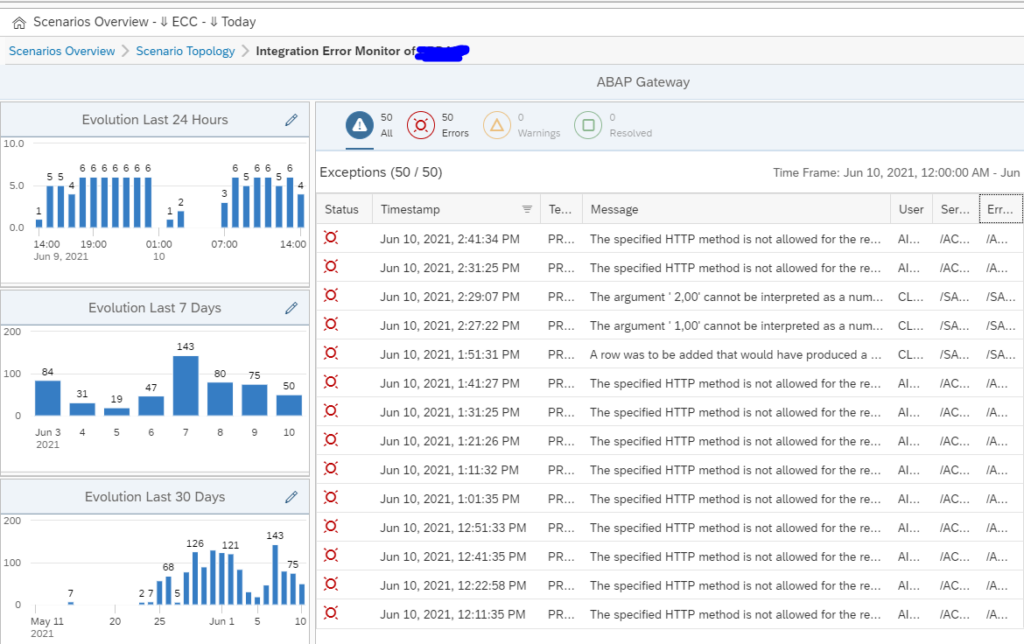

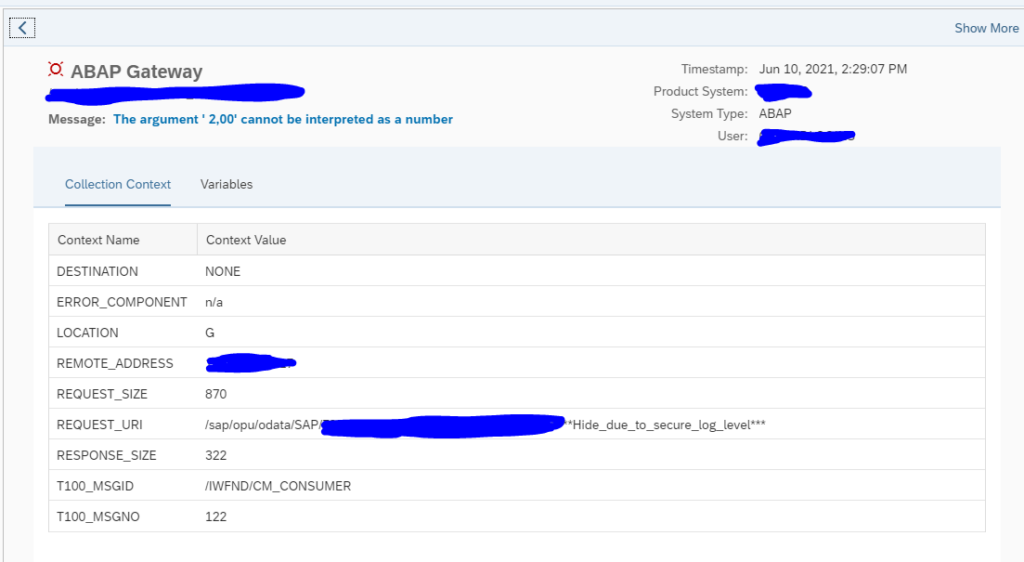

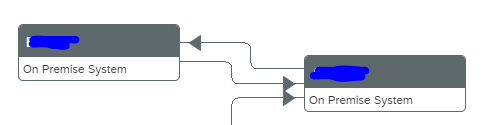

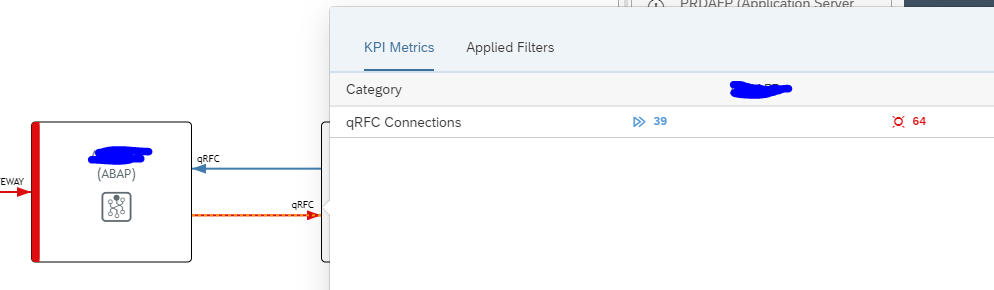

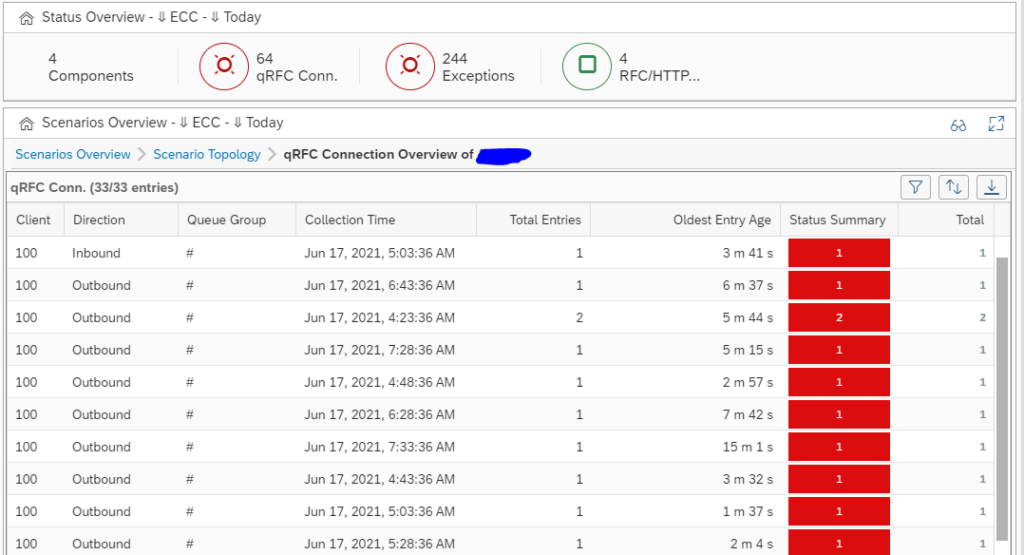

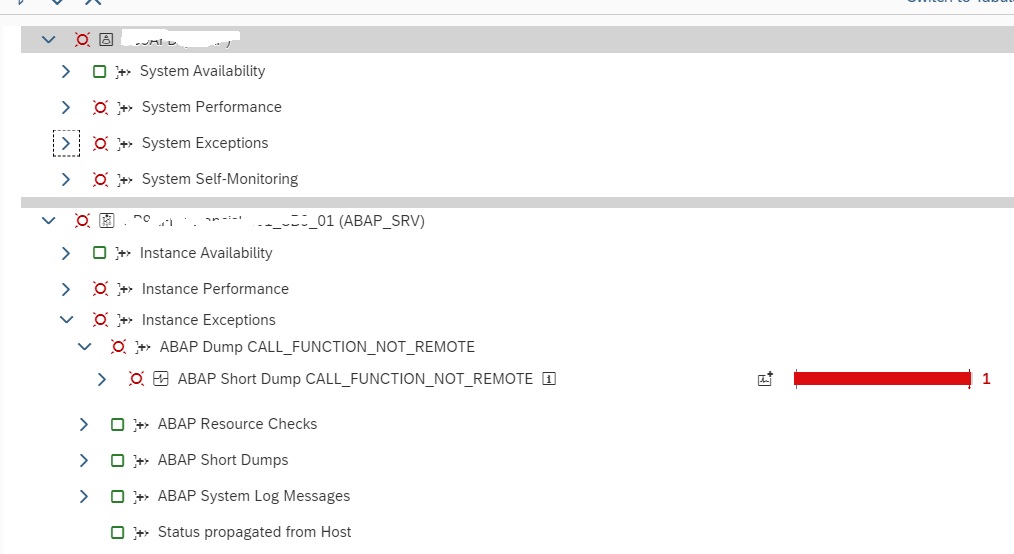

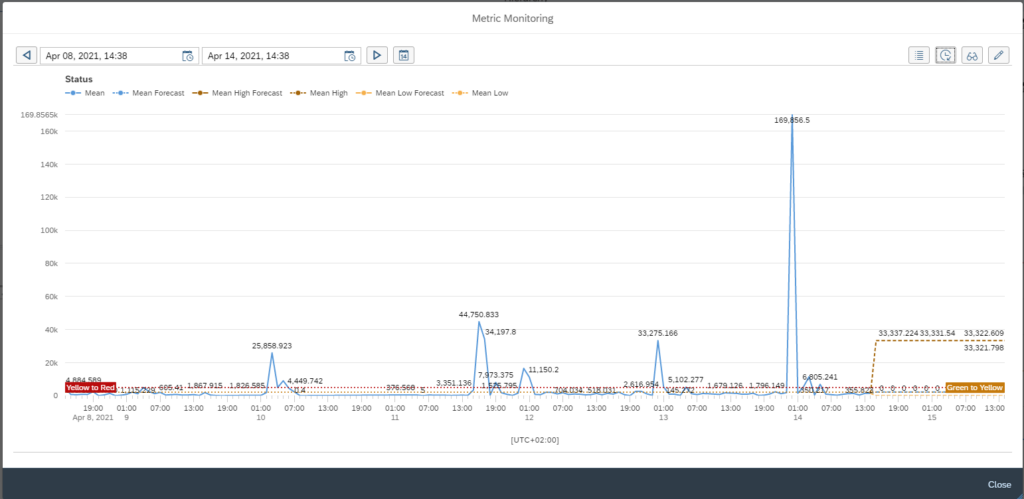

Alert trigger integration

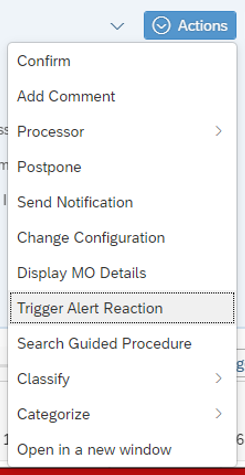

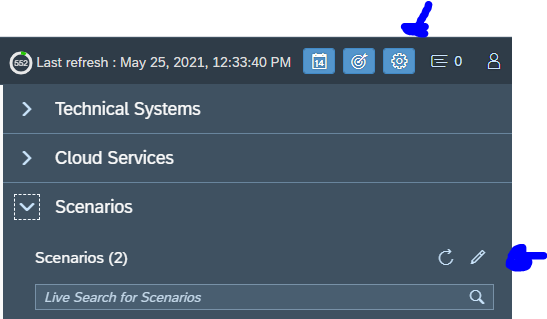

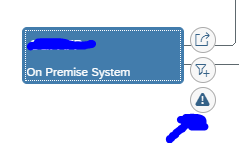

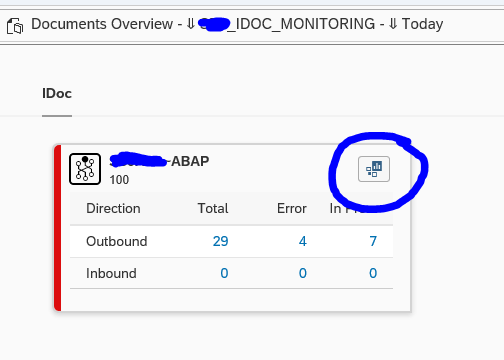

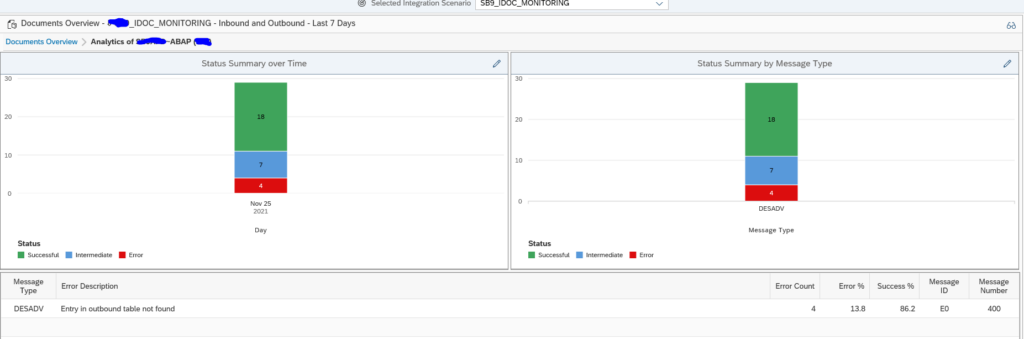

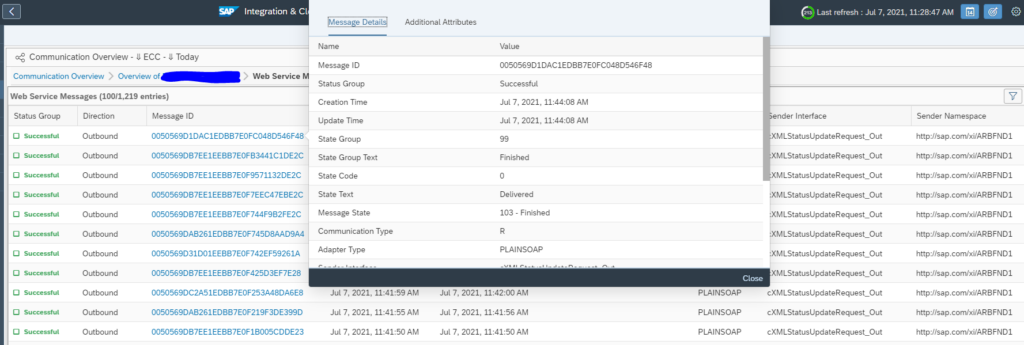

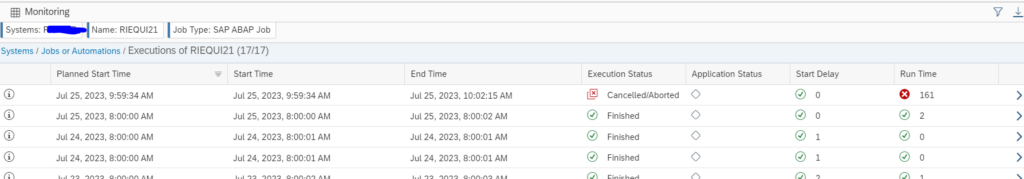

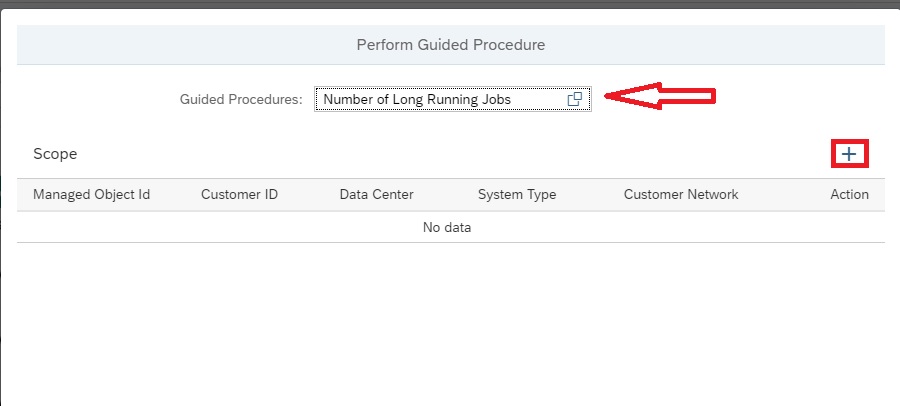

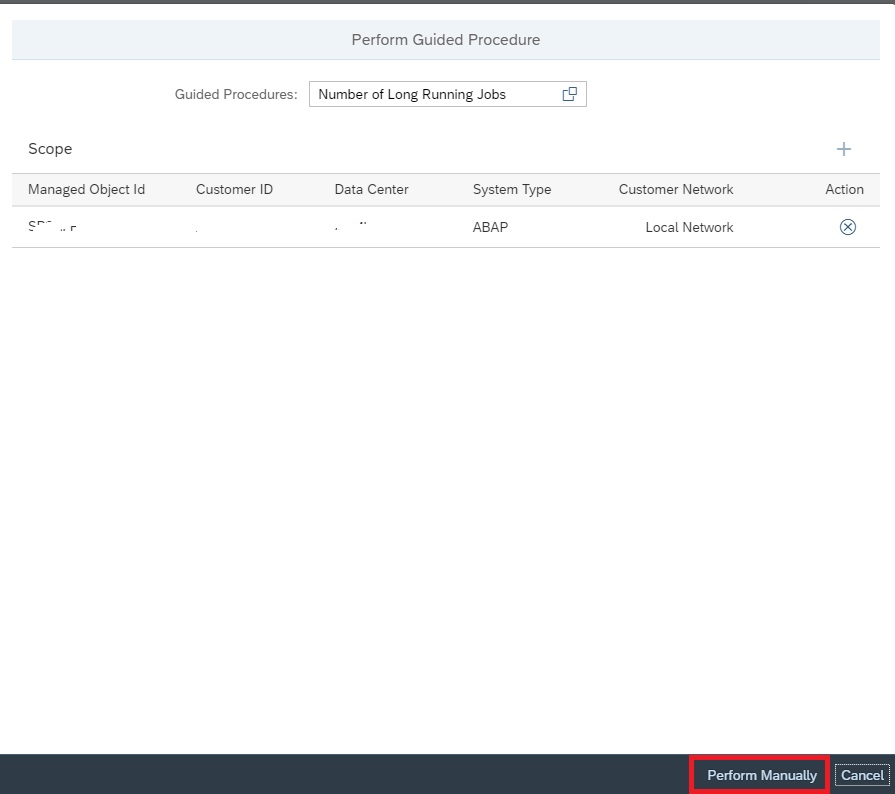

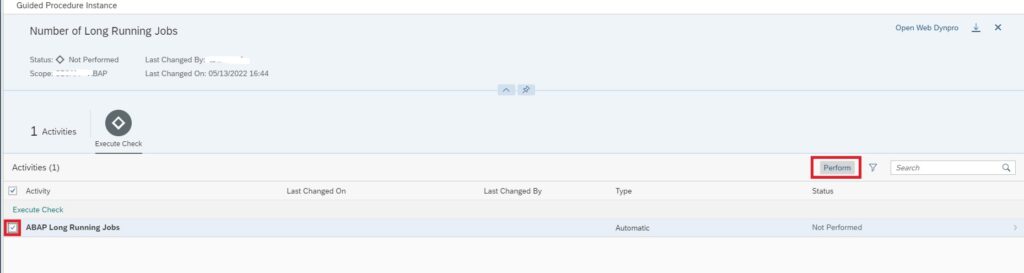

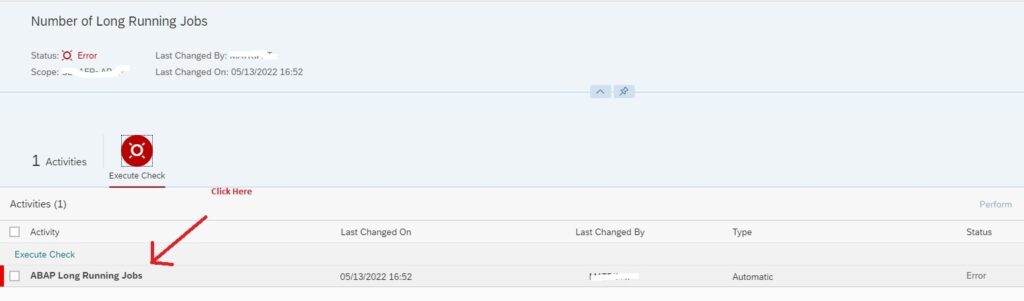

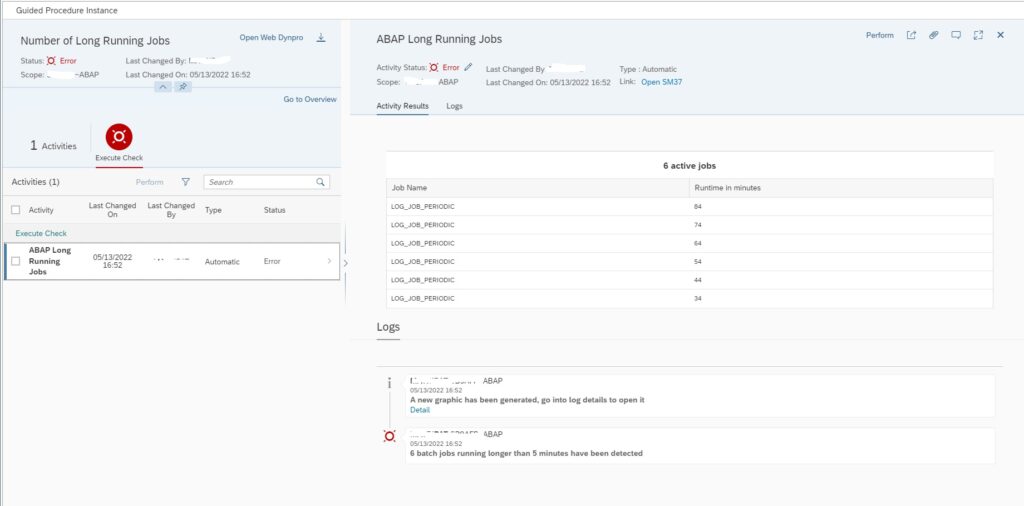

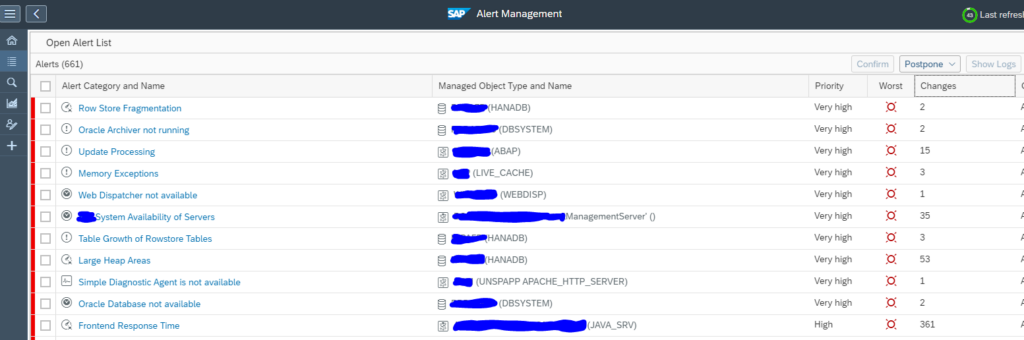

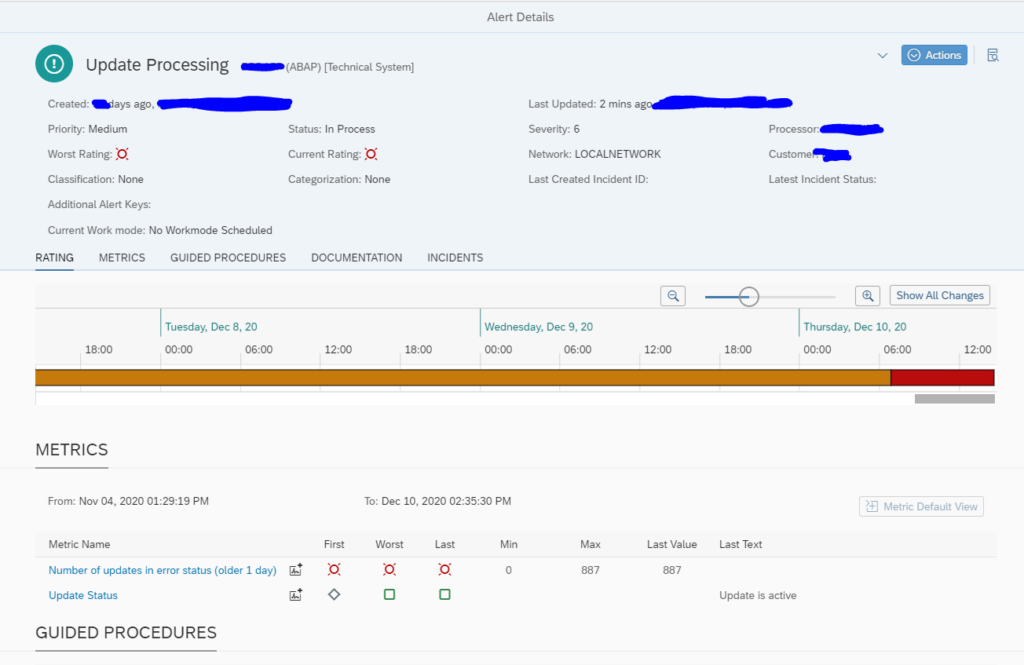

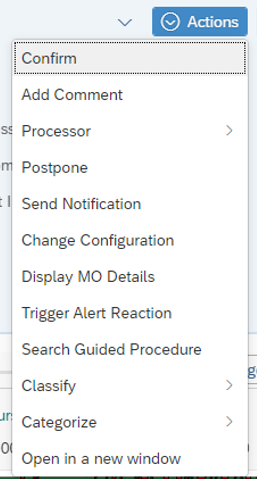

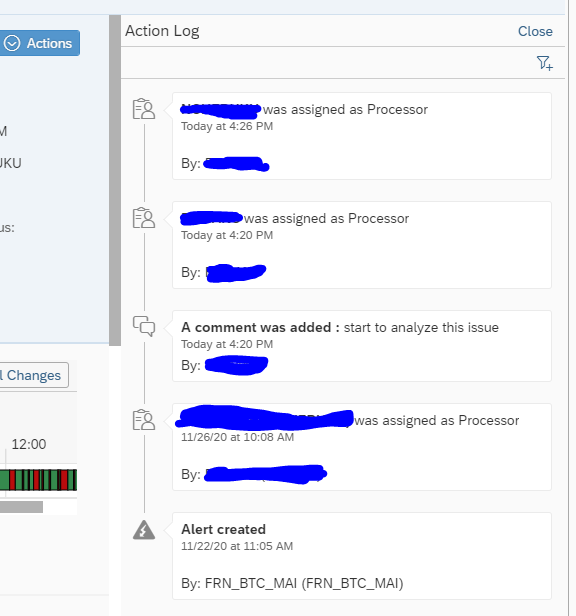

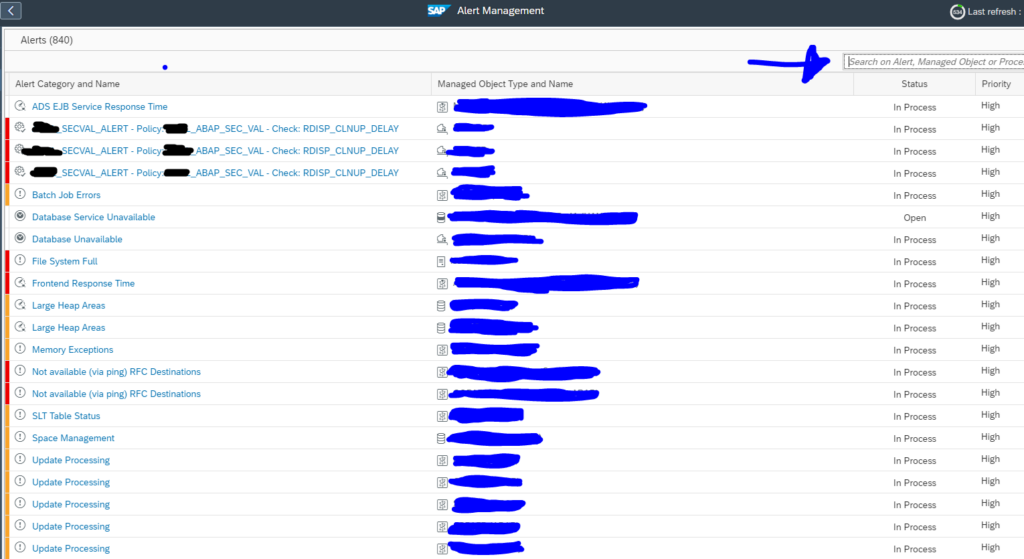

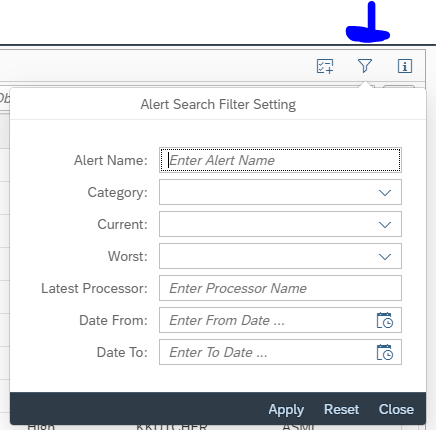

When you have an alert open, you can trigger the alert reaction:

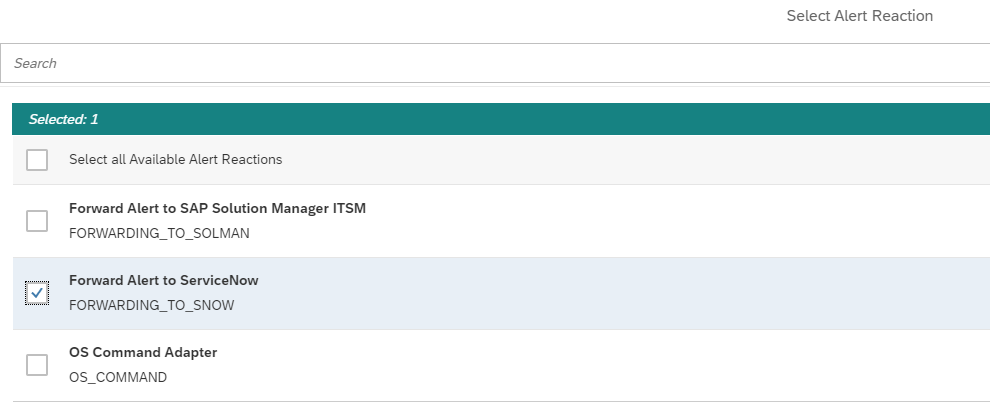

Then select the reaction to forward to ServiceNow:

Within a few seconds, the alert is created in ServiceNow:

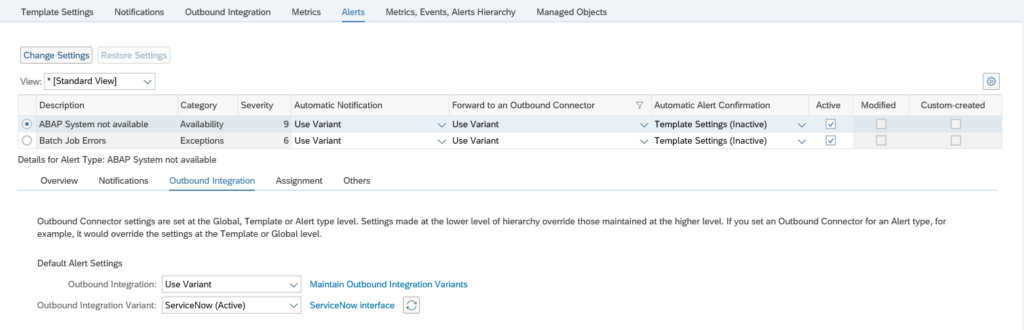

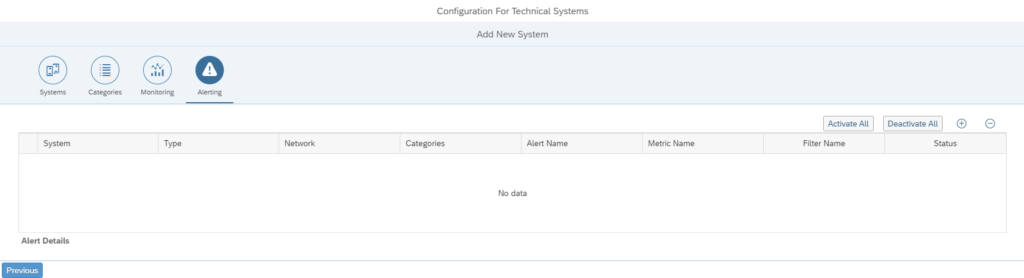

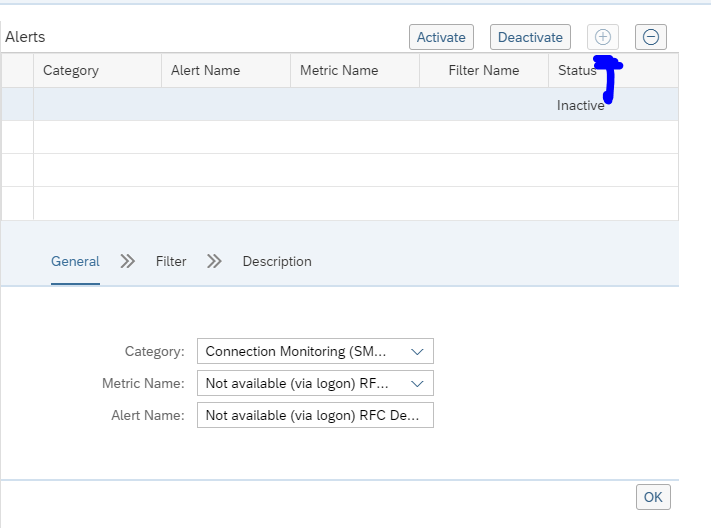

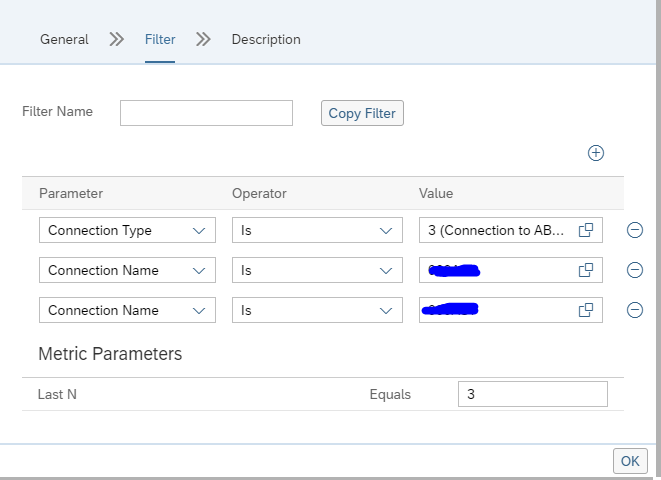

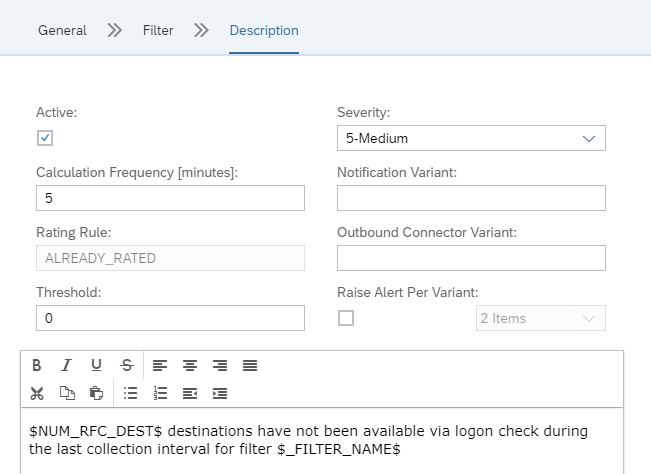

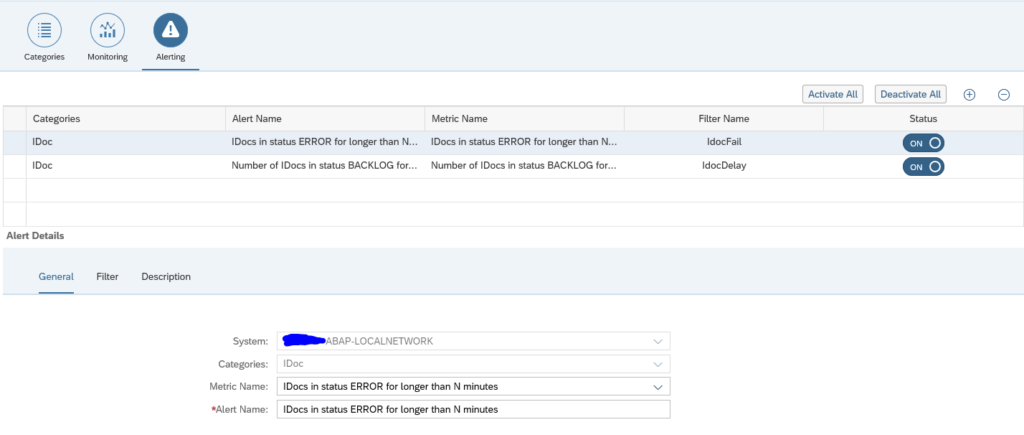

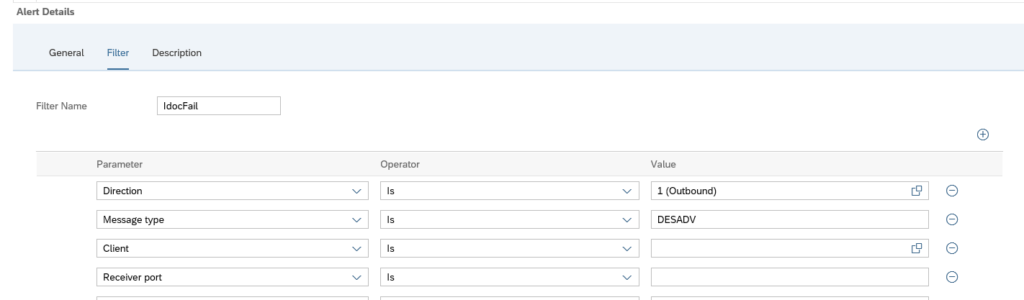

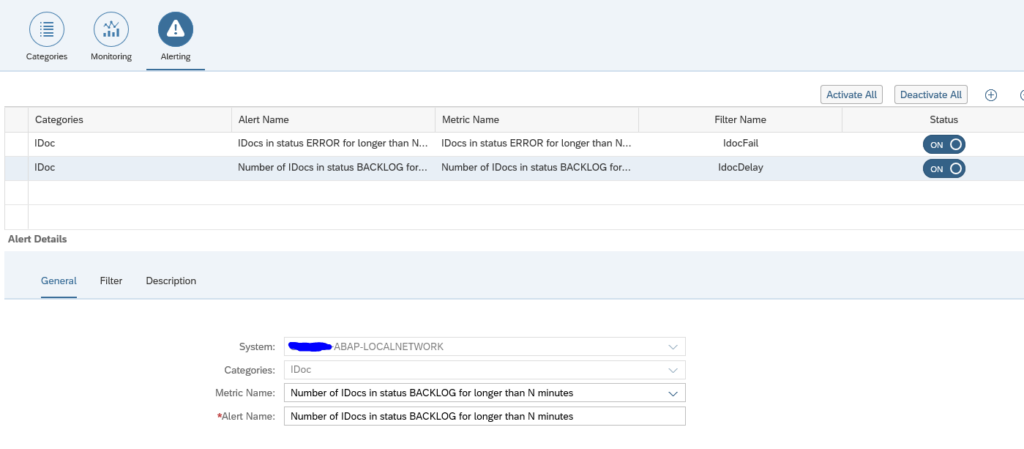

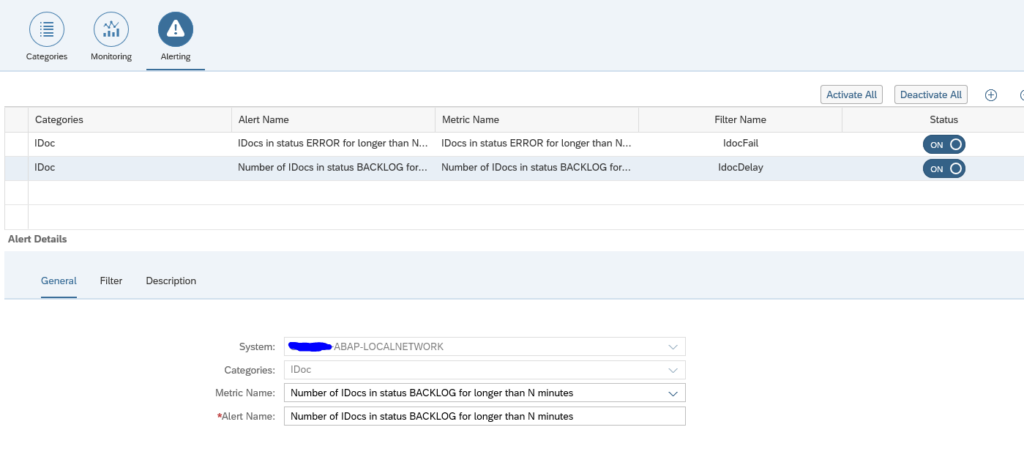

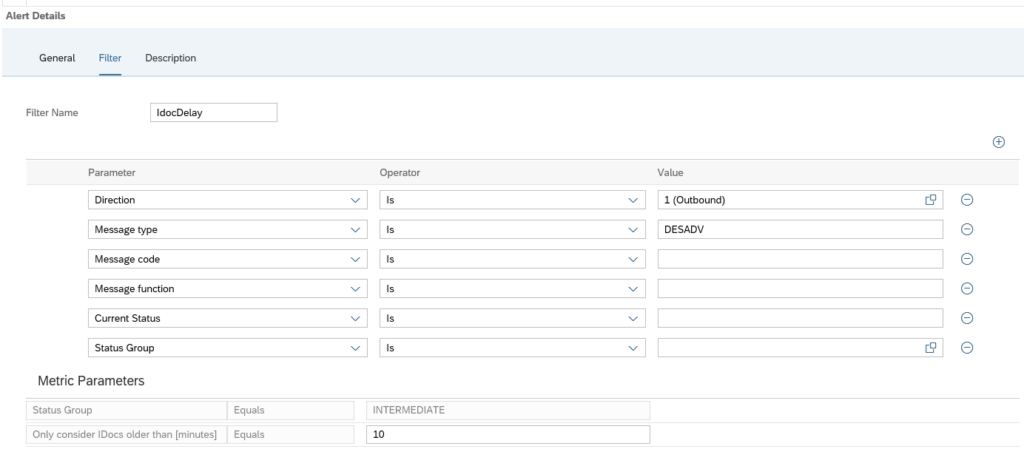

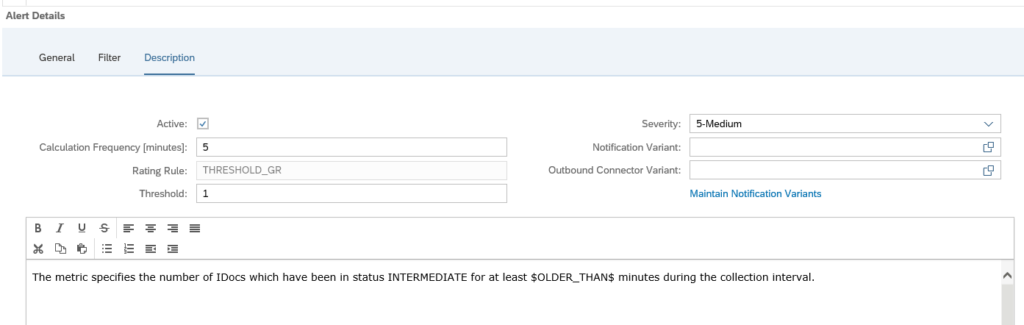

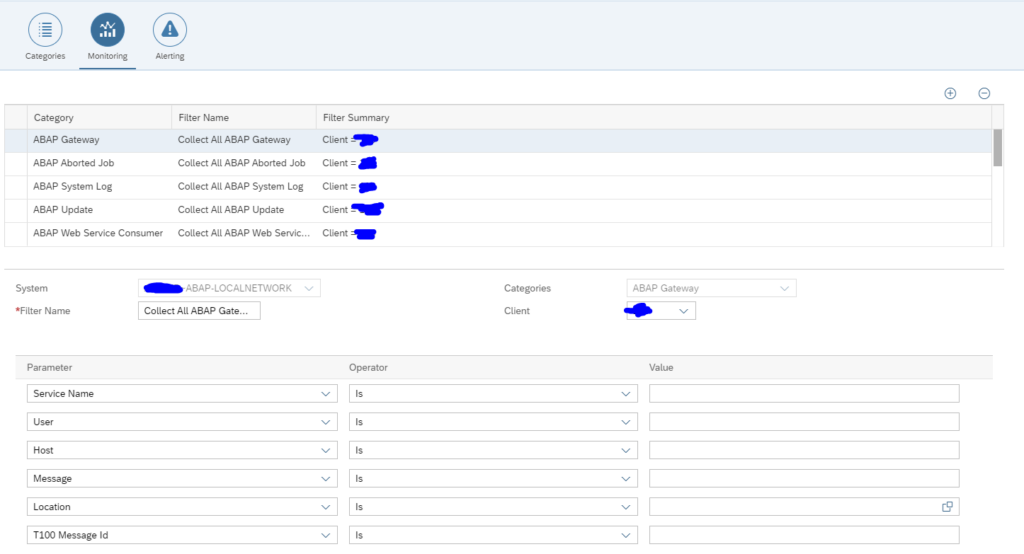

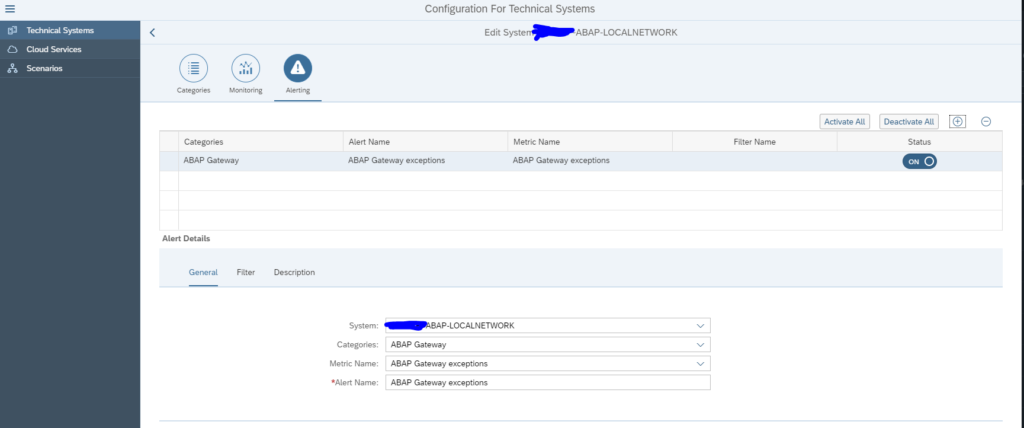

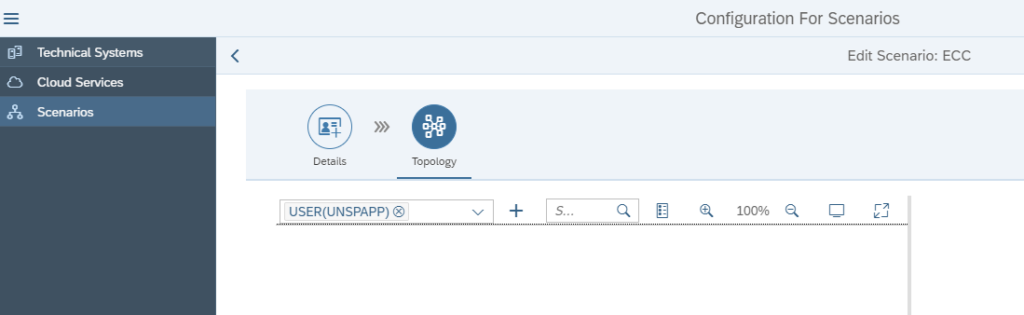

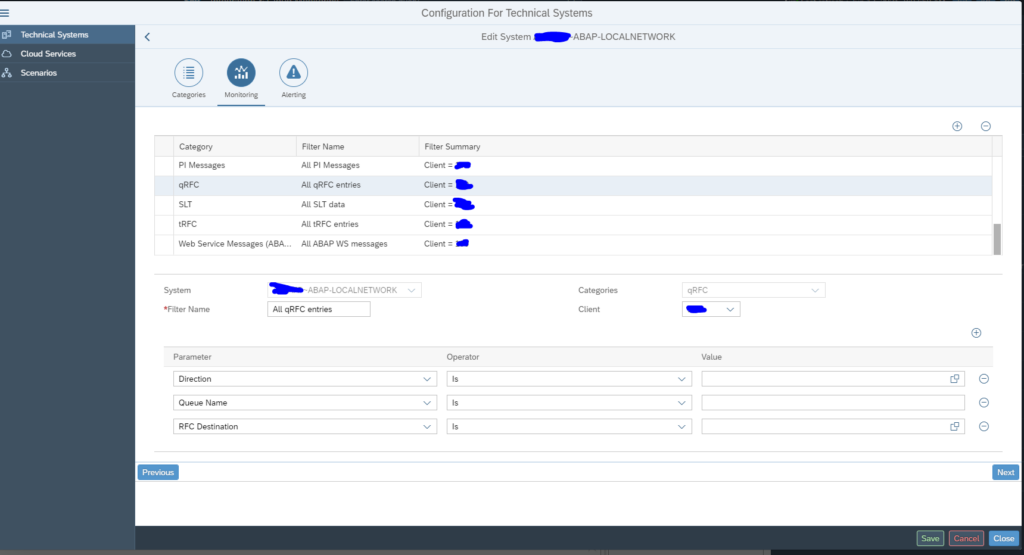

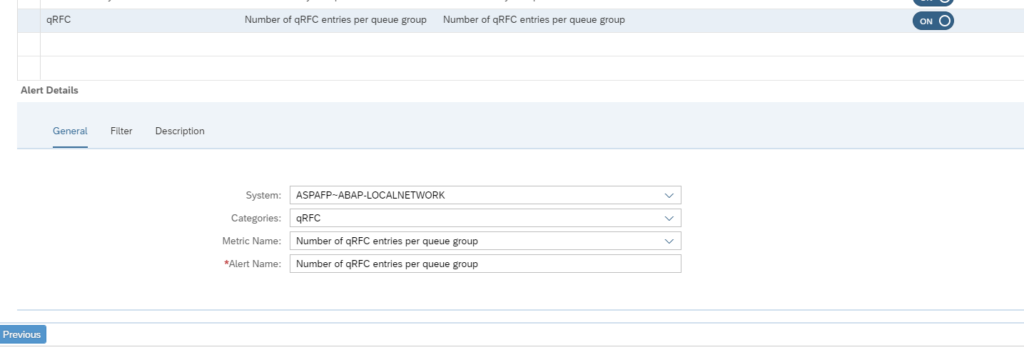

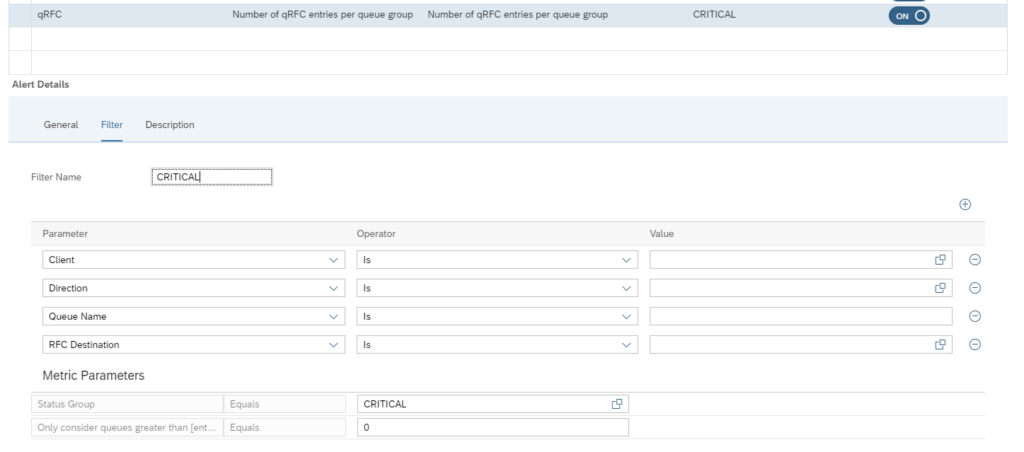

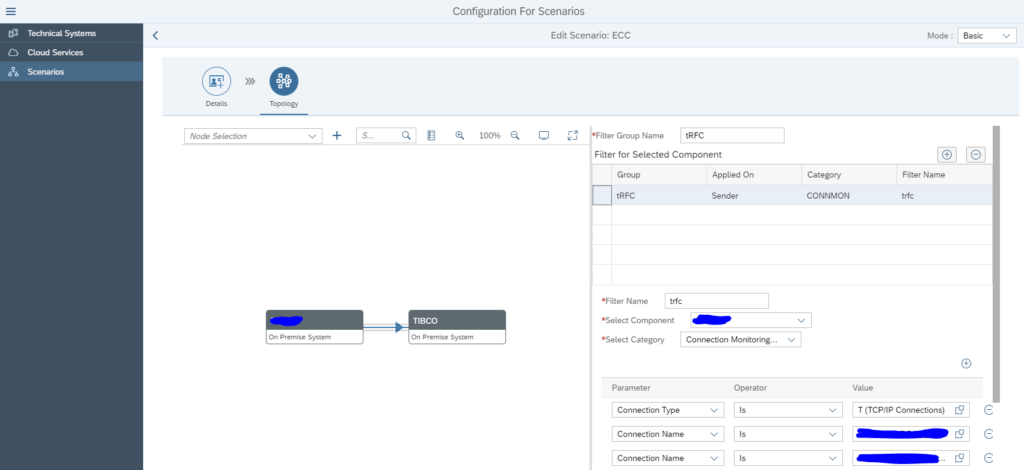

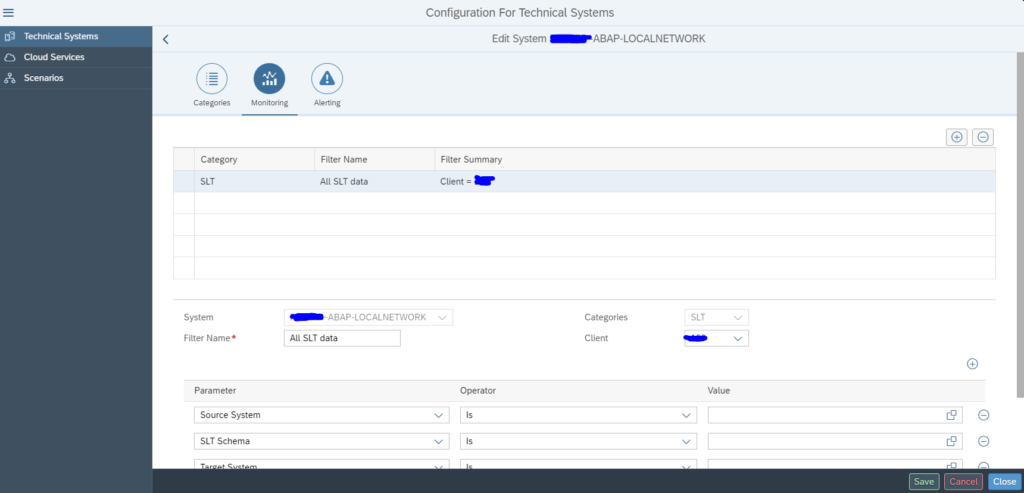

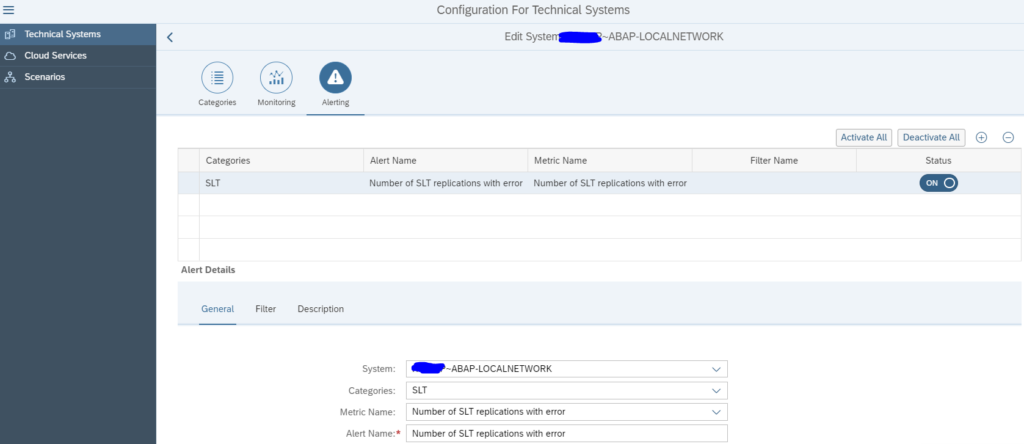

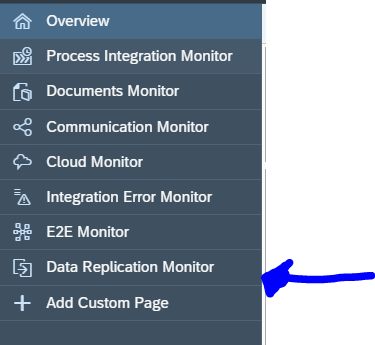

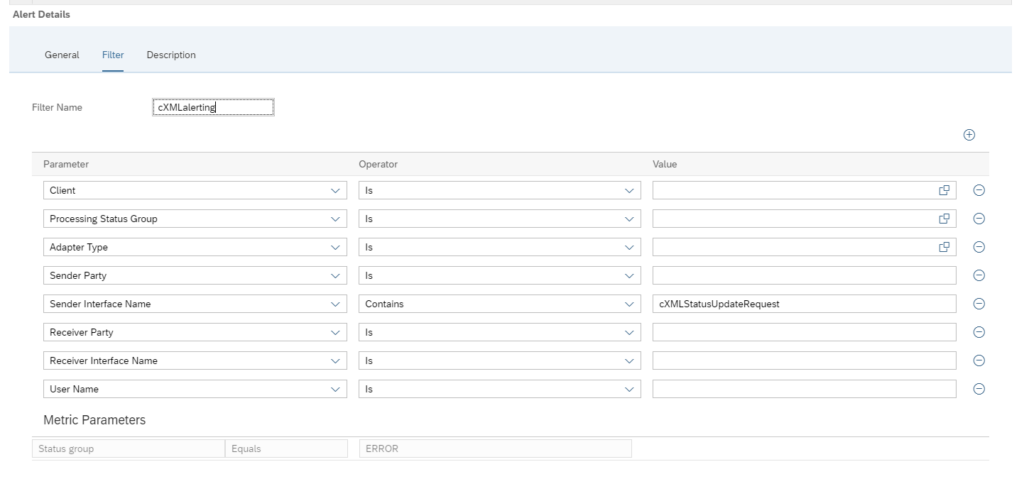

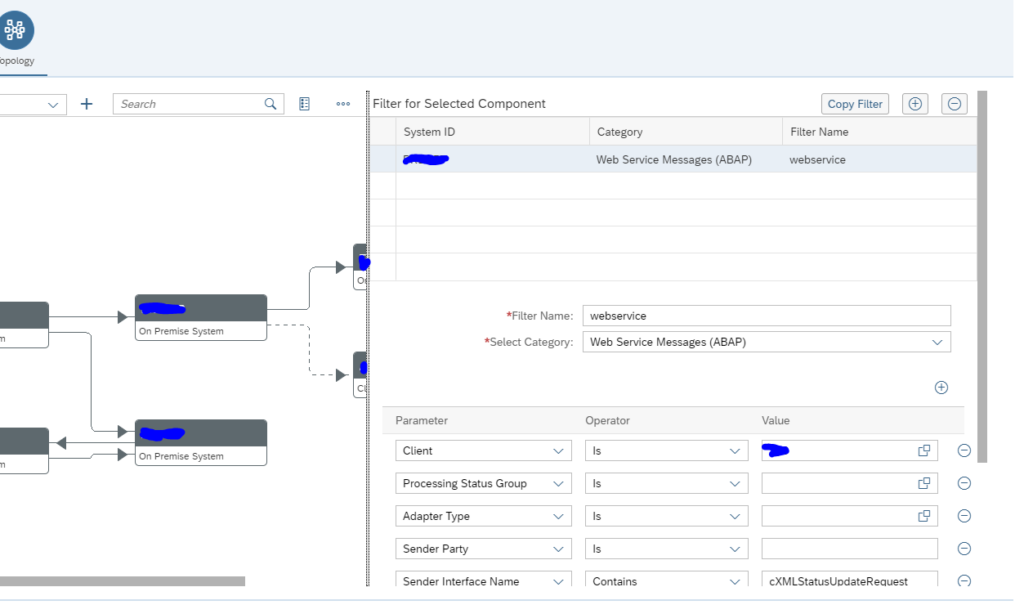

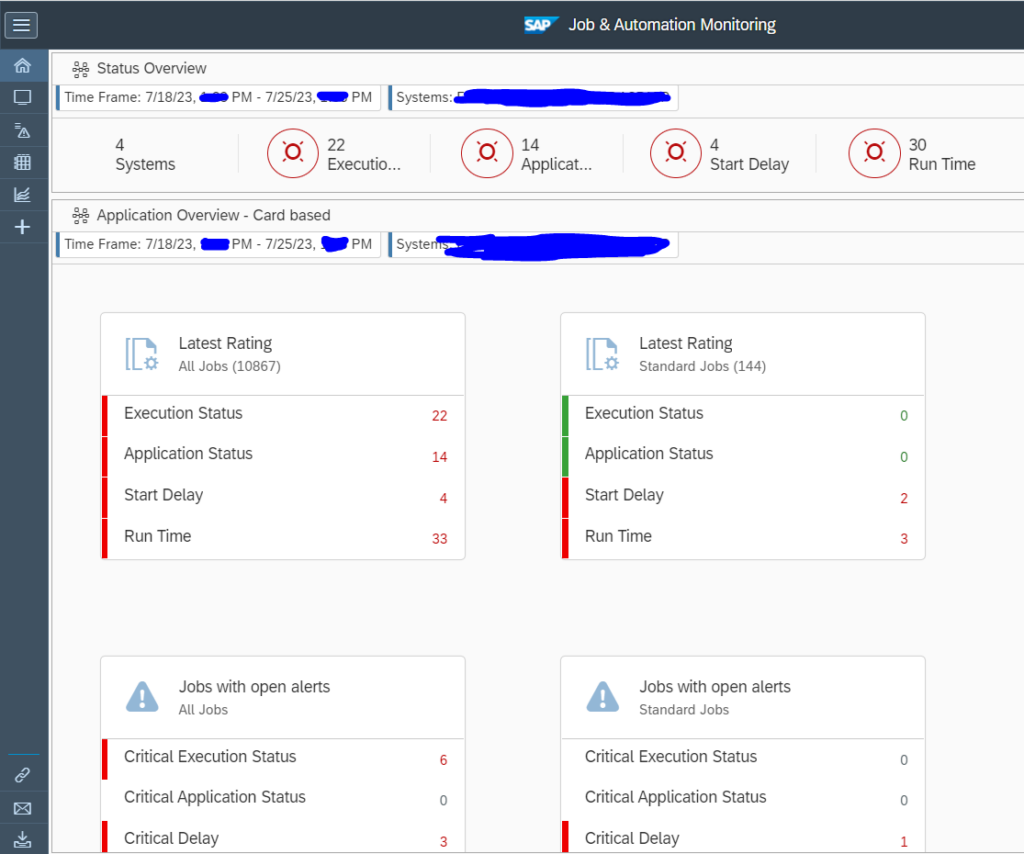

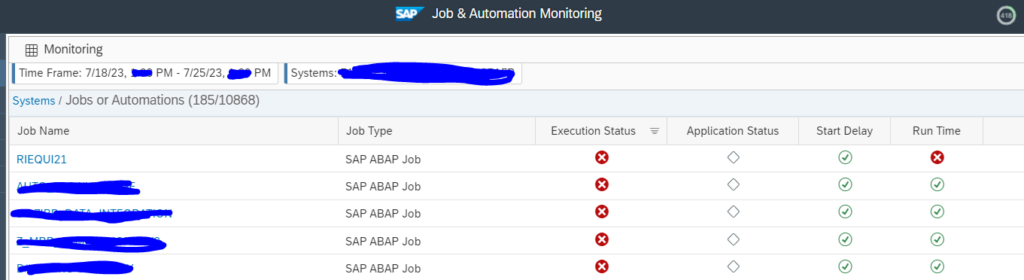

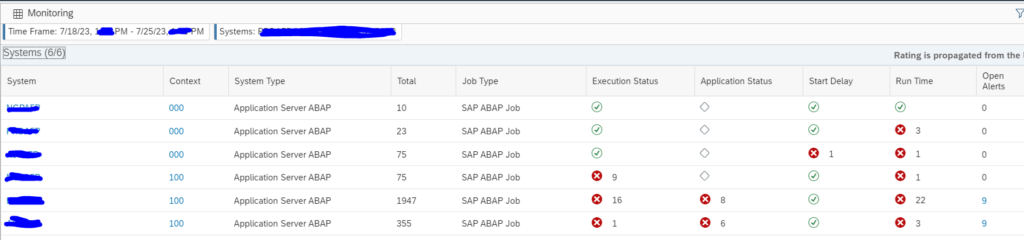

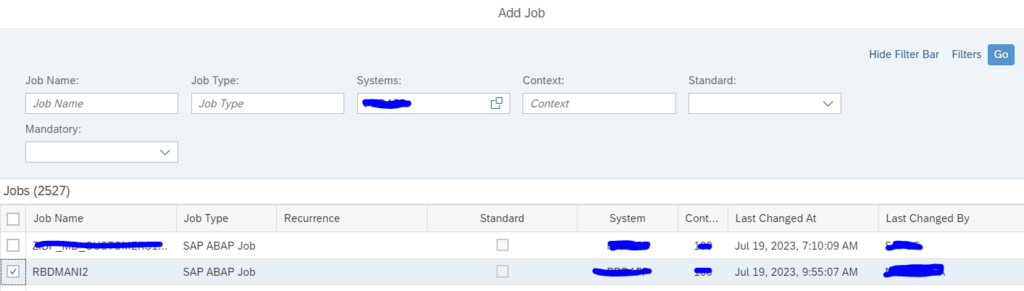

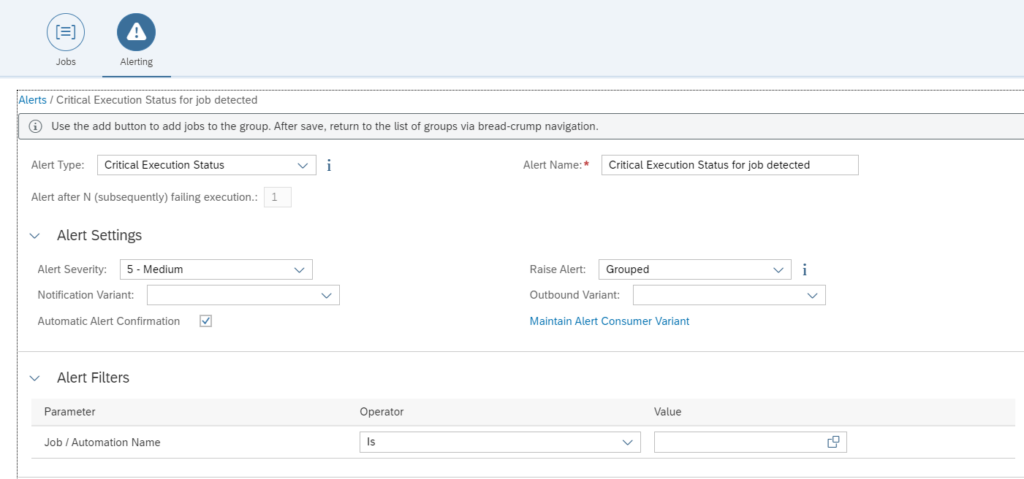

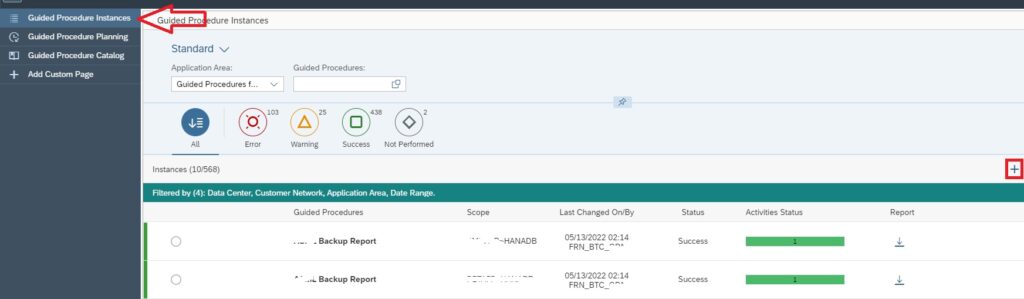

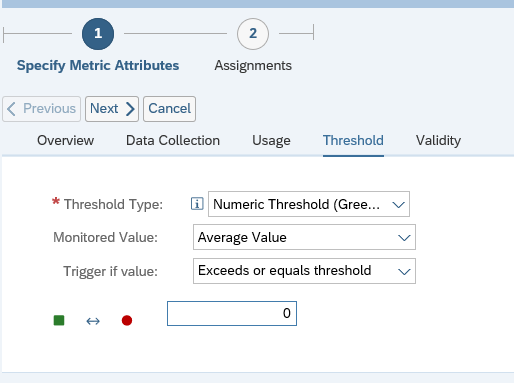

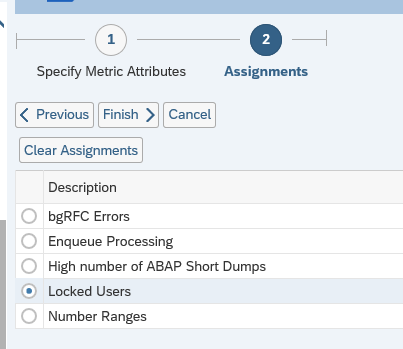

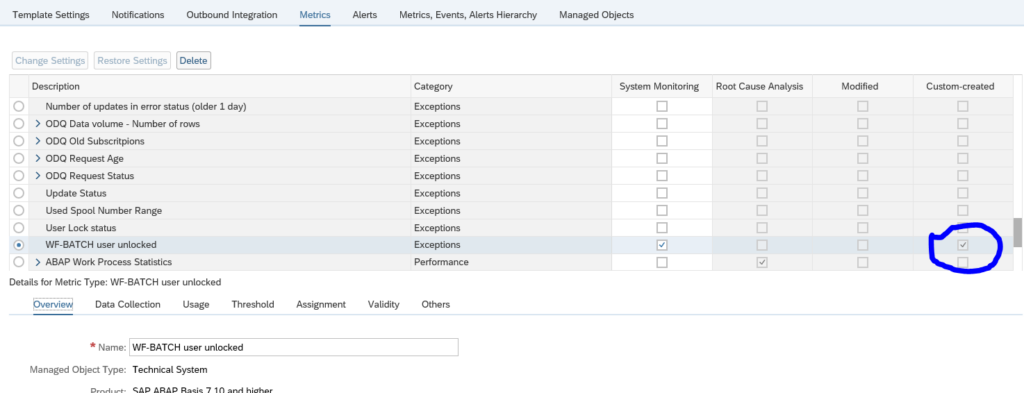

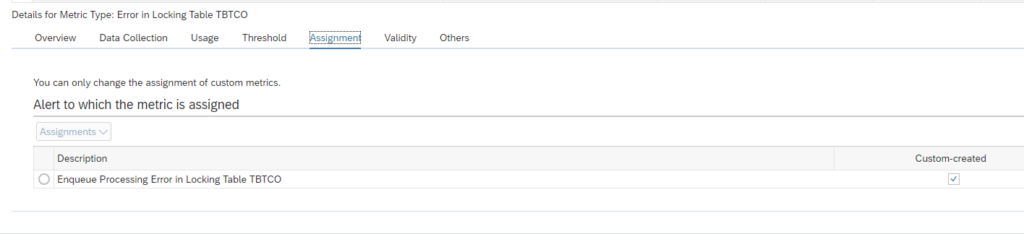

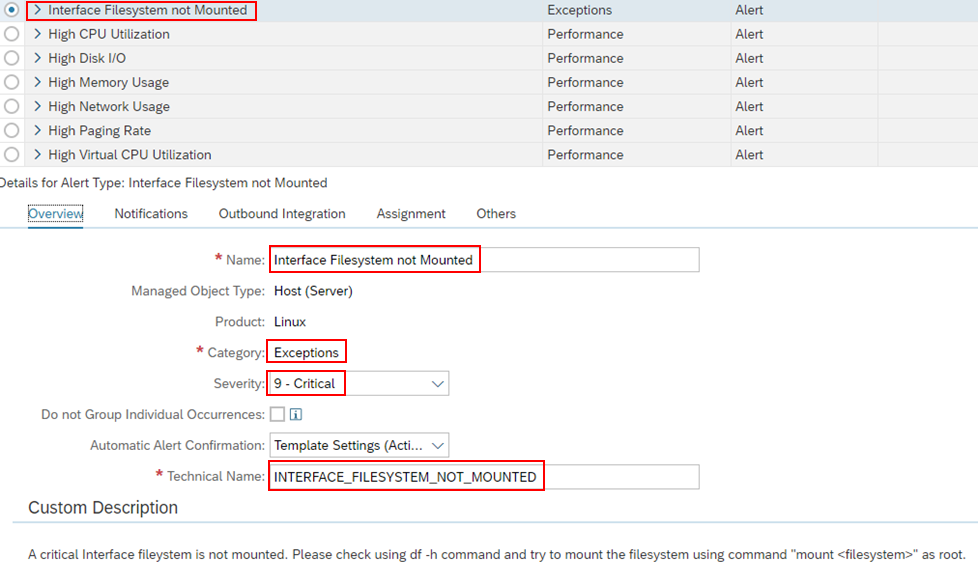

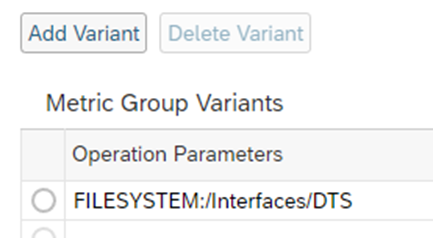

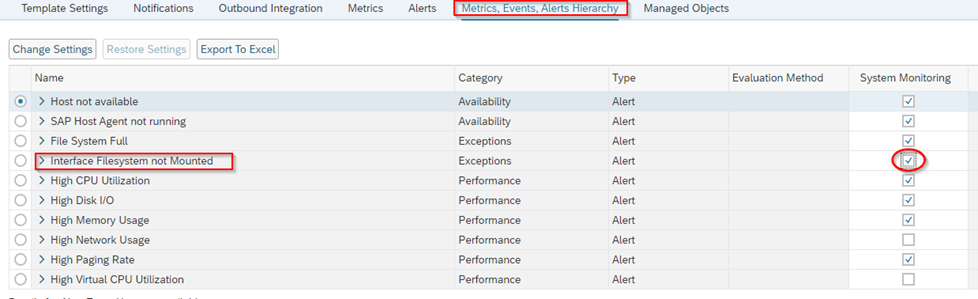

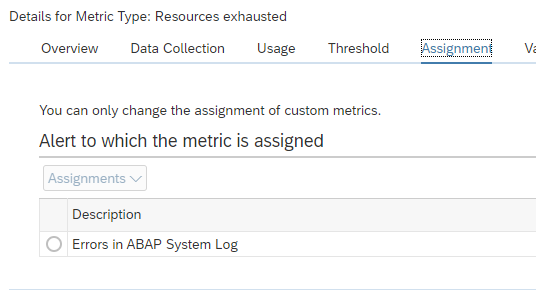

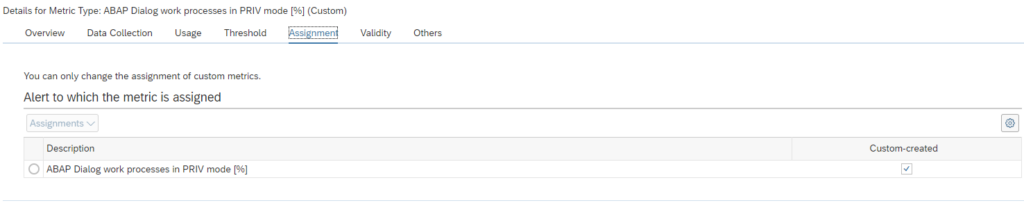

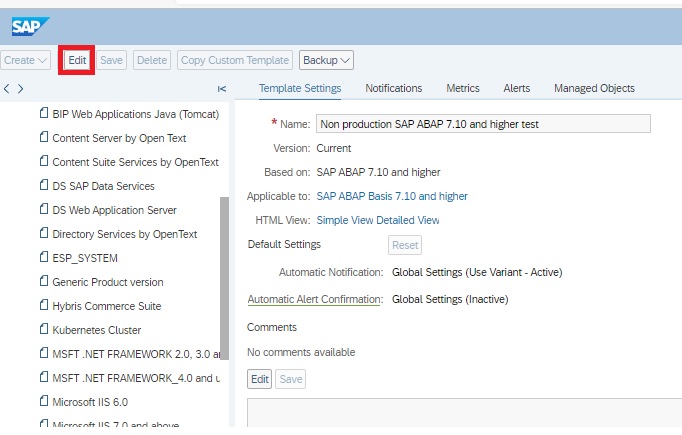

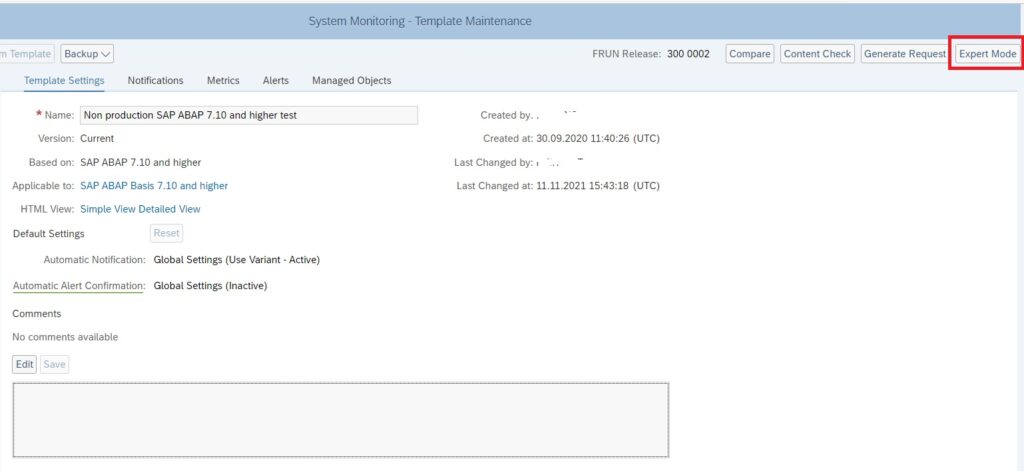

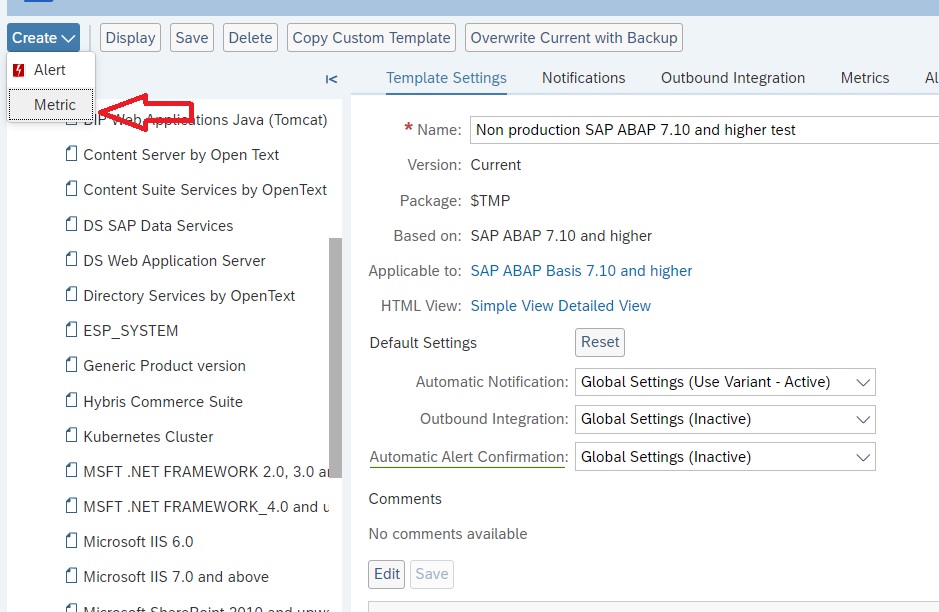

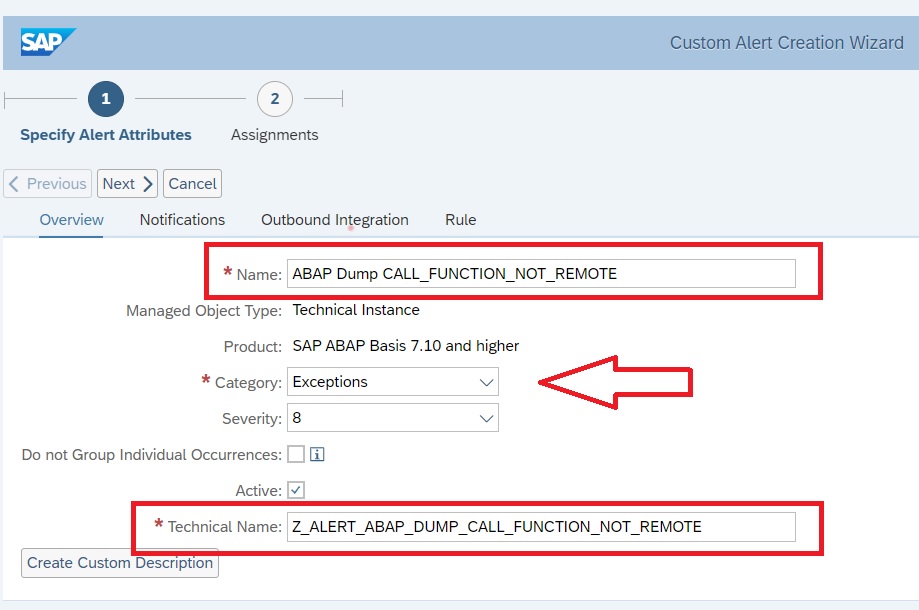

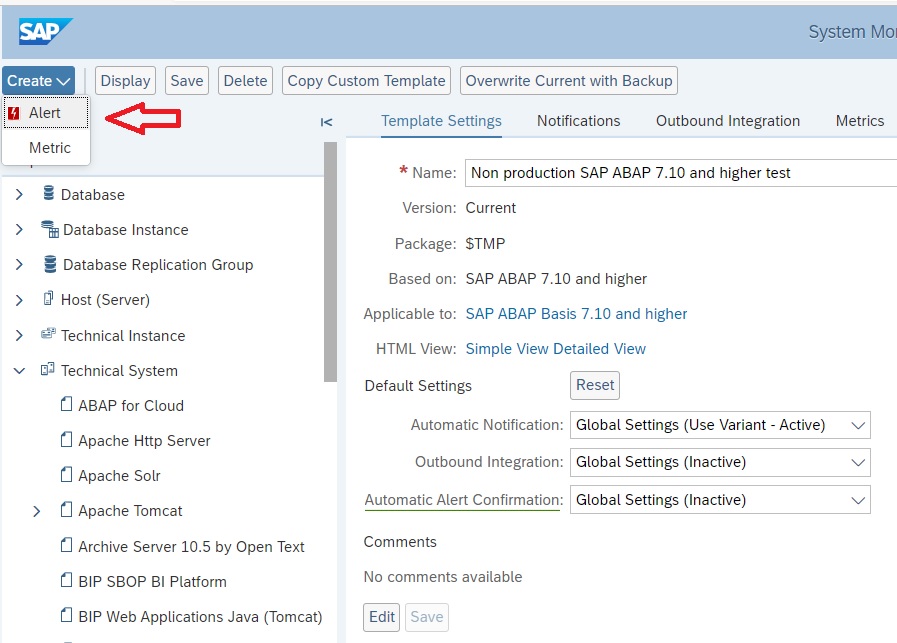

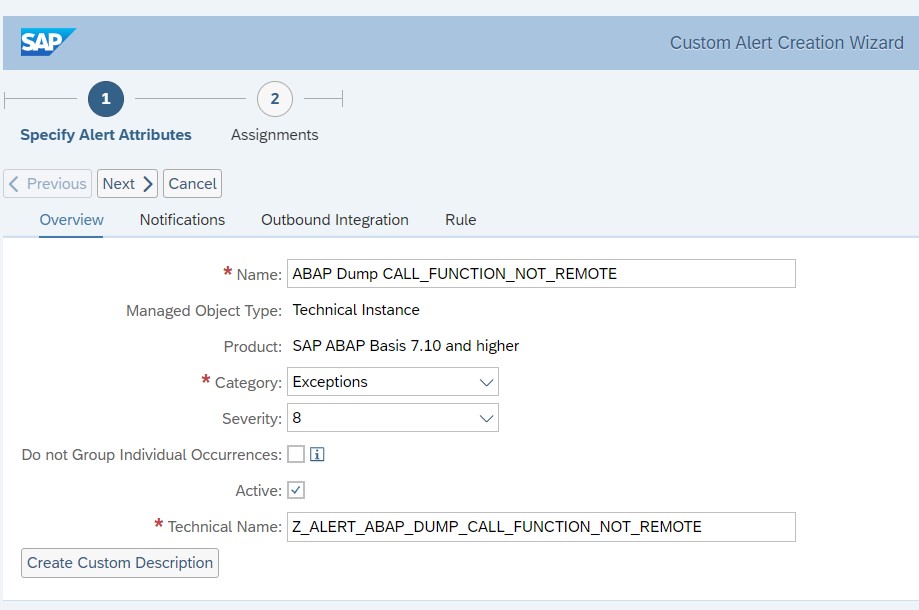

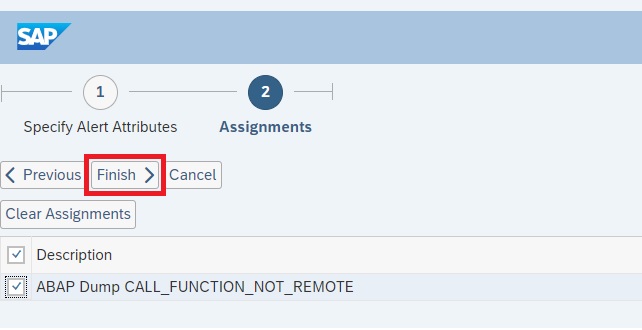

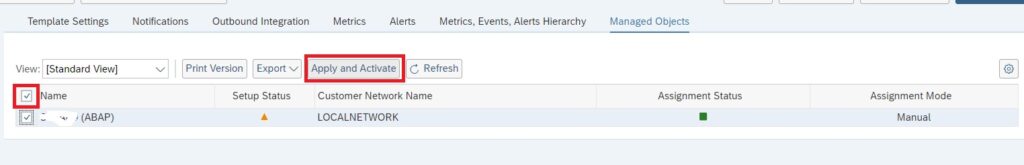

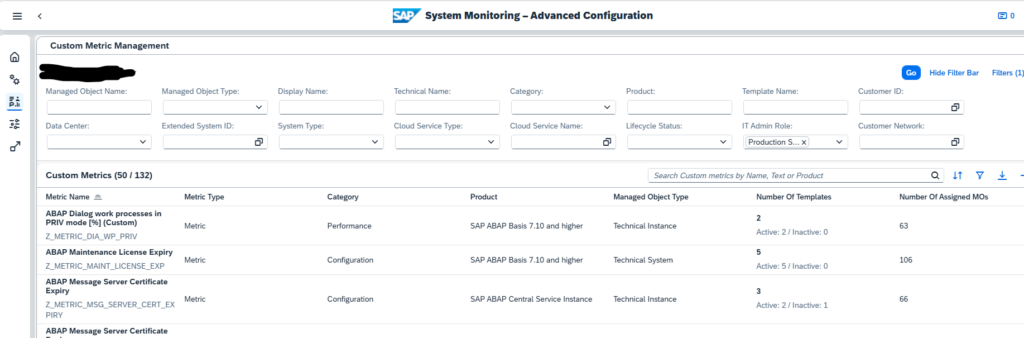

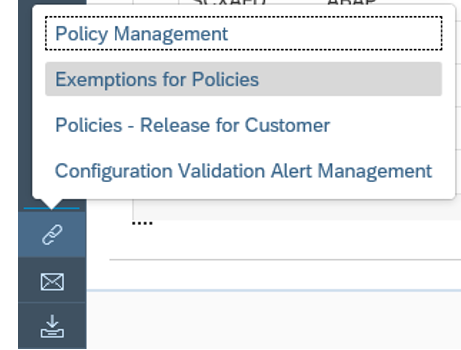

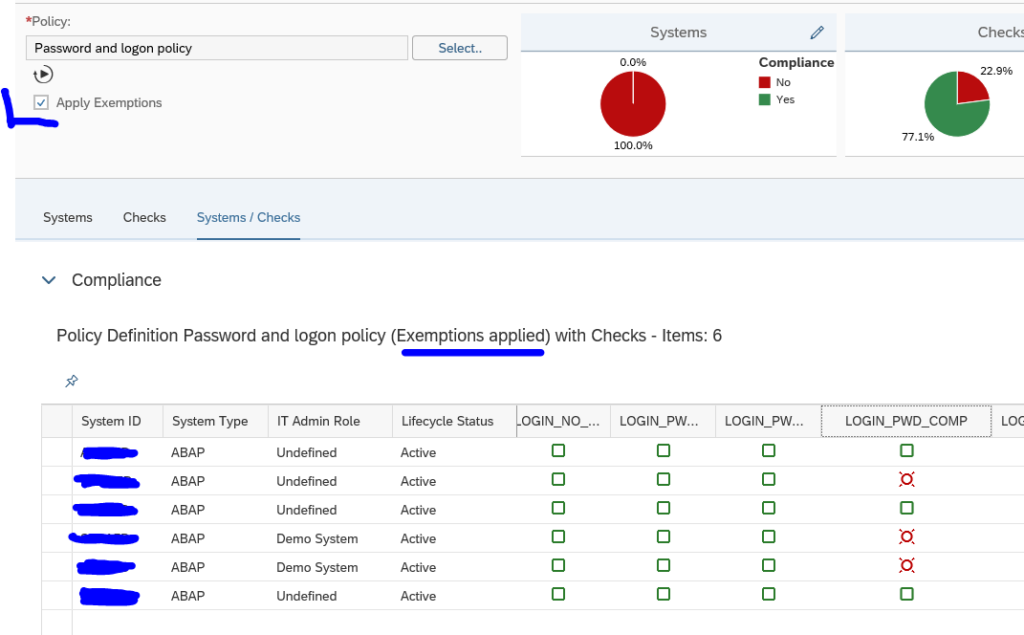

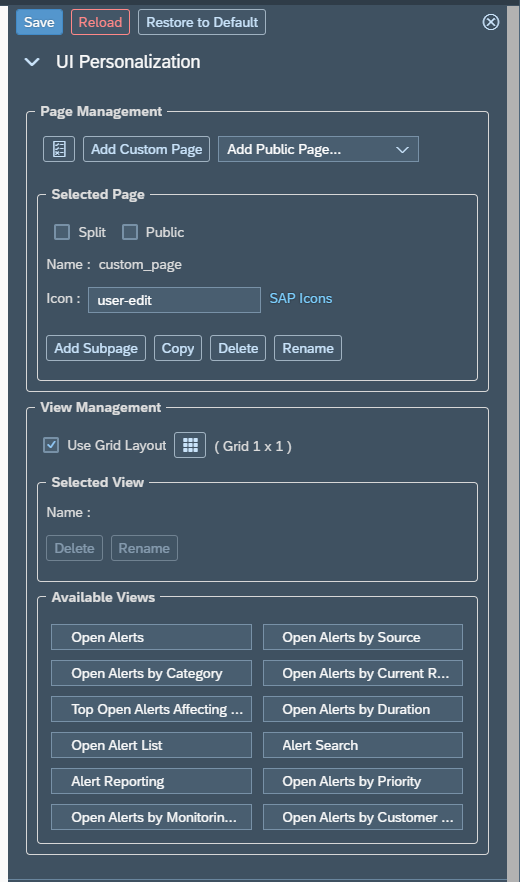

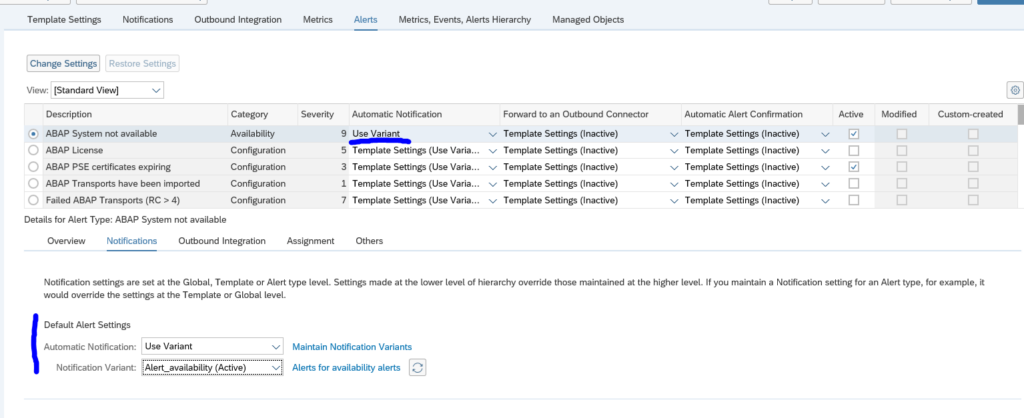

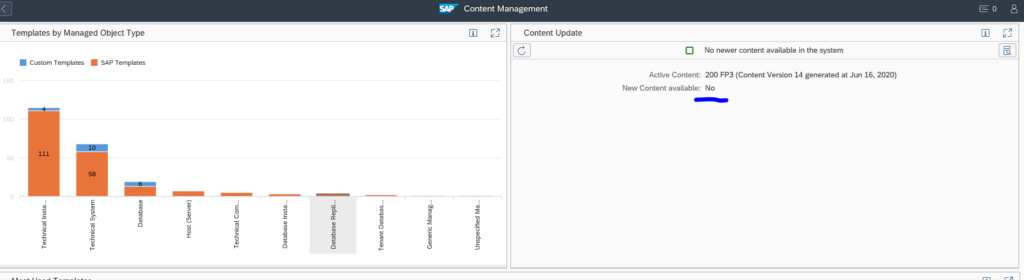

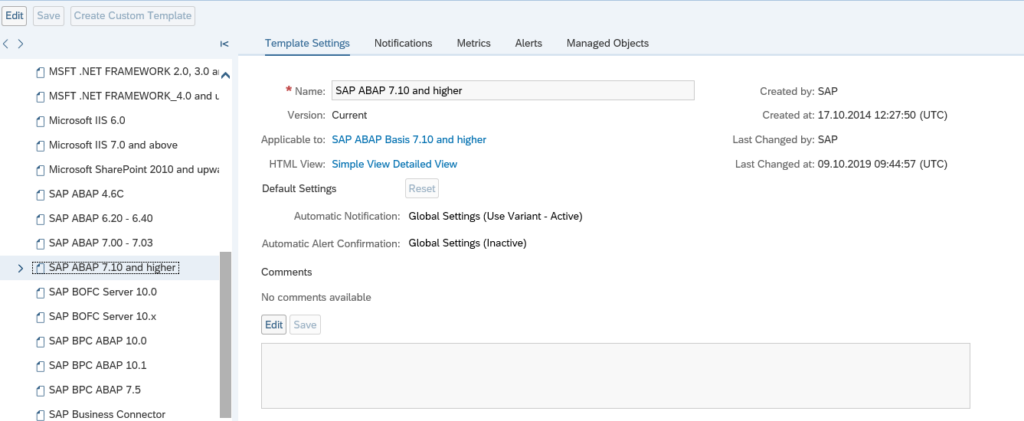

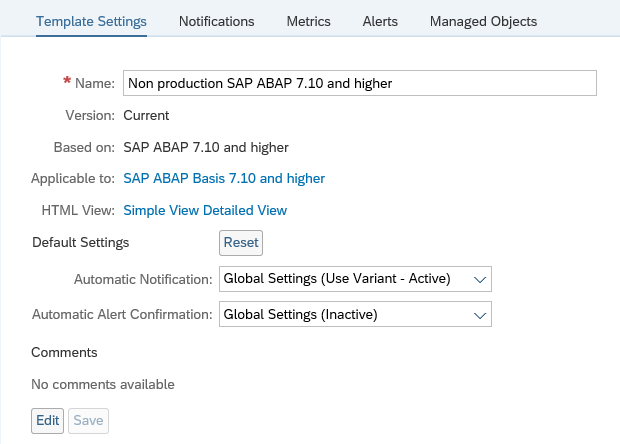

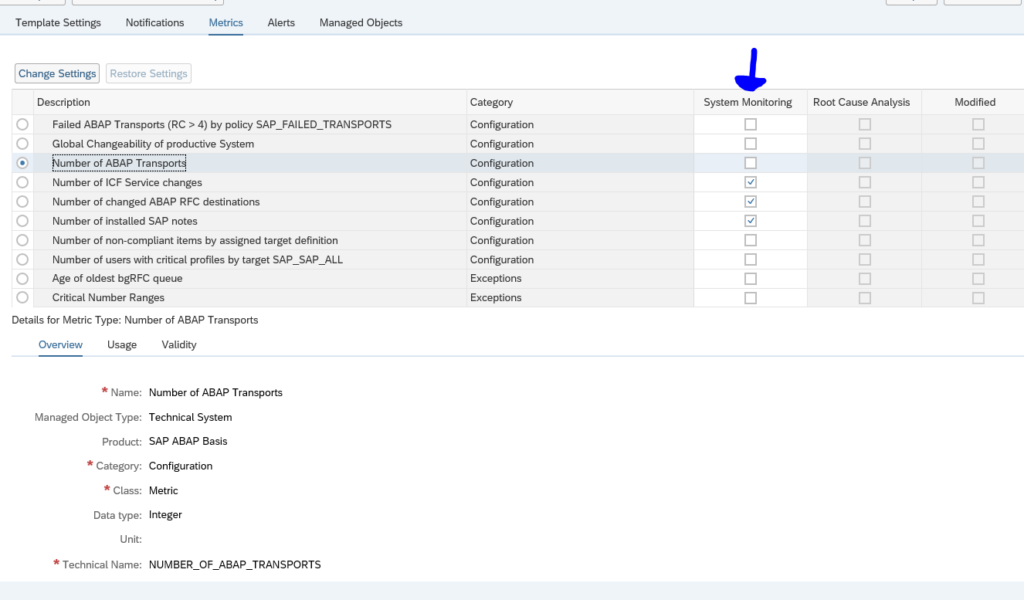

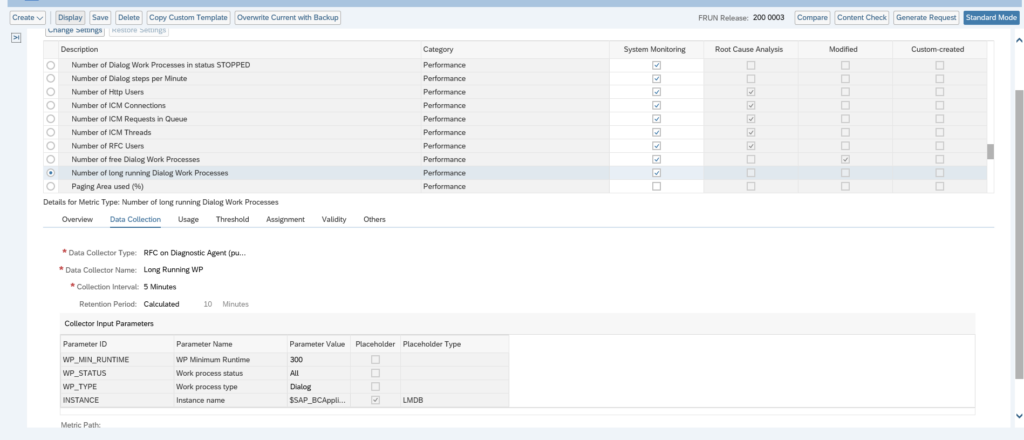

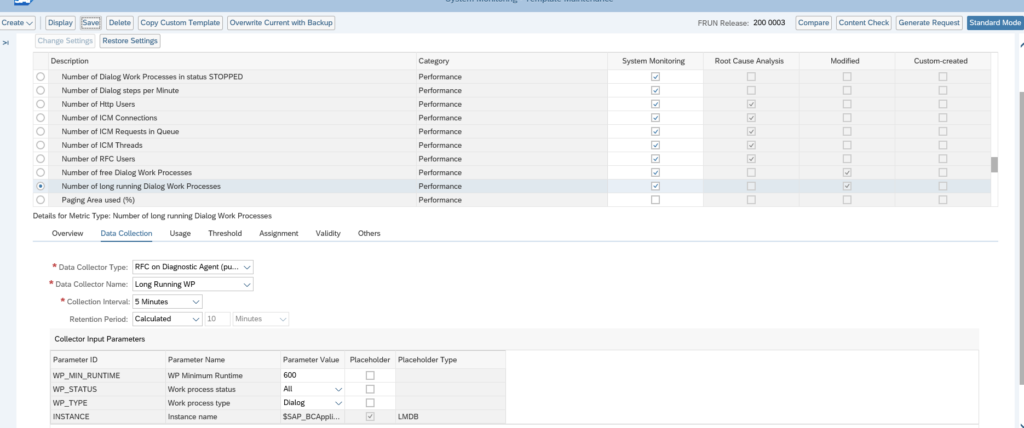

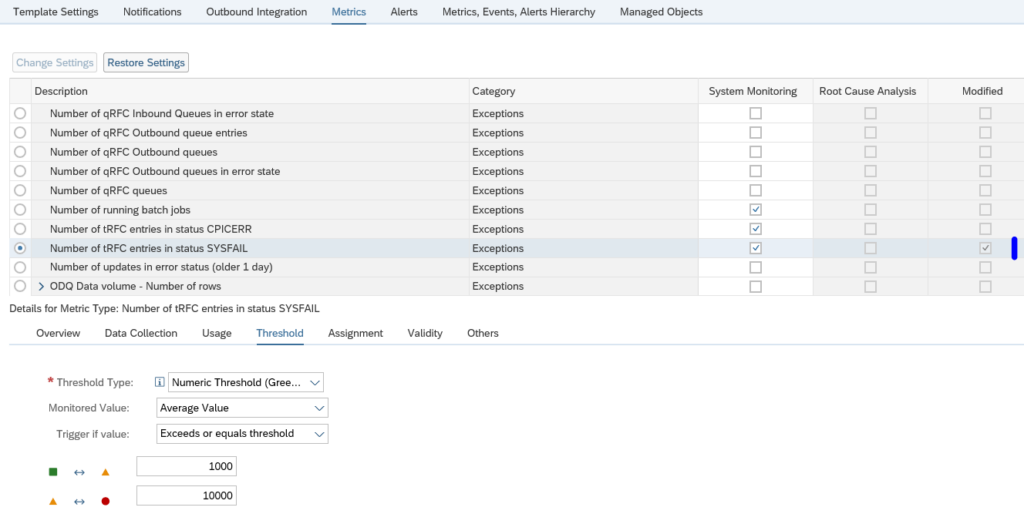

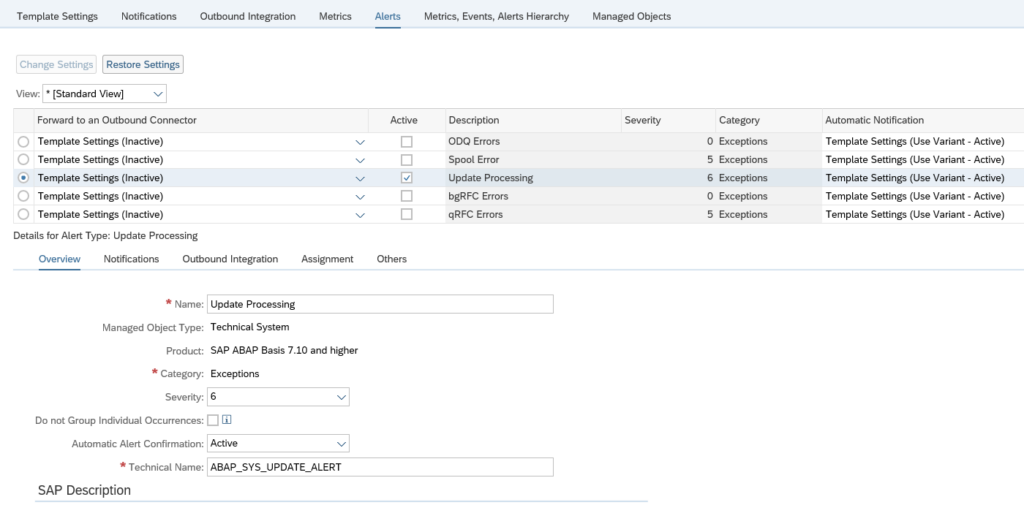

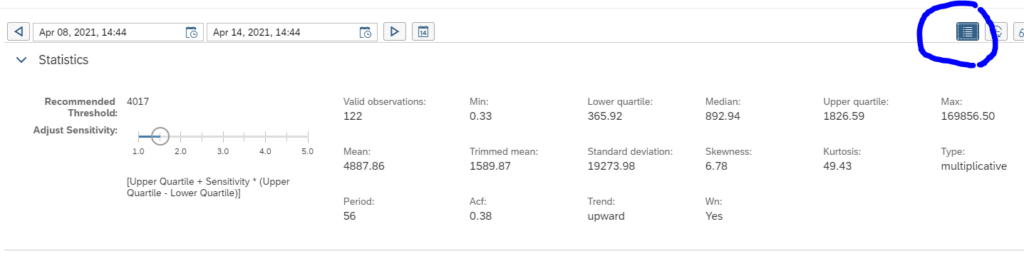

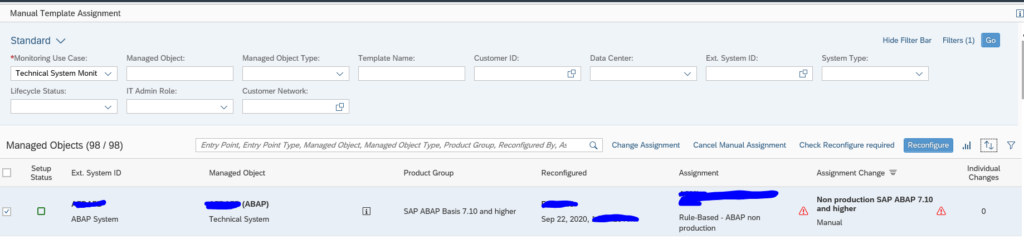

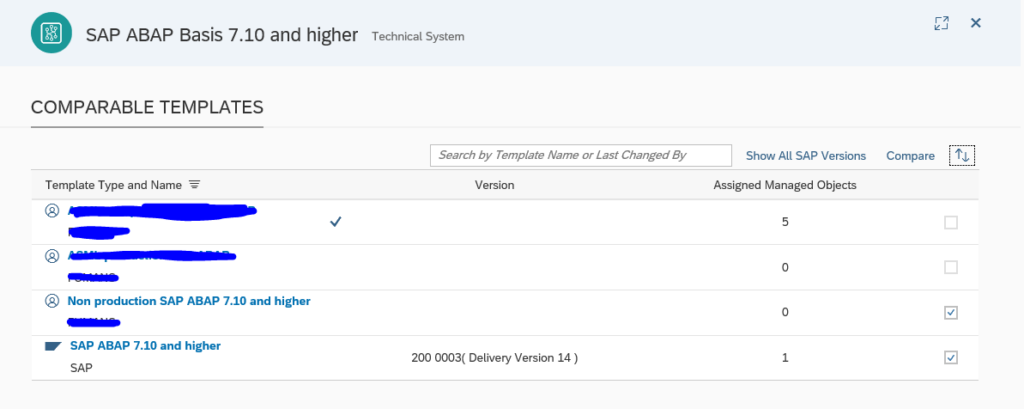

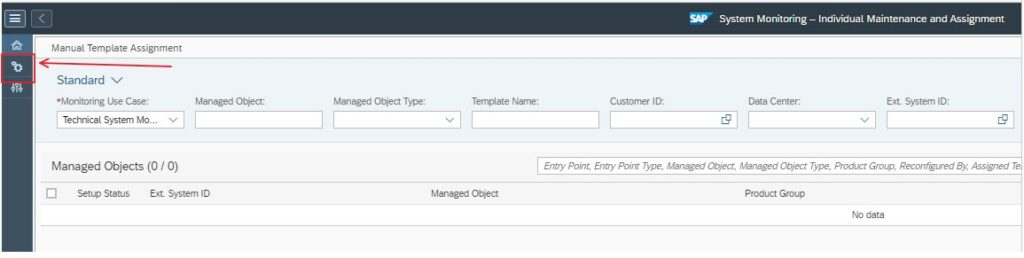

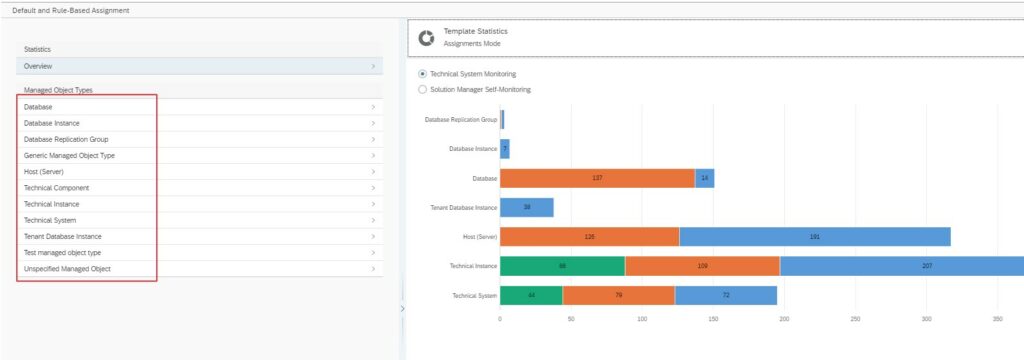

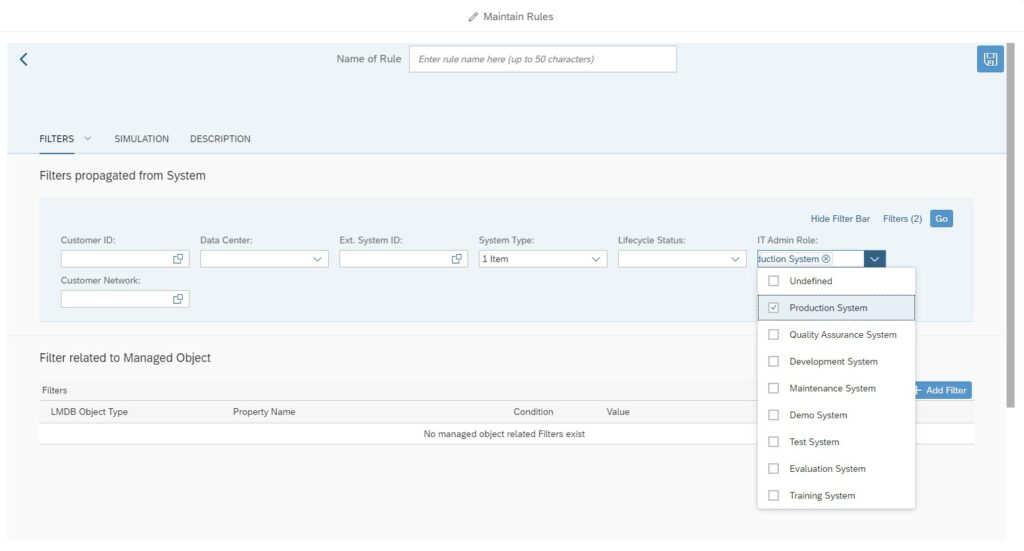

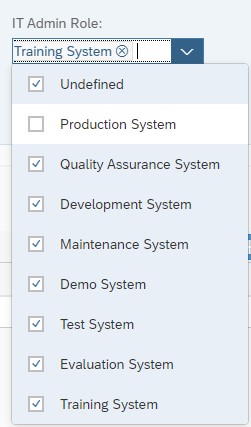

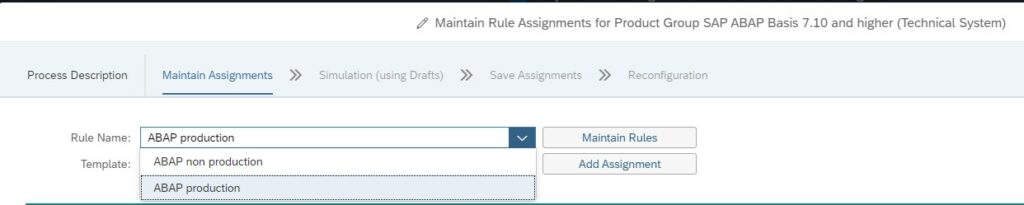

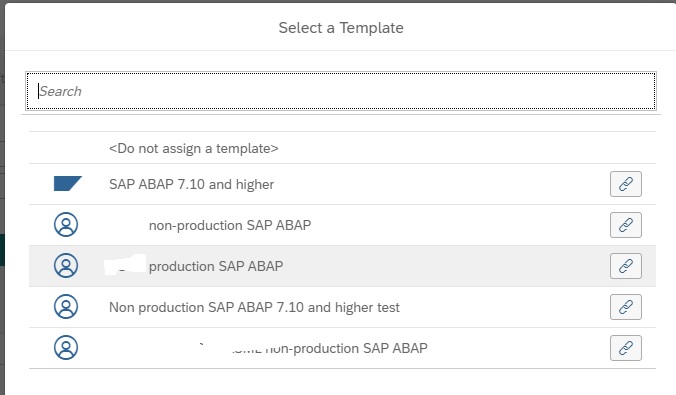

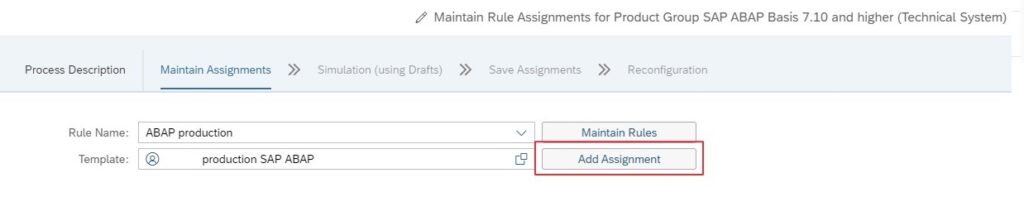

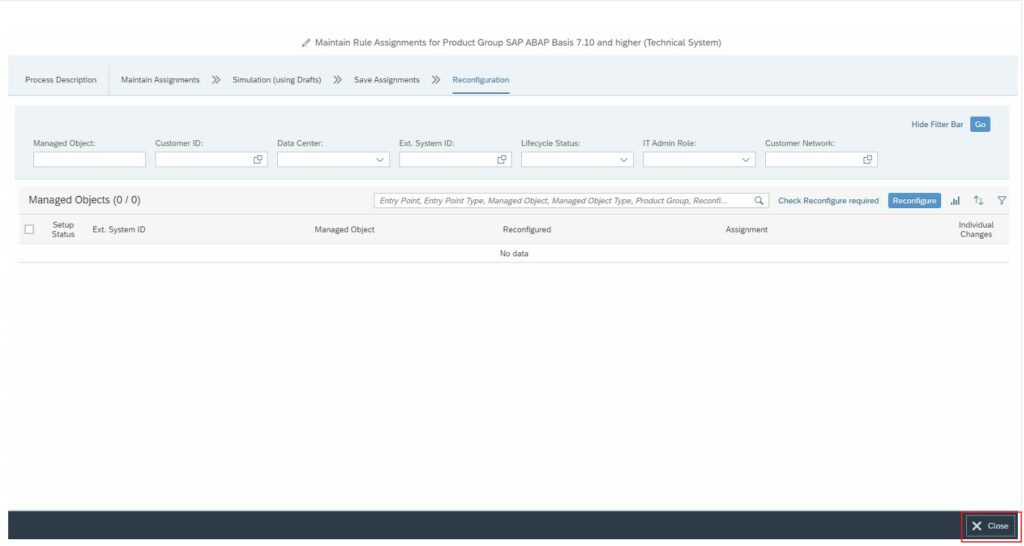

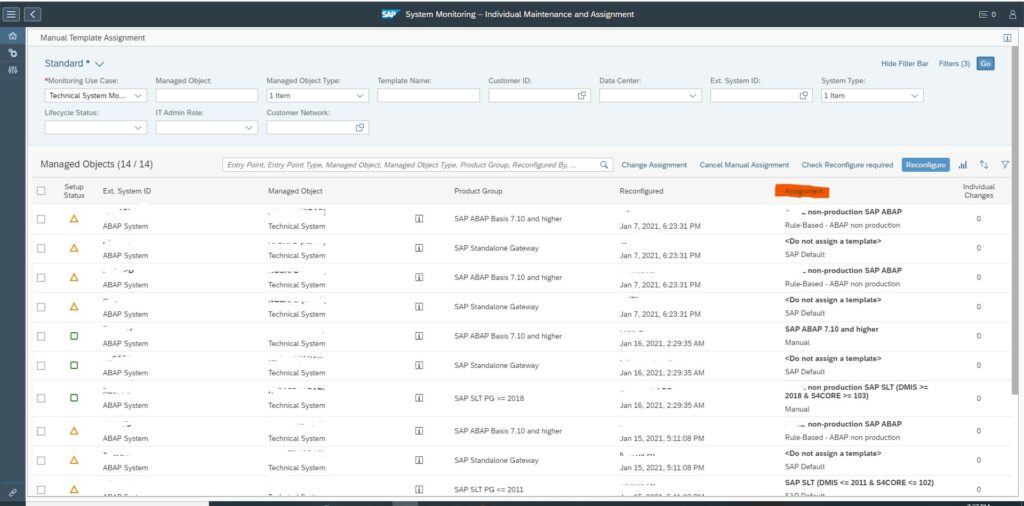

Alert reaction automation in template settings

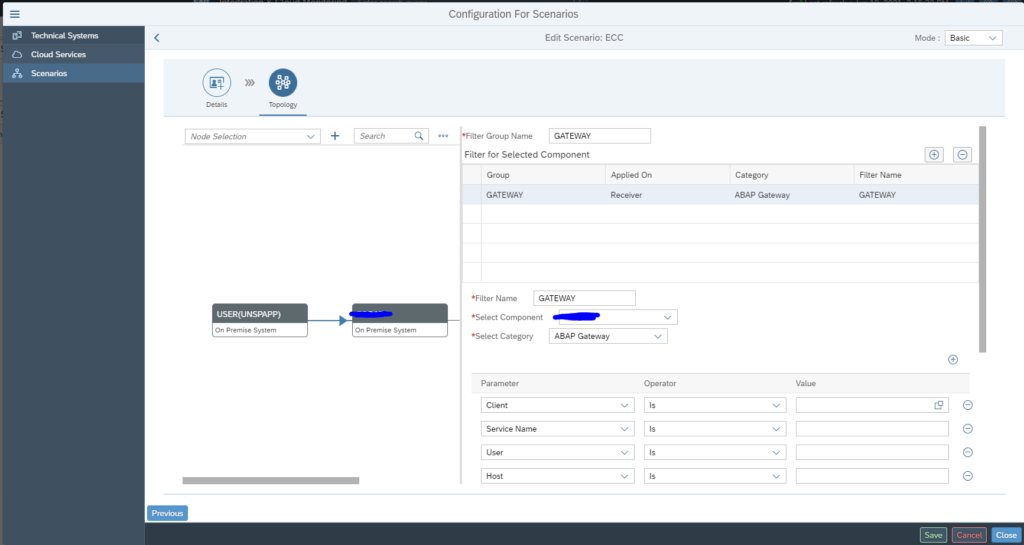

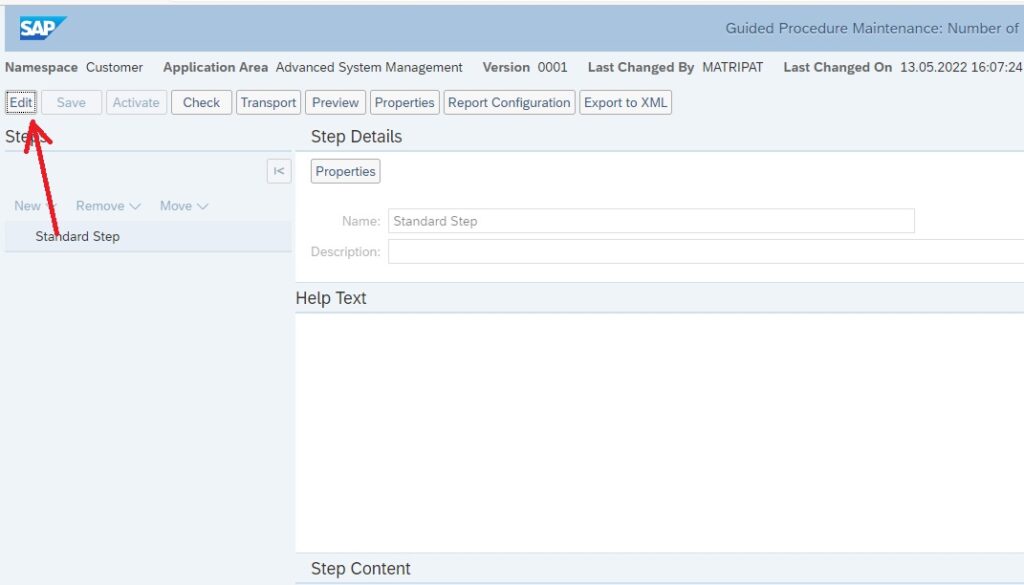

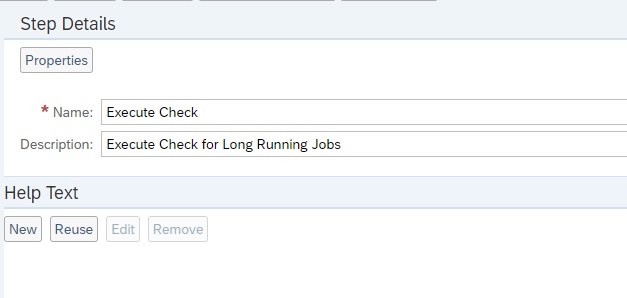

The alert reaction to ServiceNow can also be automated as an outbound integration. In template maintenance, switch to Expert mode.

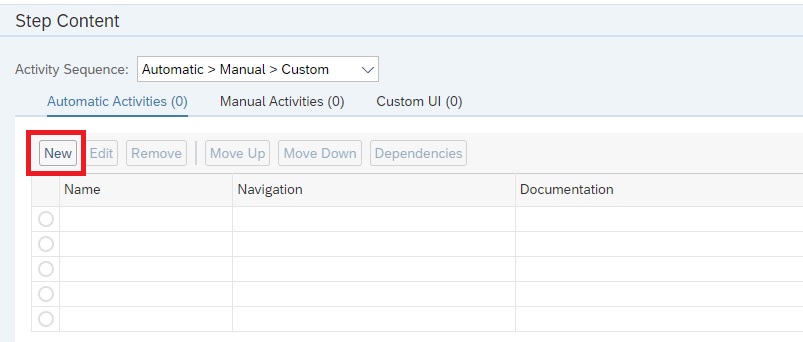

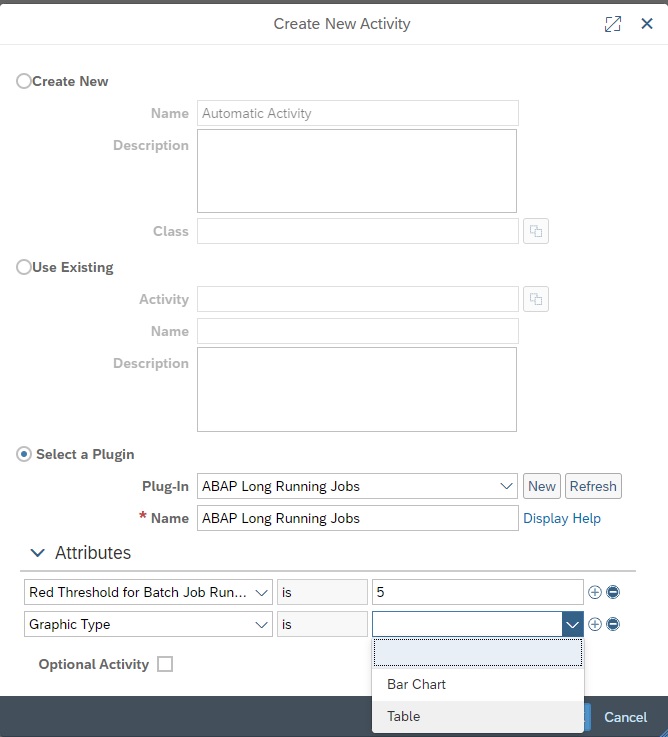

On the Alerts tab, configure the alert type with the Forward to and Outbound Connector settings:

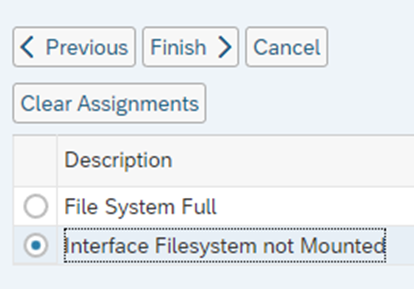

Assign the correct variant.

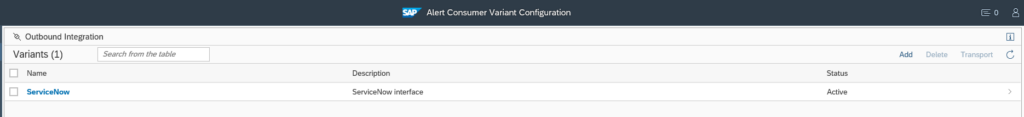

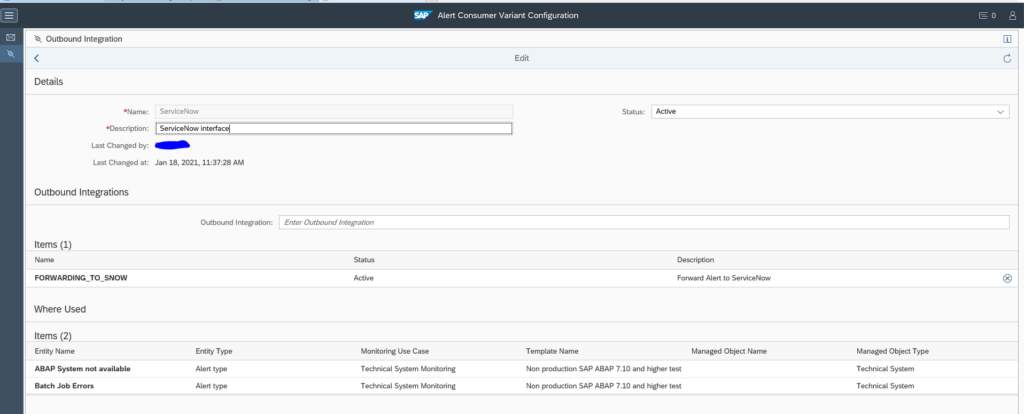

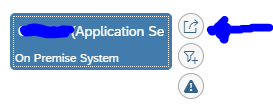

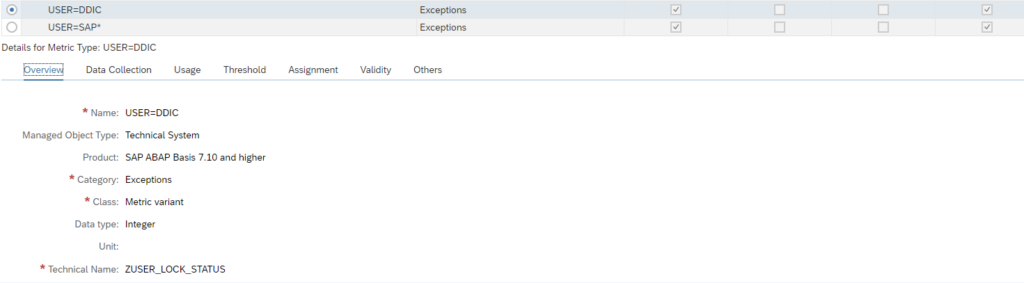

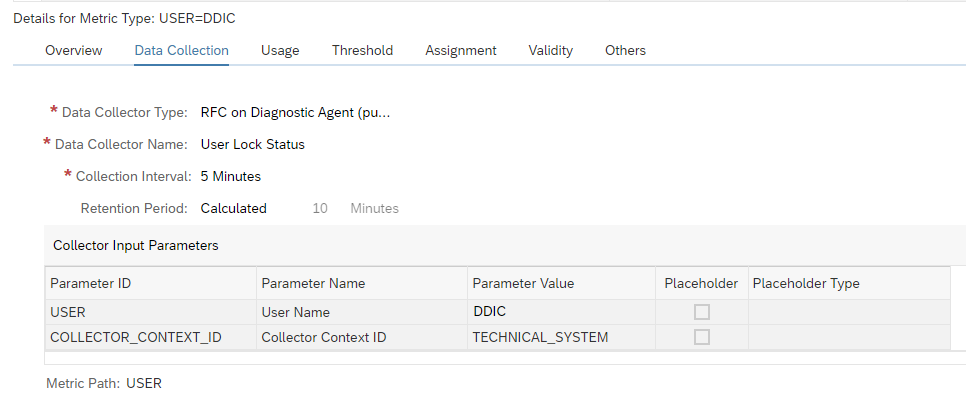

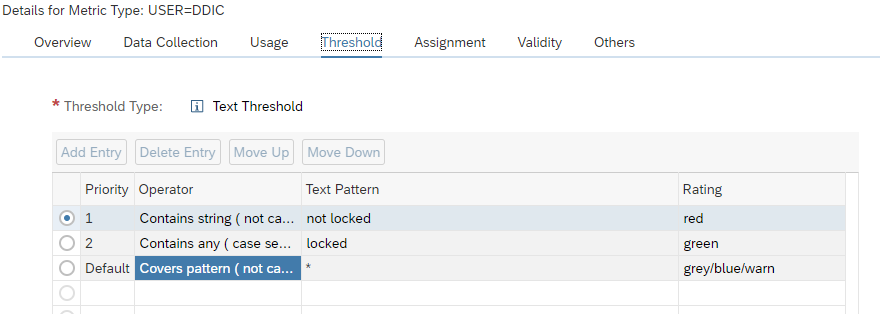

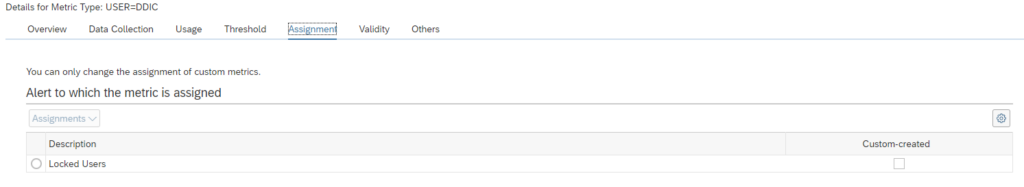

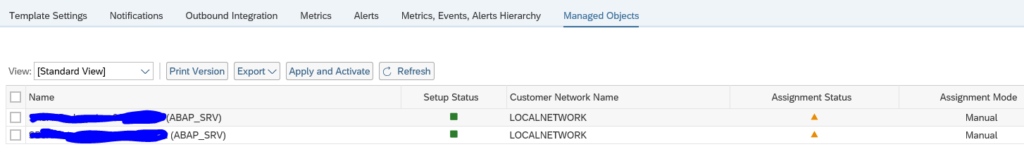

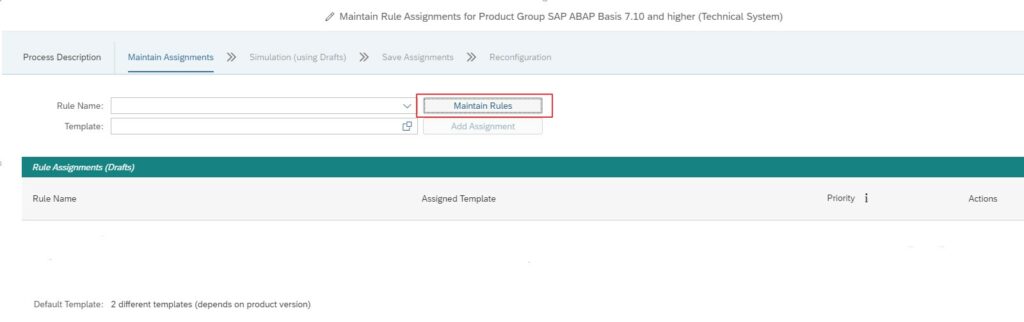

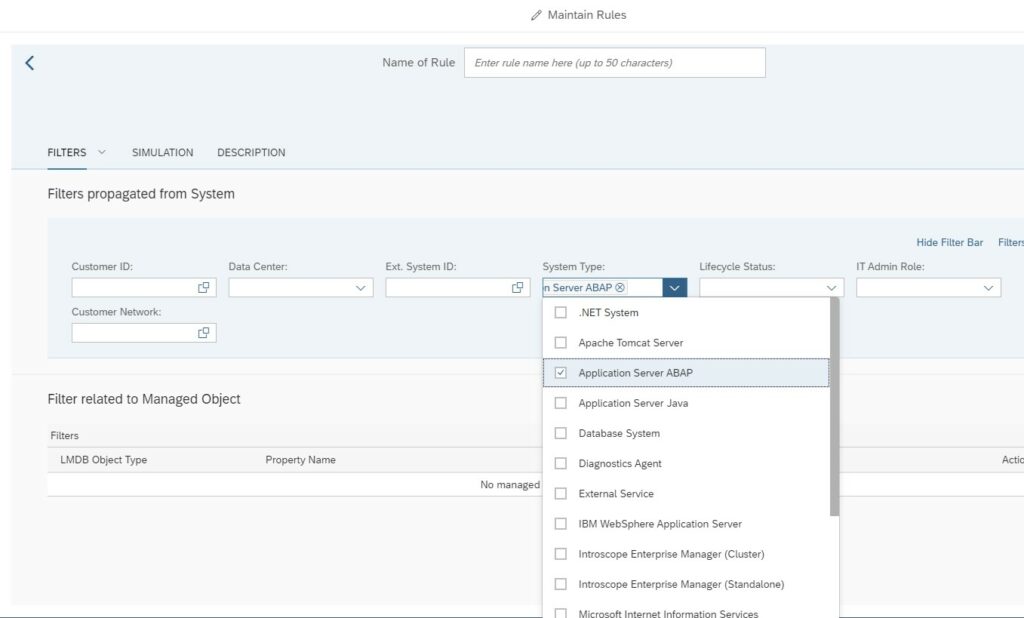

Clicking the variant opens the variant configuration screen:

Then select the outbound integration name to see the details:

The important part here is the where-used list. It shows which templates and template elements call the connector.

Whenever the alert is raised, the outbound integration connector to ServiceNow is called as well.

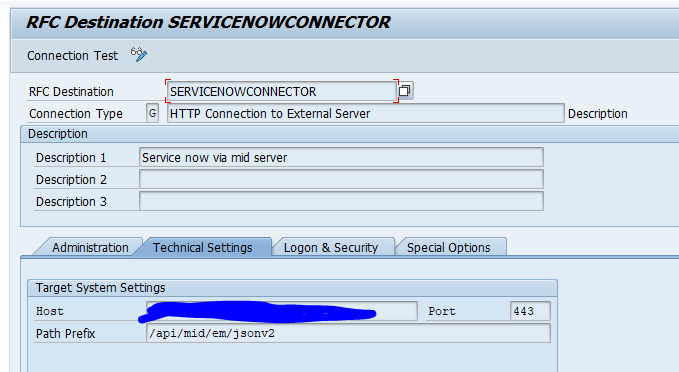

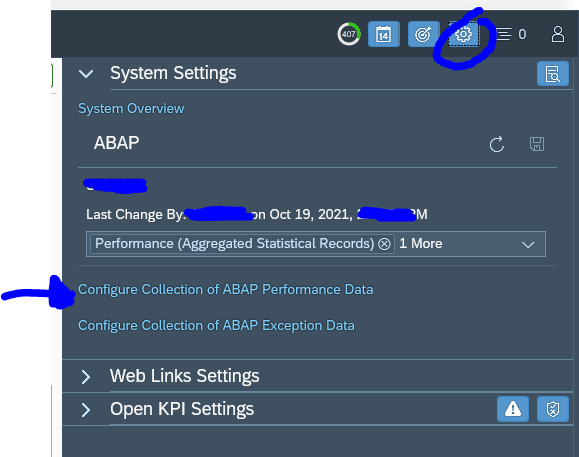

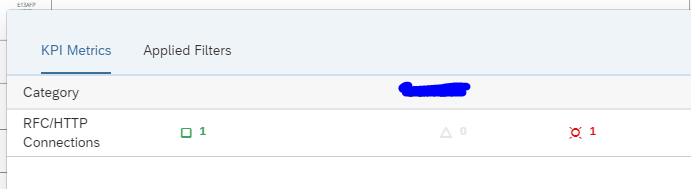

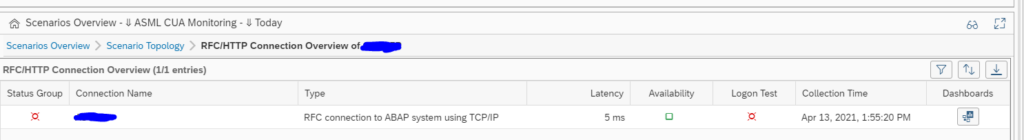

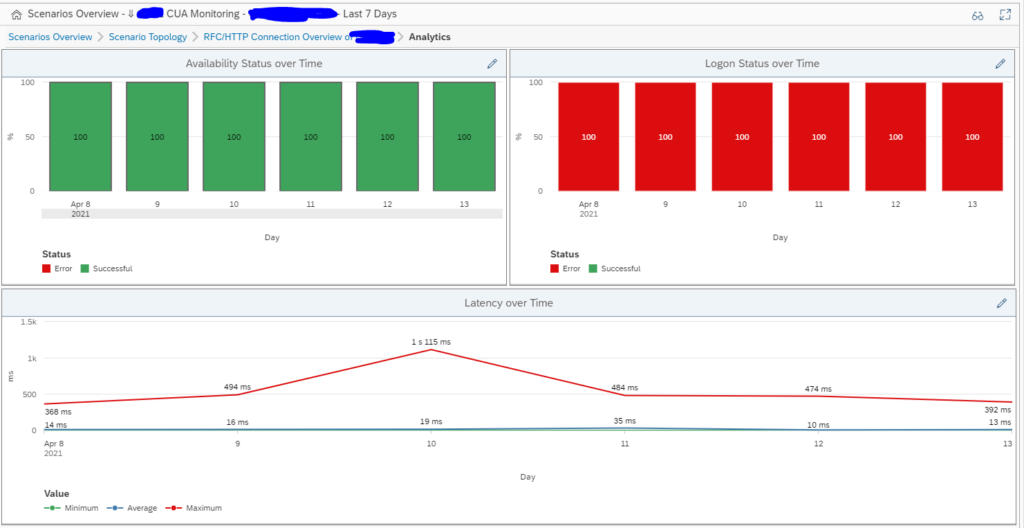

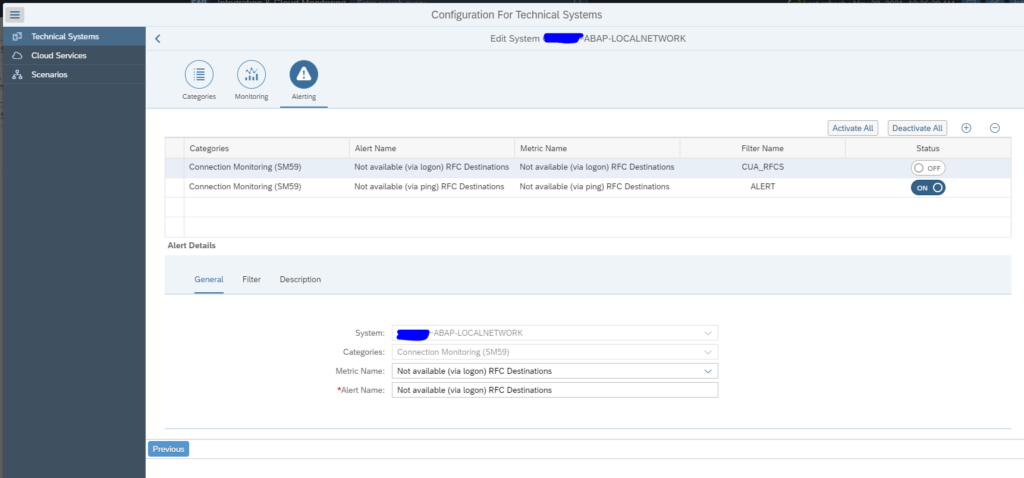

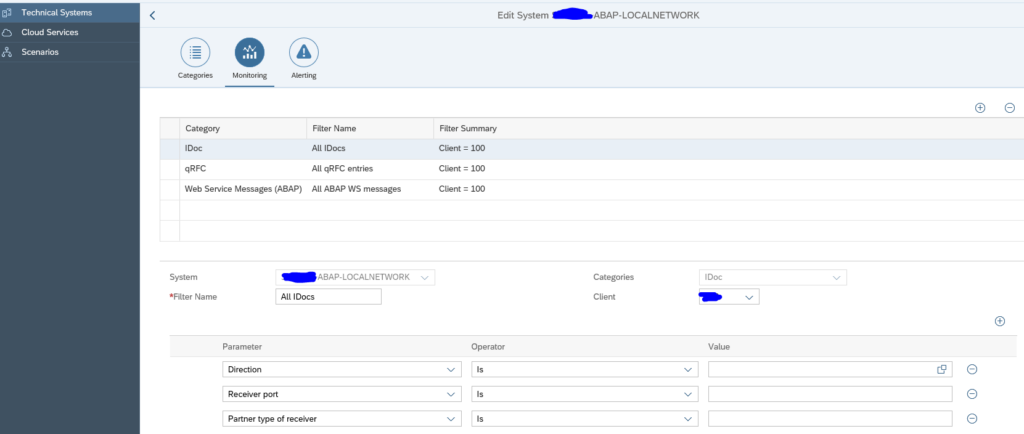

Set up the RFC destination

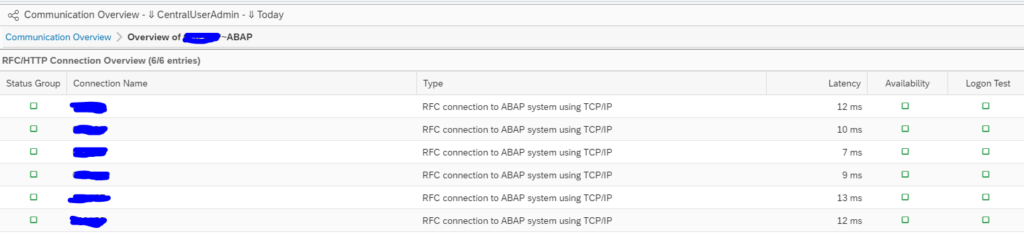

In SM59, set up the RFC destination to the MID server as a type H (HTTP) connection:

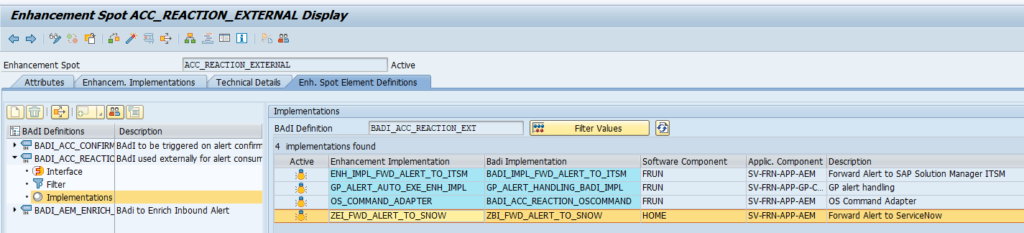

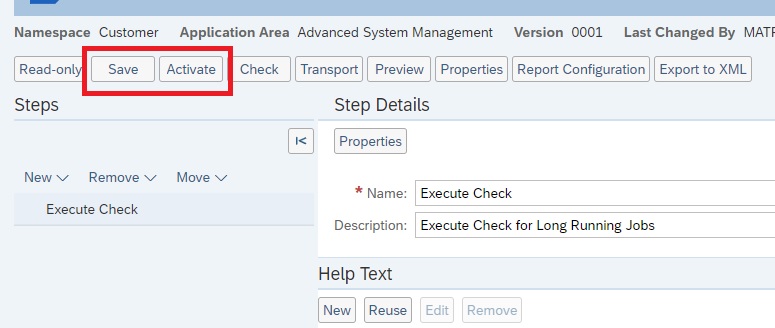
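You can also check the SM59 destination from ABAP without going through the BADI, using a small test report. The sketch below is not part of the original implementation. The report name and the default destination name ZSNOW_MID are hypothetical. The report sends a plain GET request, so depending on the MID server configuration the answer may be an error status such as 401 or 405. Any HTTP status still shows that the connection itself works.

```abap
REPORT zsnow_mid_connection_test.

" Hypothetical name of the type H destination created in SM59
PARAMETERS p_dest TYPE rfcdest DEFAULT 'ZSNOW_MID'.

START-OF-SELECTION.
  DATA lo_client TYPE REF TO if_http_client.
  DATA lv_code   TYPE i.
  DATA lv_reason TYPE string.
  DATA lv_error  TYPE string.

  " Create the HTTP client from the SM59 destination (host, port, path, logon data)
  cl_http_client=>create_by_destination(
    EXPORTING
      destination              = p_dest
    IMPORTING
      client                   = lo_client
    EXCEPTIONS
      argument_not_found       = 1
      destination_not_found    = 2
      destination_no_authority = 3
      plugin_not_active        = 4
      internal_error           = 5
      OTHERS                   = 6 ).
  IF sy-subrc <> 0.
    WRITE: / 'Destination cannot be used, SY-SUBRC =', sy-subrc.
    RETURN.
  ENDIF.

  " Plain GET request: any HTTP answer proves that the connection works
  lo_client->request->set_method( if_http_request=>co_request_method_get ).
  lo_client->send( EXCEPTIONS OTHERS = 1 ).
  IF sy-subrc = 0.
    lo_client->receive( EXCEPTIONS OTHERS = 1 ).
  ENDIF.

  IF sy-subrc <> 0.
    " Readable error text, e.g. for network or SSL problems
    lo_client->get_last_error( IMPORTING message = lv_error ).
    WRITE: / 'Communication error:', lv_error.
  ELSE.
    lo_client->response->get_status( IMPORTING code = lv_code reason = lv_reason ).
    WRITE: / 'HTTP status:', lv_code, lv_reason.
  ENDIF.

  lo_client->close( EXCEPTIONS OTHERS = 1 ).
```

The SM59 connection test gives a similar first impression. The report is closer to what the BADI does at runtime, because it uses the same CL_HTTP_CLIENT=>CREATE_BY_DESTINATION call as the sending code.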

Activation of the enhancement spot and BADI

The details of the enhancement spot and BADI implementation are in the SAP document published on the SAP Focused Run Expert Portal.

Use transaction SE18 or SE80 to activate enhancement spot ACC_REACTION_EXTERNAL and then activate BADI BADI_ACC_REACTION_EXT.

The result looks as follows:

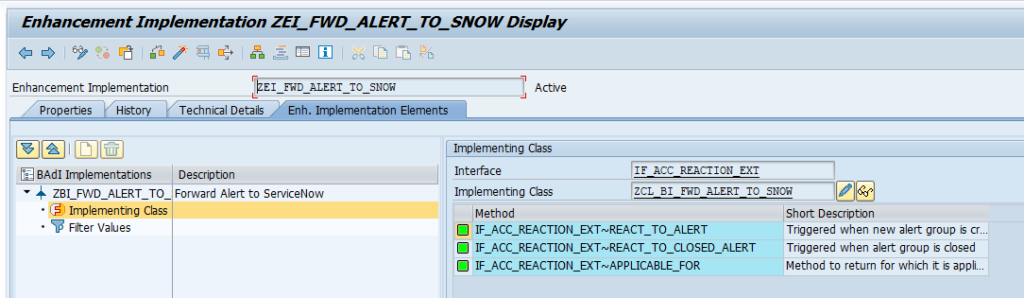

Double-click the implementation:

Double-click the REACT_TO_ALERT interface method to go to the code. Our implementation looks as follows:

```abap
METHOD if_acc_reaction_ext~react_to_alert.

  " Only react to objects of type ALERT
  IF is_alert-ref->get_type( ) <> 'ALERT'.
    RETURN.
  ENDIF.

  " Init. the application log
  me->zgo_logger = NEW zcl_snow_bi_logger( ).

  " Add info message to notify reaction was triggered
  me->zgo_logger->bal_log_add_message(
    EXPORTING
      ziv_msgty = zif_snow_constants=>zgc_message_types-info
      ziv_msgno = '002' ).

  " Send message to ServiceNow
  NEW zcl_snow_bi_common( )->zif_snow_bi_common~send_message_to_snow(
    EXPORTING
      zii_logger           = me->zgo_logger
      zii_snow_message     = NEW zcl_snow_event_message( zii_alert = is_alert-ref )
      ziv_resolution_state = zif_snow_constants=>zgc_resolution_state-new ).

  " Save the application log
  me->zgo_logger->bal_log_save( ).

ENDMETHOD.
```

What do we do in the code:

- We only react to objects of type ALERT.

- We write an entry to the application log, so we can check it later in SLG1.

- We call the actual interface, which we implemented in class ZCL_SNOW_BI_COMMON.

The implementation code

The method ZIF_SNOW_BI_COMMON~SEND_MESSAGE_TO_SNOW, which is called from the BADI, looks as follows:

```abap
METHOD zif_snow_bi_common~send_message_to_snow.

  " Retrieve JSON body for the request and write it to the application log
  DATA(zlv_snow_event_json) = zii_snow_message->get_json( ziv_resolution_state ).
  me->add_json_to_bal_log( EXPORTING zii_logger = zii_logger
                                     ziv_json   = zlv_snow_event_json ).

  TRY.
      " Execute HTTP POST, send data to the MID server API
      DATA(zlv_http_status_code) = NEW zcl_snow_mid_api( )->post( ziv_event_messages = zlv_snow_event_json ).

      " Add HTTP response code to the log: 2xx = success, anything else = error
      IF zlv_http_status_code-code >= 200 AND zlv_http_status_code-code < 300.
        DATA(zlv_msgty) = zif_snow_constants=>zgc_message_types-success.
      ELSE.
        zlv_msgty = zif_snow_constants=>zgc_message_types-error.
      ENDIF.

      zii_logger->bal_log_add_message(
        EXPORTING
          ziv_msgty = zlv_msgty
          ziv_msgno = '001'
          ziv_msgv1 = |{ zlv_http_status_code-code } { zlv_http_status_code-reason }| ).

    CATCH zcx_snow_mid_api INTO DATA(zlo_exception).
      " Add exception to the application log
      " (attr1..attr4 carry the SY-MSGV values, see RAISE_EXCEPTION_FOR_SYS_MSG)
      zii_logger->bal_log_add_message(
        EXPORTING
          ziv_msgid = zlo_exception->if_t100_message~t100key-msgid
          ziv_msgno = zlo_exception->if_t100_message~t100key-msgno
          ziv_msgv1 = CONV #( zlo_exception->if_t100_message~t100key-attr1 )
          ziv_msgv2 = CONV #( zlo_exception->if_t100_message~t100key-attr2 )
          ziv_msgv3 = CONV #( zlo_exception->if_t100_message~t100key-attr3 )
          ziv_msgv4 = CONV #( zlo_exception->if_t100_message~t100key-attr4 ) ).
  ENDTRY.

ENDMETHOD.
```

What happens here:

- The JSON body is built from the event data definition (details follow below).

- The JSON body is written to the application log.

- The actual call is performed by class ZCL_SNOW_MID_API (details follow below).

- The HTTP status code is checked: any 2xx code (200 = OK) counts as success.

- In case of issues, the error or exception is written to the application log.

The code for the message content

For the message content, we first define the message event type:

```abap
INTERFACE zif_snow_message
  PUBLIC.

  TYPES:
    BEGIN OF zgts_event,
      "! Source
      source           TYPE string,
      "! Name of the object
      node             TYPE string,
      "! Type of object, host, instance
      type             TYPE string,
      "! Severity
      severity         TYPE string,
      "! Date/Time (YYYY-MM-DD HH:MM:SS)
      time_of_event    TYPE string,
      "! Alert description/name
      description      TYPE string,
      "! SAP System ID
      event_class      TYPE string,
      "! Unique ID
      message_key      TYPE string,
      "! Alert state
      resolution_state TYPE string,
      "! Resource
      resource         TYPE string,
    END OF zgts_event.

  METHODS get_json
    IMPORTING ziv_resoultion_state TYPE string
    RETURNING VALUE(zrv_json)      TYPE /ui2/cl_json=>json.

ENDINTERFACE.
```

This type is used in the code that builds the actual message:

```abap
METHOD zif_snow_message~get_json.

  DATA zlv_events TYPE /ui2/cl_json=>json.

  " Get current time stamp
  GET TIME STAMP FIELD DATA(zlv_time_stamp_now).

  " Serialize one event; field names are written in lower case
  DATA(zlv_event_json) = /ui2/cl_json=>serialize(
    EXPORTING
      data        = VALUE zif_snow_message~zgts_event(
                      source           = |{ syst-sysid } - FRUN |
                      node             = zgi_alert->get_managed_object_name( )
                      type             = zgi_alert->get_managed_object_type( )
                      severity         = me->convert_severity( zgi_alert->get_severity( ) )
                      time_of_event    = me->convert_alert_timestamp( ziv_timestamp = zlv_time_stamp_now )
                      " Prefer the custom description, fall back to the SAP description
                      description      = COND #( LET custom_description = me->remove_html_tags( zgi_alert->get_custom_description( ) ) IN
                                                 WHEN strlen( custom_description ) > 0 THEN custom_description
                                                 ELSE me->remove_html_tags( zgi_alert->get_sap_description( ) ) )
                      " First 3 characters of the managed object name (SAP system ID)
                      event_class      = substring( val = zgi_alert->get_managed_object_name( ) off = 0 len = 3 )
                      message_key      = zgi_alert->get_type_id( )
                      resolution_state = ziv_resoultion_state
                      resource         = zgi_alert->get_name( ) )
      pretty_name = /ui2/cl_json=>pretty_mode-low_case ).

  " Collect the event(s) ...
  IF zlv_events IS INITIAL.
    zlv_events = zlv_event_json.
  ELSE.
    zlv_events = zlv_events && ',' && zlv_event_json.
  ENDIF.

  " ... and wrap them in a "records" array
  IF zlv_events IS NOT INITIAL.
    zrv_json = '{ "records": [' && zlv_events && '] }'.
  ENDIF.

ENDMETHOD.
```
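Building the `records` envelope by string concatenation works for a single alert. An alternative, which is not the code used in this blog, is to model the envelope as an ABAP structure. /ui2/cl_json=>serialize then produces the whole `{"records":[...]}` body, including the commas between several events. The structure below is a hypothetical subset of ZGTS_EVENT, and the values are illustrative only.

```abap
REPORT zsnow_json_payload_demo.

" Hypothetical subset of ZIF_SNOW_MESSAGE=>ZGTS_EVENT, plus a wrapper structure
" whose only component is the RECORDS table
TYPES: BEGIN OF ty_event,
         source           TYPE string,
         node             TYPE string,
         type             TYPE string,
         severity         TYPE string,
         description      TYPE string,
         message_key      TYPE string,
         resolution_state TYPE string,
       END OF ty_event,
       tt_events TYPE STANDARD TABLE OF ty_event WITH EMPTY KEY,
       BEGIN OF ty_payload,
         records TYPE tt_events,
       END OF ty_payload.

START-OF-SELECTION.
  " Illustrative values; in the BADI they come from the alert object (IF_ACC_MEA)
  DATA(ls_payload) = VALUE ty_payload(
    records = VALUE #(
      ( source = `FRN - FRUN` node = `FRN` type = `TECHNICAL_SYSTEM`
        severity = `2` description = `Demo alert` message_key = `DEMO_ALERT`
        resolution_state = `New` )
      ( source = `FRN - FRUN` node = `FRN` type = `TECHNICAL_SYSTEM`
        severity = `4` description = `Second demo alert` message_key = `DEMO_ALERT_2`
        resolution_state = `New` ) ) ).

  " One call serializes both events, including the separating comma
  DATA(lv_json) = /ui2/cl_json=>serialize(
    data        = ls_payload
    pretty_name = /ui2/cl_json=>pretty_mode-low_case ).

  WRITE / lv_json.
```

With this approach, the serializer also takes care of escaping special characters in the descriptions. The envelope name `records` is taken from the original GET_JSON method.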

The simple methods used above look as follows:

```abap
METHOD if_acc_mea~get_managed_object_name.
  rv_managed_object_name = ms_mea-context_name.
ENDMETHOD.

METHOD if_acc_mea~get_managed_object_type.
  rv_managed_object_type = ms_mea-context_type.
ENDMETHOD.

METHOD if_acc_mea~get_severity.
  rv_severity = ms_mea-severity.
ENDMETHOD.

METHOD convert_alert_timestamp.
  " Convert the timestamp to a UTC date and time ...
  CONVERT TIME STAMP ziv_timestamp TIME ZONE 'UTC'
    INTO DATE DATA(zlv_date) TIME DATA(zlv_time)
    DAYLIGHT SAVING TIME DATA(zlv_dls_time).
  " ... and format it as YYYY-MM-DD HH:MM:SS
  zrv_date_time = |{ zlv_date+0(4) }-{ zlv_date+4(2) }-{ zlv_date+6(2) } { zlv_time+0(2) }:{ zlv_time+2(2) }:{ zlv_time+4(2) }|.
ENDMETHOD.

METHOD if_acc_mea~get_name.
  rv_name = ms_mea-name.
ENDMETHOD.

METHOD if_acc_mea~get_type_id.
  rv_type_id = ms_mea-type_id.
ENDMETHOD.
```
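You can check the date formatting of CONVERT_ALERT_TIMESTAMP on its own. The sketch below runs the same CONVERT TIME STAMP statement and string template on a fixed, made-up time stamp instead of GET TIME STAMP, so the result is predictable. The report name is hypothetical.

```abap
REPORT zsnow_timestamp_demo.

START-OF-SELECTION.
  " Fixed example value (2024-03-05 14:07:09 UTC) instead of GET TIME STAMP
  DATA lv_timestamp TYPE timestamp VALUE '20240305140709'.
  DATA lv_date      TYPE d.
  DATA lv_time      TYPE t.

  " Same conversion as in CONVERT_ALERT_TIMESTAMP: UTC date and time ...
  CONVERT TIME STAMP lv_timestamp TIME ZONE 'UTC' INTO DATE lv_date TIME lv_time.

  " ... formatted as YYYY-MM-DD HH:MM:SS
  DATA(lv_date_time) = |{ lv_date+0(4) }-{ lv_date+4(2) }-{ lv_date+6(2) } | &&
                       |{ lv_time+0(2) }:{ lv_time+2(2) }:{ lv_time+4(2) }|.

  WRITE / lv_date_time.
```

The result is `2024-03-05 14:07:09`. This is the YYYY-MM-DD HH:MM:SS format documented for TIME_OF_EVENT in ZIF_SNOW_MESSAGE.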

Helper method to remove HTML tags:

```abap
METHOD remove_html_tags.

  IF ziv_description IS INITIAL.
    RETURN.
  ENDIF.

  DATA(zlv_description) = ziv_description.
  DATA(zlv_newline) = cl_abap_char_utilities=>newline.

  " Remove formatting tags; the end of a paragraph or list item becomes a line break
  REPLACE ALL OCCURRENCES OF '<h2>' IN zlv_description WITH '' IGNORING CASE.
  REPLACE ALL OCCURRENCES OF '</h2>' IN zlv_description WITH '' IGNORING CASE.
  REPLACE ALL OCCURRENCES OF '<strong>' IN zlv_description WITH '' IGNORING CASE.
  REPLACE ALL OCCURRENCES OF '</strong>' IN zlv_description WITH '' IGNORING CASE.
  REPLACE ALL OCCURRENCES OF '<p>' IN zlv_description WITH '' IGNORING CASE.
  REPLACE ALL OCCURRENCES OF '</p>' IN zlv_description WITH zlv_newline IGNORING CASE.
  REPLACE ALL OCCURRENCES OF '<b>' IN zlv_description WITH '' IGNORING CASE.
  REPLACE ALL OCCURRENCES OF '</b>' IN zlv_description WITH '' IGNORING CASE.
  REPLACE ALL OCCURRENCES OF '<u>' IN zlv_description WITH '' IGNORING CASE.
  REPLACE ALL OCCURRENCES OF '</u>' IN zlv_description WITH '' IGNORING CASE.
  REPLACE ALL OCCURRENCES OF '<i>' IN zlv_description WITH '' IGNORING CASE.
  REPLACE ALL OCCURRENCES OF '</i>' IN zlv_description WITH '' IGNORING CASE.
  REPLACE ALL OCCURRENCES OF '<ul>' IN zlv_description WITH '' IGNORING CASE.
  REPLACE ALL OCCURRENCES OF '</ul>' IN zlv_description WITH '' IGNORING CASE.
  REPLACE ALL OCCURRENCES OF '<li>' IN zlv_description WITH '' IGNORING CASE.
  REPLACE ALL OCCURRENCES OF '</li>' IN zlv_description WITH zlv_newline IGNORING CASE.

  " Links: remove '<a href="' and the '">link text</a>' part,
  " so that (for simple link texts) only the URL remains
  REPLACE ALL OCCURRENCES OF '<a href="' IN zlv_description WITH '' IGNORING CASE.
  REPLACE ALL OCCURRENCES OF REGEX '">[A-Za-z0-9_\~\-+=&[:space:]]*</a>' IN zlv_description WITH '' IGNORING CASE.

  " As printed, the last statement below replaces ':' with ':' and has no effect.
  " The search literals of these two statements were probably HTML entities that
  " were decoded when the blog was published; check them against your alert texts.
  REPLACE ALL OCCURRENCES OF ' ' IN zlv_description WITH '_' IGNORING CASE.
  REPLACE ALL OCCURRENCES OF ':' IN zlv_description WITH ':' IGNORING CASE.

  zrv_desription = zlv_description.

ENDMETHOD.
```
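The tag list in REMOVE_HTML_TAGS must be extended for every new tag that appears in an alert description. The shorter alternative below is not taken from the original implementation. It first turns `</p>` and `</li>` into line breaks and then removes any remaining tag with one regular expression. Unlike the original, it keeps the link text and drops the URL of `<a>` tags, and it leaves HTML entities untouched. The report and class names are hypothetical.

```abap
REPORT zsnow_strip_html_demo.

CLASS lcl_html DEFINITION FINAL.
  PUBLIC SECTION.
    CLASS-METHODS strip
      IMPORTING iv_html        TYPE string
      RETURNING VALUE(rv_text) TYPE string.
ENDCLASS.

CLASS lcl_html IMPLEMENTATION.
  METHOD strip.
    rv_text = iv_html.
    " End of paragraph or list item -> line break
    REPLACE ALL OCCURRENCES OF REGEX '</(p|li)>' IN rv_text
      WITH cl_abap_char_utilities=>newline IGNORING CASE.
    " Remove every remaining tag, e.g. <b>, </h2>, <a href="...">
    REPLACE ALL OCCURRENCES OF REGEX '<[^>]*>' IN rv_text WITH ``.
  ENDMETHOD.
ENDCLASS.

START-OF-SELECTION.
  DATA(lv_text) = lcl_html=>strip( `<p>Disk <b>almost</b> full</p>` ).
  WRITE / lv_text.
```

For the sample input, the result is `Disk almost full` followed by a line break.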

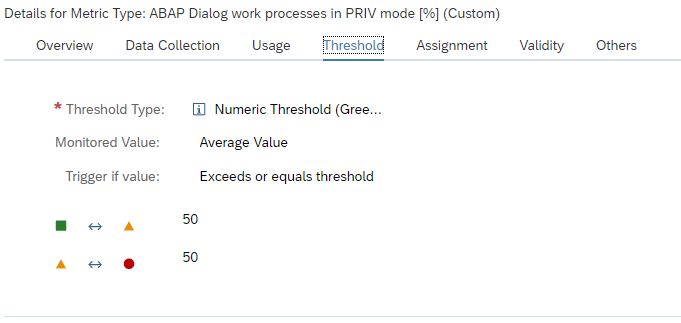

Method to convert severity:

```abap
METHOD convert_severity.

  CASE ziv_sm_severity.
    WHEN 1 OR 2 OR 3 OR 4.
      zrv_sn_severity = 5. " Info
    WHEN 5.
      zrv_sn_severity = 4. " Warning
    WHEN 6.
      zrv_sn_severity = 3. " Minor
    WHEN 7.
      zrv_sn_severity = 2. " Major
    WHEN 8 OR 9.
      zrv_sn_severity = 1. " Critical
    WHEN OTHERS.
      zrv_sn_severity = 0. " Clear
  ENDCASE.

ENDMETHOD.
```

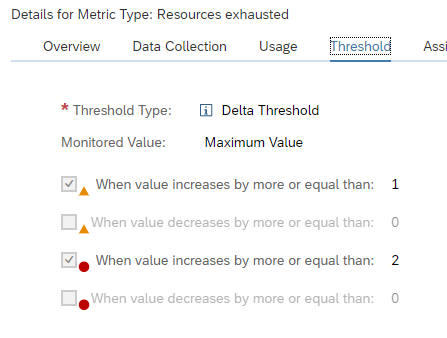

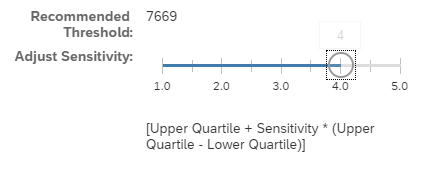

This method converts the SAP Focused Run severity codes 1 to 9 into the ServiceNow severity codes (1 = Critical to 5 = Info, 0 = Clear), as noted in the code comments. Adjust the mapping to your requirements.
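If the mapping changes often, a table-driven variant keeps all values in one place. The sketch below is an alternative to CONVERT_SEVERITY, not the original code. It holds the same values as the CASE statement in a hashed table. It uses type I instead of AC_SEVERITY to stay self-contained, and it returns 0 (Clear) for unknown codes, like WHEN OTHERS. The report and class names are hypothetical.

```abap
REPORT zsnow_severity_map_demo.

CLASS lcl_severity_map DEFINITION FINAL.
  PUBLIC SECTION.
    METHODS constructor.
    METHODS convert
      IMPORTING iv_frun_severity        TYPE i
      RETURNING VALUE(rv_snow_severity) TYPE i.
  PRIVATE SECTION.
    TYPES: BEGIN OF ty_map,
             frun_severity TYPE i,
             snow_severity TYPE i,
           END OF ty_map,
           tt_map TYPE HASHED TABLE OF ty_map WITH UNIQUE KEY frun_severity.
    DATA mt_map TYPE tt_map.
ENDCLASS.

CLASS lcl_severity_map IMPLEMENTATION.
  METHOD constructor.
    " Same values as CONVERT_SEVERITY; change a row to change the mapping
    mt_map = VALUE #(
      ( frun_severity = 1 snow_severity = 5 )   " Info
      ( frun_severity = 2 snow_severity = 5 )
      ( frun_severity = 3 snow_severity = 5 )
      ( frun_severity = 4 snow_severity = 5 )
      ( frun_severity = 5 snow_severity = 4 )   " Warning
      ( frun_severity = 6 snow_severity = 3 )   " Minor
      ( frun_severity = 7 snow_severity = 2 )   " Major
      ( frun_severity = 8 snow_severity = 1 )   " Critical
      ( frun_severity = 9 snow_severity = 1 ) ).
  ENDMETHOD.

  METHOD convert.
    READ TABLE mt_map INTO DATA(ls_map)
      WITH TABLE KEY frun_severity = iv_frun_severity.
    IF sy-subrc = 0.
      rv_snow_severity = ls_map-snow_severity.
    ELSE.
      rv_snow_severity = 0.   " Clear, like WHEN OTHERS in CONVERT_SEVERITY
    ENDIF.
  ENDMETHOD.
ENDCLASS.

START-OF-SELECTION.
  DATA(lo_map) = NEW lcl_severity_map( ).
  " Print the mapping for the codes 0 to 9
  DO 10 TIMES.
    DATA(lv_frun) = sy-index - 1.
    DATA(lv_snow) = lo_map->convert( lv_frun ).
    WRITE: / lv_frun, '->', lv_snow.
  ENDDO.
```

In a real system, the table could also be filled from a customizing table, so that the mapping can be changed without a code change.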

The sending code

The sending code is as follows (for more details on ABAP REST calls, read this blog):

```abap
METHOD post.

  " Initialize the HTTP client
  me->init_http_client( ).

  " Set header: POST with the configured content type
  me->zgo_http_client->request->set_method( method = if_http_request=>co_request_method_post ).
  me->zgo_http_client->request->set_content_type( content_type = zgc_content_type ).

  " Set body
  me->zgo_http_client->request->set_cdata( EXPORTING data = ziv_event_messages ).

  " Send the data (POST)
  me->send( ).

  " Receive the response; needed to get the HTTP status code
  me->receive( ).

  " Get the HTTP status code and status description
  me->zgo_http_client->response->get_status(
    IMPORTING
      code   = zrs_status-code
      reason = zrs_status-reason ).

ENDMETHOD.
```

The helper methods called from POST:

```abap
METHOD init_http_client.

  " Create the HTTP client from the SM59 destination stored in ZGC_DESTINATION
  cl_http_client=>create_by_destination(
    EXPORTING
      destination              = zgc_destination     " Logical destination (specified in function call)
    IMPORTING
      client                   = me->zgo_http_client " HTTP Client Abstraction
    EXCEPTIONS
      argument_not_found       = 1
      destination_not_found    = 2
      destination_no_authority = 3
      plugin_not_active        = 4
      internal_error           = 5
      OTHERS                   = 6 ).

  IF sy-subrc NE 0.
    me->raise_exception_for_sys_msg( ).
  ENDIF.

ENDMETHOD.

METHOD send.

  me->zgo_http_client->send(
    EXCEPTIONS
      http_communication_failure = 1
      http_invalid_state         = 2
      http_processing_failed     = 3
      http_invalid_timeout       = 4
      OTHERS                     = 5 ).

  IF sy-subrc NE 0.
    me->raise_exception_for_sys_msg( ).
  ENDIF.

ENDMETHOD.

METHOD receive.

  me->zgo_http_client->receive(
    EXCEPTIONS
      http_communication_failure = 1
      http_invalid_state         = 2
      http_processing_failed     = 3
      OTHERS                     = 4 ).

  IF sy-subrc NE 0.
    me->raise_exception_for_sys_msg( ).
  ENDIF.

ENDMETHOD.

METHOD raise_exception_for_sys_msg.

  " Wrap the current system message (SY-MSGID, SY-MSGNO, SY-MSGV1..4) in ZCX_SNOW_MID_API
  RAISE EXCEPTION TYPE zcx_snow_mid_api
    EXPORTING
      textid = VALUE scx_t100key(
                 msgid = syst-msgid
                 msgno = syst-msgno
                 attr1 = syst-msgv1
                 attr2 = syst-msgv2
                 attr3 = syst-msgv3
                 attr4 = syst-msgv4 ).

ENDMETHOD.
```

Important: the constant ZGC_DESTINATION contains the name of the type H RFC destination to the MID server that you created in SM59.

Helper code: zcl_snow_event_message

Method ZIF_SNOW_MESSAGE~GET_JSON, importing ZIV_RESOULTION_STATE type STRING and returning ZRV_JSON type /UI2/CL_JSON=>JSON. Its code is the same as the GET_JSON listing earlier in this blog (section "The code for the message content").

Method CONSTRUCTOR, importing ZII_ALERT type ref to IF_ACC_MEA. Code:

```abap
METHOD constructor.
  " Keep the alert object; GET_JSON reads all event data from it
  me->zgi_alert = zii_alert.
ENDMETHOD.
```

Method CONVERT_ALERT_TIMESTAMP, importing ZIV_TIMESTAMP type TIMESTAMP and returning ZRV_DATE_TIME type STRING. Its code is the same as the CONVERT_ALERT_TIMESTAMP listing earlier in this blog.

Method CONVERT_SEVERITY, importing ZIV_SM_SEVERITY type AC_SEVERITY and returning ZRV_SN_SEVERITY type INT4. Its code is the same as the CONVERT_SEVERITY listing earlier in this blog.

Method REMOVE_HTML_TAGS, importing ZIV_DESCRIPTION type STRING and returning ZRV_DESRIPTION type STRING. Its code is the same as the REMOVE_HTML_TAGS listing earlier in this blog.

Helper code: zcx_snow_mid_api

Exception class ZCX_SNOW_MID_API is an exception class in the CX_ROOT hierarchy that implements interface IF_T100_MESSAGE and redefines the constructor. The constructor inputs are TEXTID (like IF_T100_MESSAGE=>T100KEY) and PREVIOUS (like PREVIOUS).

Code:

```abap
METHOD constructor ##ADT_SUPPRESS_GENERATION.
  super->constructor( previous = previous ).
  CLEAR me->textid.
  IF textid IS INITIAL.
    if_t100_message~t100key = if_t100_message=>default_textid.
  ELSE.
    if_t100_message~t100key = textid.
  ENDIF.
ENDMETHOD.
```

Helper class zcl_snow_bi_logger.

Method: ZIF_SNOW_BI_LOGGER~BAL_LOG_SAVE:

```abap
METHOD zif_snow_bi_logger~bal_log_save.
  IF me->zgo_bal_log IS BOUND.
    me->zgo_bal_log->save( ziv_commit = abap_true ).
  ENDIF.
ENDMETHOD.
```

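Method: ZIF_SNOW_BI_LOGGER~BAL_LOG_ADD_MESSAGE

The interface also contains method ZIF_SNOW_BI_LOGGER~BAL_LOG_ADD_EXCEPTION (import parameter ZIX_SNOW_EXCEPTION type ref to ZCX_SNOW_MID_API), but its code is not part of this blog. The code below is for BAL_LOG_ADD_MESSAGE, which is called from the BADI and from SEND_MESSAGE_TO_SNOW: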

```abap
METHOD zif_snow_bi_logger~bal_log_add_message.

  IF me->zgo_bal_log IS BOUND.
    me->zgo_bal_log->add_message(
      EXPORTING
        ziv_msgty = ziv_msgty
        ziv_msgid = ziv_msgid
        ziv_msgno = ziv_msgno
        ziv_msgv1 = ziv_msgv1
        ziv_msgv2 = ziv_msgv2
        ziv_msgv3 = ziv_msgv3
        ziv_msgv4 = ziv_msgv4 ).
  ENDIF.

ENDMETHOD.
```

Method: ZIF_SNOW_BI_LOGGER~BAL_LOG_ADD_FREE_TEXT

Inputs: ZIV_MSGTY type SYMSGTY and ZIV_TEXT type ZCL_BC_BAL_LOG=>ZGTV_FREE_TEXT (defined as TYPES zgtv_free_text TYPE c LENGTH 200).

Source:

```abap
METHOD zif_snow_bi_logger~bal_log_add_free_text.

  IF me->zgo_bal_log IS BOUND.
    me->zgo_bal_log->add_free_text(
      EXPORTING
        ziv_msgty = ziv_msgty
        ziv_text  = ziv_text ).
  ENDIF.

ENDMETHOD.
```

Constructor code:

```abap
METHOD constructor.

  " Create the application log for the object/subobject defined in ZIF_SNOW_CONSTANTS
  me->zgo_bal_log = zcl_bc_bal_log=>factory(
    ziv_object     = zif_snow_constants=>zgc_bal_log-object
    ziv_sub_object = zif_snow_constants=>zgc_bal_log-sub_object ).

ENDMETHOD.
```
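Class ZCL_BC_BAL_LOG itself is not shown in this blog. As a starting point, the sketch below uses the standard application log function modules BAL_LOG_CREATE, BAL_LOG_MSG_ADD_FREE_TEXT and BAL_DB_SAVE. A wrapper like this usually builds on these modules, but that is an assumption, since the class is not shown. The report name, log object ZSNOW and subobject ALERT are hypothetical. The object and subobject must first be created in transaction SLG0. After the commit, the entry can be displayed in SLG1.

```abap
REPORT zsnow_bal_log_demo.

START-OF-SELECTION.
  DATA lv_log_handle  TYPE balloghndl.
  DATA lt_log_handles TYPE bal_t_logh.

  " Hypothetical object/subobject; both must exist in transaction SLG0
  DATA(ls_log_header) = VALUE bal_s_log( object    = 'ZSNOW'
                                         subobject = 'ALERT'
                                         extnumber = 'FRUN to ServiceNow' ).

  " 1. Create the log in memory
  CALL FUNCTION 'BAL_LOG_CREATE'
    EXPORTING
      i_s_log      = ls_log_header
    IMPORTING
      e_log_handle = lv_log_handle
    EXCEPTIONS
      OTHERS       = 1.
  IF sy-subrc <> 0.
    RETURN.
  ENDIF.

  " 2. Add a free-text message (type I = information)
  CALL FUNCTION 'BAL_LOG_MSG_ADD_FREE_TEXT'
    EXPORTING
      i_log_handle = lv_log_handle
      i_msgty      = 'I'
      i_text       = 'Alert forwarded to ServiceNow'
    EXCEPTIONS
      OTHERS       = 1.

  " 3. Save the log to the database and commit, so that it shows up in SLG1
  APPEND lv_log_handle TO lt_log_handles.
  CALL FUNCTION 'BAL_DB_SAVE'
    EXPORTING
      i_t_log_handle = lt_log_handles
    EXCEPTIONS
      OTHERS         = 1.
  IF sy-subrc = 0.
    COMMIT WORK.
  ENDIF.
```

The explicit COMMIT WORK corresponds to the `ziv_commit = abap_true` flag passed to SAVE in BAL_LOG_SAVE above.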

Using the ServiceNow web services

The ServiceNow web services, including instructions on how to download the WSDL, are published on the ServiceNow help pages.

Download the WSDL file and follow the instructions from this blog to import it into SE80 and generate the ABAP web service proxy object. In SOAMANAGER, set up the logical port to your ServiceNow installation and make sure the connection works.

Then implement the ABAP code as above.

Instead of calling the REST service, you now call the generated ABAP proxy:

```abap
* Data Declarations
DATA: zcl_proxy TYPE REF TO zco_zbapidemowebservice, " Proxy Class
      zdata_in  TYPE zzbapidemo,                     " Proxy Input
      zdata_out TYPE zzbapidemoresponse,             " Proxy Output
      zfault    TYPE REF TO cx_root.                 " Generic Fault

TRY.
*   Instantiate the proxy class providing the logical port name
*   (inside TRY, because the proxy constructor can raise CX_AI_SYSTEM_FAULT)
    CREATE OBJECT zcl_proxy EXPORTING logical_port_name = 'ZDEMOWS'.

*   Set fixed values
    zdata_in-zimport = '1'.

    zcl_proxy->zbapidemo( EXPORTING input  = zdata_in
                          IMPORTING output = zdata_out ).

    WRITE: / zdata_out-zexport.

  CATCH cx_root INTO zfault.
*   Here is the place for error handling
ENDTRY.
```

Of course, in your implementation you use the input and output types generated for your ServiceNow service instead of the demo types above.

<< This blog was originally posted on SAP Focused Run Guru by Frank Umans. Repost done with permission. >>